Bundle your custom apps in a Debian package

It's a Wrap

You've got a cloud. It's great. You can scale, and you've got redundancy. But you have about 20 scripts for a bunch of tasks (e.g., one for when an instance is booted up and another for when its IP changes), and these scripts aren't getting any shorter; they're getting better and longer. If you want to manage them in your favorite versioning software (which I hope is Git, but might be something else), how do you get them onto new instances simply?

Enter the not-new-at-all technology of Debian packages. They are straightforward to use across any Debian-based Linux and simple to create, and they provide an ideal way of containing and releasing your scripts.

In this article, I'll show how to create Debian packages and how to install them (which you probably already know). And, I'll explain how the process will make you feel more comfortable about pushing changes live across your cloud. I talked to cloudy people about how to get code onto new instances, and I tried lots of different things, but the Debian package is such a solid, reliable format that I just had to share it.

Debian Packages

Debian packages are simply archives that are very easy to install, usually in one line. If you've ever worked with packages in Ubuntu or any other Debian-related Linux, you've probably needed to download a package from an online source and install it:

apt-get install ./blarblar.deb

On the inside, a Debian package is an archive of binary files, scripts, and any other resources an application needs, plus a handful of control files that the various command-line tools use to install the package.

Because this standard package format is so easy to install on any Debian-based Linux, it's a great way to get standard scripts and custom applications into the cloud. Often you need a few lines to configure a new instance or to connect the instance to the rest of your cloud, and storing all those scripts in a package keeps things very neat.

A Debian package has two parts. The part I'm most interested in is the set of control files, which live in a directory called DEBIAN. Control files tell Debian what the package contains, what it's called, its version, and so on. The second part is the code, which I'll look at in detail later.

Creating the Package

To show this process at work, I'll create a simple package. Suppose you need a simple page that can sit on your server until an application is installed. Good practice dictates that your instances should fail nicely, so if you start 10 instances when your app gets 6 million tweets, you at least want them to deliver a nice page before they're ready to do business.

To begin,

- Create a directory called myserver and the directories and files inside it:

  ./DEBIAN/
  ./var/www/index.html

- Put the code shown in Listing 1 in ./DEBIAN/control.
- Put the file shown in Listing 2 in ./var/www/index.html.

Listing 1: Control Files

Package: myserver
Version: 0.0.1
Section: server
Priority: optional
Architecture: all
Essential: no
Installed-Size: 1024
Maintainer: Dan Frost <dan@3ev.com>
Description: My scripts for running stuff in the cloud

Listing 2: Message Page

<html>
<head>
<title>We're getting there...</title>
</head>
<body>
<h1>Give us a moment.</h1>
<p>We're just getting some more machines plugged in ...</p>
</body>
</html>

If the path to the index.html file looks familiar, it's because the file structure inside your package mirrors exactly the structure in the target instance. If you want a file in /var/some/where/here, just create that path inside your package project.
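The layout described above can be scaffolded from the shell. The following is a minimal sketch, assuming you run it in an empty working directory; the field values come from Listing 1 and the page from Listing 2, while the explicit chmod is an added precaution for restrictive umask settings.

```shell
#!/bin/sh
# Sketch: scaffold the myserver package tree described above.
set -eu

# The package root mirrors the target filesystem; DEBIAN/ holds the control files.
mkdir -p myserver/DEBIAN myserver/var/www
# dpkg-deb expects the DEBIAN directory to have permissions between 0755 and 0775.
chmod 755 myserver/DEBIAN

# Control file, as in Listing 1.
cat > myserver/DEBIAN/control <<'EOF'
Package: myserver
Version: 0.0.1
Section: server
Priority: optional
Architecture: all
Essential: no
Installed-Size: 1024
Maintainer: Dan Frost <dan@3ev.com>
Description: My scripts for running stuff in the cloud
EOF

# Holding page, as in Listing 2.
cat > myserver/var/www/index.html <<'EOF'
<html>
<head>
<title>We're getting there...</title>
</head>
<body>
<h1>Give us a moment.</h1>
<p>We're just getting some more machines plugged in ...</p>
</body>
</html>
EOF

# Show what will end up in the package.
find myserver -type f | sort
```

Keeping the scaffold in a script means a colleague can recreate the package skeleton from a fresh checkout without guessing at paths.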

Once you've created this amazing page, package it up with:

$ dpkg-deb --build myserver
dpkg-deb: building package `myserver' in `myserver.deb'

When you look in the directory, you'll see a file called myserver.deb. Now that your project is all packaged up, you can install it. But, before you do, take a look at what's inside:

$ dpkg-deb --contents myserver.deb

then install:

$ sudo dpkg -i myserver.deb

After running this command, you'll find the HTML file sitting there for when Apache starts (Listing 3):

Listing 3: HTML File

$ cat /var/www/index.html
<html>
<head>
<title>We're getting there...</title>
</head>
...
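Before installing on a real instance, it can be reassuring to check the package without root privileges. The following is a minimal sketch, assuming dpkg-deb is on the path; the staging directory name and the cut-down stand-in package tree are illustrative and not part of the setup above.

```shell
#!/bin/sh
# Sketch: build a package and inspect it without installing anything.
set -eu

# A minimal stand-in tree so the sketch runs on its own.
mkdir -p myserver/DEBIAN myserver/var/www
chmod 755 myserver/DEBIAN
printf '%s\n' \
  'Package: myserver' \
  'Version: 0.0.1' \
  'Architecture: all' \
  'Maintainer: Dan Frost <dan@3ev.com>' \
  'Description: My scripts for running stuff in the cloud' \
  > myserver/DEBIAN/control
echo '<h1>Give us a moment.</h1>' > myserver/var/www/index.html

if command -v dpkg-deb >/dev/null 2>&1; then
  dpkg-deb --build myserver myserver.deb
  # Read a single control field straight from the archive.
  dpkg-deb --field myserver.deb Version
  # Unpack the payload into a scratch directory instead of /.
  rm -rf staging
  mkdir staging
  dpkg-deb --extract myserver.deb staging
  cat staging/var/www/index.html
else
  echo "dpkg-deb not found; run this on a Debian-based system" >&2
fi
```

Extracting into a scratch directory shows exactly which files would land where, without touching the running system.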

The next step is to create a skeleton set of scripts for a cloud provisioning service, such as Scalr or RightScale [1]. Indeed, the scripts I use for hosting and development servers all sit inside one Debian package.

A Cloudy Package

A tiny HTML file isn't all that useful in the cloud, so I'll look at something a bit more useful. Server configuration can be set from the Debian package simply by placing your preferred configuration file in the package:

./etc/apache2/conf.d/our-config.conf

As long as Apache is configured to include this file (which, in Ubuntu, it often is), it will take effect right away. Although you might want to control this with tools such as Puppet after deployment, starting the instance with a good configuration will help keep the environment sane from the outset.

Cloud hosting becomes difficult when you use strange configurations – creating exceptions for some apps or generally working against the grain (e.g., using Tomcat's configuration style and Apache's config directories). Avoid customizing the environment too much because it will mean extra maintenance in the future and could limit how you can scale.

Another common script for cloud servers will run tasks at certain points in the instance's life cycle. For instance, a service such as Scalr will run scripts on various events, such as OnHostUp, OnHostInit, and OnIPAddressChanged. You can create some scripts for these events in your Debian package:

./usr/local/myserver/bin/on-host-up.sh
./usr/local/myserver/bin/on-ip-address-changed.sh

The first script should download an HTML file or a PHP file from either S3 or your existing repository and place it in the document root:

cd /var/www/
wget -O tmp.tgz http://mybucket.s3.amazonaws.com/website.tgz
tar xzf tmp.tgz
service apache2 restart

These scripts will be like any other script you would write, with one important difference: no more human interaction. Everything has to run automatically when new instances start up, and you really don't want your script waiting for a human that doesn't exist.
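One way to make the first script safe for unattended runs is to fail fast and keep it quiet. The sketch below writes such a script into the package tree and syntax-checks it; the bucket URL is the placeholder used above, and the temporary-file handling and the SITE_URL and DOCROOT variable names are assumptions added for illustration, not something Scalr requires.

```shell
#!/bin/sh
# Sketch: place a non-interactive on-host-up.sh inside the package tree.
set -eu

mkdir -p myserver/usr/local/myserver/bin

cat > myserver/usr/local/myserver/bin/on-host-up.sh <<'EOF'
#!/bin/sh
# Stop at the first failing command or unset variable.
set -eu

# Placeholder bucket; replace with your own.
SITE_URL="http://mybucket.s3.amazonaws.com/website.tgz"
DOCROOT="/var/www"

# Download to a temporary file so a failed transfer leaves the docroot untouched.
tmp=$(mktemp)
trap 'rm -f "$tmp"' EXIT

wget -q -O "$tmp" "$SITE_URL"
tar xzf "$tmp" -C "$DOCROOT"
service apache2 restart
EOF

chmod 755 myserver/usr/local/myserver/bin/on-host-up.sh
# Parse the script without running it to catch syntax errors early.
sh -n myserver/usr/local/myserver/bin/on-host-up.sh
```

Because the script exits on the first error, a broken download shows up as a failed event in the provisioning service rather than as a half-deployed site.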

Next, package your project into a .deb file and place it somewhere public from which you can download. This might be where you host, but it is much better to put it somewhere resilient, like S3 [2].

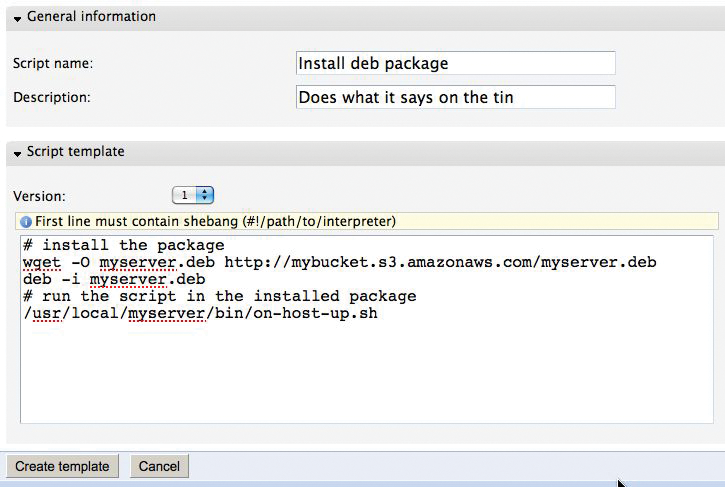

Next, log in to Scalr and add the following lines to a new script that will run on the event OnHostUp (Figure 1).

# install the package
wget -O myserver.deb http://mybucket.s3.amazonaws.com/myserver.deb
dpkg -i myserver.deb
# run the script
/usr/local/myserver/bin/on-host-up.sh

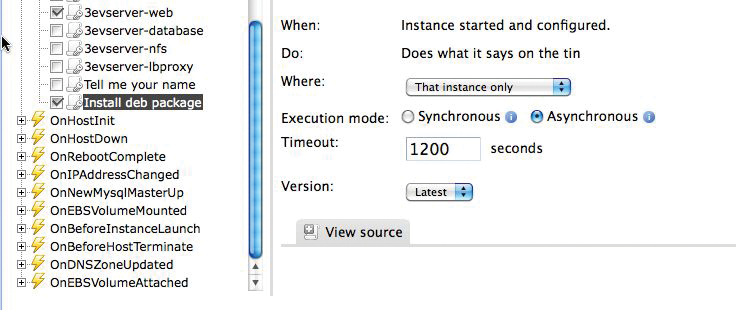

Save the Scalr script under the name of the event that you want to trigger it and go to the farm configuration. Now you can add your neatly organized scripts without having to edit scripts via a web interface (Figure 2). If you have a team working on your cloud hosting, you can even start using standard code management, such as Git or SVN, to version your cloud environment's bootup and configuration scripts.

A second event script, which is called each time the IP address changes, would typically update Dynamic DNS with a one-liner (you'll need to set up your Dynamic DNS account first):

curl 'http://www.dnsmadeeasy.com/servlet/updateip?username=myuser&password=mypassword&id=99999999&ip=123.231.123.231'

Once you've placed this code in the script on-ip-address-changed.sh, simply package it up into your .deb file, upload it to S3 again, and start a new instance. With this approach, testing small changes takes a little longer, but because the scripts are all in a .deb, you can test them more easily outside the cloud.
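In a real on-ip-address-changed.sh, the address would come from the event rather than being hard-coded. The following is a sketch, assuming the new IP arrives as the first argument (how your provisioning service actually passes it is not covered here) and using the placeholder credentials from the one-liner above. DRY_RUN is a hypothetical switch that defaults to printing the command so nothing is sent by accident.

```shell
#!/bin/sh
# Sketch: build the Dynamic DNS update URL from parameters.
set -eu

# Assemble the update URL; the field names follow the one-liner above.
build_update_url() {
  printf 'http://www.dnsmadeeasy.com/servlet/updateip?username=%s&password=%s&id=%s&ip=%s\n' \
    "$1" "$2" "$3" "$4"
}

# Assumption: the new address is passed as the first argument.
NEW_IP="${1:-123.231.123.231}"
url=$(build_update_url myuser mypassword 99999999 "$NEW_IP")

# DRY_RUN is a hypothetical switch; set DRY_RUN=0 to send the request.
if [ "${DRY_RUN:-1}" = "1" ]; then
  echo "would run: curl -fsS '$url'"
else
  curl -fsS "$url"
fi
```

Quoting the URL matters: an unquoted & would send curl to the background and drop the remaining parameters.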

The Package in Production

Everything thus far might feel a bit heavy-handed. I put a lot of effort into getting a short script up onto a cloud instance. But suppose you have a running server farm, and you need to update some scripts across the farm.

Several cloud services let you edit scripts via a web interface, which is fine up to a point, but beyond a few lines, you will start pining for Emacs or your favorite editor. A custom .deb package makes it easy to create and test the script on local machines or a development cloud before uploading the final version to the production environment.

Installing the script on instances is simply a matter of dpkg -i myserver.deb, so you can install, run arbitrary tests, and repeatedly verify that your cloud scripts are stable before they hit the live environment. And you should!

When everything is stable, upload to S3, which you might want to script as well:

s3cmd put myserver.deb s3://mybucket/myserver.deb
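A release script might also bump the Version field so you can tell which build an instance is running. The following is a sketch only, assuming a MAJOR.MINOR.PATCH version as in Listing 1; the stand-in control file and the UPLOAD switch are hypothetical, and the build and upload steps run only where the tools are present.

```shell
#!/bin/sh
# Sketch: bump the patch number in DEBIAN/control before a release.
set -eu

# Stand-in control file so the sketch runs on its own.
mkdir -p myserver/DEBIAN
chmod 755 myserver/DEBIAN
printf '%s\n' 'Package: myserver' 'Version: 0.0.1' 'Architecture: all' \
  'Maintainer: Dan Frost <dan@3ev.com>' \
  'Description: My scripts for running stuff in the cloud' \
  > myserver/DEBIAN/control

control=myserver/DEBIAN/control
current=$(sed -n 's/^Version: //p' "$control")
# Split MAJOR.MINOR.PATCH and increment the last component.
prefix=${current%.*}
patch=${current##*.}
next="$prefix.$((patch + 1))"

# Rewrite the Version line via a temporary file (portable across sed variants).
sed "s/^Version: .*/Version: $next/" "$control" > "$control.tmp"
mv "$control.tmp" "$control"
echo "Bumped $current -> $next"

# Build and upload only where the tools exist.
if command -v dpkg-deb >/dev/null 2>&1; then
  dpkg-deb --build myserver myserver.deb
fi
# UPLOAD is a hypothetical switch; set UPLOAD=1 to push to the bucket.
if command -v s3cmd >/dev/null 2>&1 && [ "${UPLOAD:-0}" = "1" ]; then
  s3cmd put myserver.deb s3://mybucket/myserver.deb
fi
```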

Then, all you need is a corresponding script to run on your instances. Create a new script in Scalr or RightScale that downloads and installs the latest version:

wget -O myserver.deb http://mybucket.s3.amazonaws.com/myserver.deb
dpkg -i myserver.deb

The ability to test server configuration in the cloud, for the cloud, is really important.

If you've been running nice chunky servers for years, you wouldn't make changes to them unless you were 100 percent sure, but with cloud computing, you can prototype your configurations and settle your nerves before putting things live.

When your cloud is running, you will want every opportunity to test the scripts, so being able to install, run, and test them on any instances is valuable.

After Installation: Uninstall

So far, Debian packages might just look like glorified tarballs, so why not just tar up your scripts? Well … they're better than that. Debian packages can carry maintainer scripts that run at set points during installation and removal; the two I use here are post-install and pre-uninstall. Once your Debian package's files have been copied to the filesystem, the post-install script, ./DEBIAN/postinst, is run, and when you uninstall, Debian runs ./DEBIAN/prerm before removing your files.

With these scripts, you can install software, start services, and call a monitoring system to tell it exactly what's going on with the new instance.

For example, open ./DEBIAN/postinst and add something like:

curl "http://my-monitor.example.com/?event=installed-apache&server=$SERVER_NAME"

How you keep your monitoring systems informed depends on what you're running, but you can add arbitrary commands here to keep yourself happy. A more typical post-install task is sym-linking your scripts into the standard path:

ln -s /usr/local/myserver/bin/on-host-up.sh /usr/bin/on-host-up.sh

Anything that gets your scripts working, such as starting any services that are provided or used by your scripts, should be done in the post-install script:

service apache2 start
service my-monitor start

However, this is not where you install your web app's code, nor is it where you grab the latest data. Stick to getting the helper scripts running in the Debian hooks and installing your site from the scripts inside your package.

Remember that the key to cloud computing is scaling without friction. Your scripts must install themselves without the need for checking the OS afterward, so use the hooks to leave everything ready to go live.
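The hooks described in this section could be added to the package tree along these lines. This is a sketch, not a definitive pair of maintainer scripts: the use of ln -sf (so a reinstall does not fail on an existing link) and the cleanup in prerm are assumptions added for illustration.

```shell
#!/bin/sh
# Sketch: add post-install and pre-removal hooks to the package tree.
set -eu

mkdir -p myserver/DEBIAN
chmod 755 myserver/DEBIAN

cat > myserver/DEBIAN/postinst <<'EOF'
#!/bin/sh
set -e
# Make the helper script available on the standard path.
ln -sf /usr/local/myserver/bin/on-host-up.sh /usr/bin/on-host-up.sh
# Start the services the scripts rely on.
service apache2 start
EOF

cat > myserver/DEBIAN/prerm <<'EOF'
#!/bin/sh
set -e
# Remove the symlink created by postinst.
rm -f /usr/bin/on-host-up.sh
EOF

# dpkg-deb refuses maintainer scripts that are not executable.
chmod 755 myserver/DEBIAN/postinst myserver/DEBIAN/prerm
# Parse both hooks without running them.
sh -n myserver/DEBIAN/postinst
sh -n myserver/DEBIAN/prerm
```

Having prerm undo what postinst did keeps an uninstall from leaving dangling links behind.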

Why It All Makes Sense

To finish, I'll look at a real-world example. Suppose you want to change your proxy from Apache to HAProxy, and you want your web servers to host some extra code because this makes your app more scalable. Instead of changing to HAProxy on the instance, you create the script that installs and configures HAProxy, but you do this on your local machine. When you're happy, you commit this into your Debian package and install it on some cloud instances for testing.

When your HAProxy scripts all work fine, simply push your Debian package, along with the new script, up to S3. Next, just terminate your existing proxy instance and wait for a new one to replace it; the replacement will run the new scripts, installing and running HAProxy instead of whatever you had before.

To get extra code onto an instance, just pull it by using SVN, Git, or Wget; put it into place; and the work is done. So, if you have a huge repository of PDFs that never change or a massive archive database, your scripts can copy this down to instances so that each runs independently.

Anything you can do on the command line can be scripted, and packing up your common tasks into a Debian package means your best scripts and best config will be used on all of your instances.

Finally, remember that being scalable means being friction-free. The people I work with use Debian packages, because if the package installs, we've won half the battle: Our scripts are on the instance. It's a standard and convenient way of deploying, and it works every time.