Monitoring virtualization environments with Nagios and Icinga

Performance Check

Virtualization technology established itself in data centers many years ago and has its roots in host partitioning in the mainframe landscape. More than 35 years ago, IBM laid down the foundations with its mainframe logical partitions (LPARs). Today, data centers rely on virtualization, and many modern architecture concepts such as cloud computing would be unthinkable without it.

Because rolling out new virtual machines is easy, IT management best practices are sometimes ignored. For example, administrators forget to create Configuration Management Database (CMDB) entries or documentation, or they postpone monitoring indefinitely.

IT Service Must-Haves

Monitoring available resources and observing Service Level Agreements are some of the key competencies of IT operations that need to respond flexibly to new requirements. A variety of plugins exist for the free Nagios and Icinga monitoring systems to help administrators monitor today's virtualization solutions and identify long-term capacity bottlenecks.

In this article, I will introduce some solutions for monitoring virtualized environments and identifying changes.

What Should You Monitor?

The first question is: What makes a virtualized system so special? The fixed assignment of resources to a virtualized system seems to necessitate the same basic monitoring as a physical system. But, how do you define basic, and what do you really need to monitor at the operating system level?

Hard disks are typically the most rewarding place to look if you want to detect failures early or avoid them altogether. For this reason, you will want to monitor both the free space on disk and the I/O operations per second.

Nagios has a check_disk [1] plugin that reports disk usage and logs data for capacity-management tasks. Monitoring disk I/O is a useful extension to disk checking. After installing the check_diskio [2] plugin, you can easily keep track of disk I/O and define alert thresholds. Because today's systems often depend more on storage than on CPU power, increased disk I/O is frequently identifiable days or weeks before other problems.
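To illustrate how such a threshold check works in principle, the following is a minimal Python sketch of a free-space check that follows the usual plugin convention of exit codes 0 (OK), 1 (WARNING), 2 (CRITICAL), and 3 (UNKNOWN). It is not the check_disk implementation; the function names and the default thresholds of 20 and 10 percent are assumptions chosen for the example.

```python
import shutil

# Exit codes used by Nagios/Icinga plugins by convention
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3


def evaluate_free_space(total_bytes, free_bytes, warn_pct=20.0, crit_pct=10.0):
    """Return (exit_code, message) for the given totals.

    warn_pct and crit_pct are hypothetical defaults: the check alerts
    when the percentage of free space drops to or below them.
    """
    if total_bytes <= 0:
        return UNKNOWN, "DISK UNKNOWN - total size not available"
    free_pct = 100.0 * free_bytes / total_bytes
    if free_pct <= crit_pct:
        state, label = CRITICAL, "CRITICAL"
    elif free_pct <= warn_pct:
        state, label = WARNING, "WARNING"
    else:
        state, label = OK, "OK"
    # Everything after the pipe is performance data for graphing tools
    perfdata = f"free_pct={free_pct:.1f}%;{warn_pct}:;{crit_pct}:;0;100"
    return state, f"DISK {label} - {free_pct:.1f}% free | {perfdata}"


def check_path(path="/", warn_pct=20.0, crit_pct=10.0):
    """Read the real filesystem figures for path and evaluate them."""
    usage = shutil.disk_usage(path)
    return evaluate_free_space(usage.total, usage.free, warn_pct, crit_pct)
```

A wrapper script would print the message returned by check_path() and pass the first element of the tuple to sys.exit() so that the monitoring server can pick up the state.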

Although the Nagios Remote Plugin Executor (NRPE) is the standard in many environments, SSH is preferable, not only because it passes firewalls easily and receives vendor updates, but above all because of its proven handling of keys and configurations in the Linux and Unix field.

Although the plugins I just mentioned are designed for Linux and Unix systems, administrators will typically need to monitor the system load and I/O performance on Windows systems, too. The typical approach here is to use NSClient++ agents [3] and the matching server plugins: check_nt [4] or check_nrpe. Windows counters are then queried:

check_nrpe -H $HOSTADDRESS$ -c CheckCounter -a "\\System\\File Data Operations/Sec" ShowAll MaxWarn=20000 MaxCrit=30000

Besides using check_disk, you can avoid failures by monitoring various processes. Standard plugins such as check_procs [5] and the CheckProcState module in NSClient++ [6] help you do so. Thus, you can monitor the maximum number of instances of a specific process on Windows and identify potential sources of failure in good time:

check_nrpe -H IP -p 5666 -c CheckProcState -a MaxCritCount=50 app.exe=started

Additionally, monitoring systems can, of course, monitor other services. The Nagios/Icinga portal [7] and various wikis [8] contain innumerable examples thanks to the huge popularity of Nagios and Icinga.

The type of monitoring you do will depend greatly on your virtualization solution.

Virtualization Choices

Whereas container-based technologies (e.g., OpenVZ, Linux VServer, or Solaris Zones) are closed run-time environments that do not launch a separate operating system kernel, system virtualization and paravirtualization (as supported by VMware, Xen, or KVM) only grant access to a pool of resources.

The host system decides how this pool is used and controls the available resources independently of the virtualization environment. This setup gives rise to vastly different monitoring scenarios, with container-based solutions typically residing in homogeneous environments, whereas paravirtualization typically involves a variety of host systems.

Monitoring OpenVZ and Solaris Zones

OpenVZ can run multiple containers on one host thanks to a patched Linux kernel. For each container, OpenVZ creates a structure in the process area of the Linux system.

Although earlier versions provided a flat layout with files like /proc/user_beancounters, /proc/user_beancounters_sub, and /proc/user_beancounters_debug, the current version arranges these counters in a hierarchical structure.

The counters are listed by container UID and can be viewed easily with the use of filesystem commands. On the basis of the sample plugin in the OpenVZ wiki, Robert Nelson developed check_openvz [9].

By adding threshold values, you can check for certain limits being exceeded (e.g., kmemsize and numproc) and modify the configuration to reflect actual resource requirements. To monitor Solaris Zones in addition to the hosted operating system, you need the zoneadm command.
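The following Python sketch shows how such a threshold check on the beancounters could look. It is not the check_openvz code; it assumes the classic flat /proc/user_beancounters layout with the columns held, maxheld, barrier, limit, and failcnt, and both the sample values and the 80 percent warning ratio are made up for illustration.

```python
FIELDS = ("held", "maxheld", "barrier", "limit", "failcnt")


def parse_beancounters(text):
    """Parse beancounter text into {container_id: {resource: {field: int}}}."""
    result = {}
    current = None
    for line in text.splitlines():
        parts = line.split()
        # Skip blank lines, the version line, and the column header
        if not parts or parts[0] in ("Version:", "uid"):
            continue
        # A token such as "101:" starts the block of a new container
        if parts[0].endswith(":"):
            current = parts[0].rstrip(":")
            result[current] = {}
            parts = parts[1:]
        if current is None or len(parts) != 6:
            continue
        result[current][parts[0]] = dict(zip(FIELDS, map(int, parts[1:])))
    return result


def find_problems(counters, watch=("kmemsize", "numproc"), warn_ratio=0.8):
    """List (container, resource, reason) tuples that deserve attention."""
    problems = []
    for ctid, resources in counters.items():
        for name in watch:
            res = resources.get(name)
            if res is None:
                continue
            if res["failcnt"] > 0:
                problems.append((ctid, name, "failcnt"))
            elif res["barrier"] > 0 and res["held"] >= warn_ratio * res["barrier"]:
                problems.append((ctid, name, "near barrier"))
    return problems


# Hypothetical sample data in the assumed flat layout
SAMPLE = """\
Version: 2.5
       uid  resource           held    maxheld    barrier      limit    failcnt
      101:  kmemsize        2670652    2974720   14372700   14790164          0
            numproc             230        240        240        240          3
            lockedpages           0          0       2048       2048          0
"""

print(find_problems(parse_beancounters(SAMPLE)))
```

A non-zero failcnt means that the container has already hit a limit, so the sketch reports it before looking at how close the current value is to the barrier.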

The check_zones tool [10] provides a wrapper plugin that calls this command and outputs the zone status, whereas prstat -Z provides an interesting alternative (Listing 1).

Listing 1: prstat

    # prstat -Z
       PID USERNAME  SIZE   RSS STATE  PRI NICE      TIME  CPU PROCESS/NLWP
       173 daemon     17M   11M sleep   59    0   3:18:42 0.2% rcapd/1
     17676 apl      6916K 3468K cpu4    59    0   0:00:00 0.1% prstat/1
    ...
    ZONEID    NPROC  SWAP   RSS MEMORY      TIME  CPU ZONE
         0       48  470M  482M   1.5%   4:05:57 0.0% global
         3       85 2295M 2369M   7.2%   0:36:36 0.0% refapp1
         6       74   13G 3273M    10%  16:51:18 0.0% refdb1
    Total: 207 processes, 709 lwps, load averages: 0.05, 0.06, 0.11

The output from prstat -Z gives the administrator global information on CPU and memory usage of the individual virtual machines without having to access the zone itself. The two plugins [11] for CPU and memory are simple shell scripts but can also handle alert thresholds.
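As a rough illustration of how the zone summary can be turned into a check, the Python sketch below parses the summary lines of the prstat -Z output in Listing 1 and reports zones above a memory threshold. It is not one of the plugins from [11]; the function names, the 5 percent threshold, and the assumption that the K, M, and G suffixes are multiples of 1024 are mine.

```python
_UNITS = {"K": 1024, "M": 1024 ** 2, "G": 1024 ** 3, "T": 1024 ** 4}


def to_bytes(value):
    """Convert sizes such as '470M' or '13G' to bytes (1024-based, assumed)."""
    if value[-1] in _UNITS:
        return int(float(value[:-1]) * _UNITS[value[-1]])
    return int(value)


def parse_zones(output):
    """Return {zone_name: metrics} from the zone summary of prstat -Z."""
    zones = {}
    in_summary = False
    for line in output.splitlines():
        parts = line.split()
        if parts[:1] == ["ZONEID"]:
            in_summary = True  # the zone table starts after this header
            continue
        # Zone rows have exactly eight columns and start with a numeric ID
        if not in_summary or len(parts) != 8 or not parts[0].isdigit():
            continue
        zones[parts[7]] = {
            "nproc": int(parts[1]),
            "swap": to_bytes(parts[2]),
            "rss": to_bytes(parts[3]),
            "memory_pct": float(parts[4].rstrip("%")),
            "cpu_pct": float(parts[6].rstrip("%")),
        }
    return zones


def zones_over_memory(zones, warn_pct):
    """Names of zones whose memory share is at or above warn_pct."""
    return [name for name, z in zones.items() if z["memory_pct"] >= warn_pct]


# Output taken from Listing 1
SAMPLE = """\
   PID USERNAME  SIZE   RSS STATE  PRI NICE      TIME  CPU PROCESS/NLWP
   173 daemon     17M   11M sleep   59    0   3:18:42 0.2% rcapd/1
ZONEID    NPROC  SWAP   RSS MEMORY      TIME  CPU ZONE
     0       48  470M  482M   1.5%   4:05:57 0.0% global
     3       85 2295M 2369M   7.2%   0:36:36 0.0% refapp1
     6       74   13G 3273M    10%  16:51:18 0.0% refdb1
Total: 207 processes, 709 lwps, load averages: 0.05, 0.06, 0.11
"""

print(zones_over_memory(parse_zones(SAMPLE), warn_pct=5.0))
```

Because the parser only reads text, the same function works whether the output comes from a local prstat call or from a command executed over SSH on the global zone.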

Xen and KVM

Although Xen and KVM use totally different concepts, both can be managed through Libvirt. The Libvirt toolkit is sponsored by Red Hat and communicates with various virtualization technologies.

Besides Xen, KVM, and VirtualBox, Libvirt also supports OpenVZ, VMware ESX, and GSX. The nagios-virt [12] tool was written on the basis of this API and helps administrators configure host and service definitions for Libvirt systems. Although the latest update was some time ago, the functionality is still there.

The check_virsh [13] tool is another monitoring plugin based on Libvirt and the virsh command. The plugin output isn't plain English but is easy to modify.
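To give an idea of what a virsh-based check has to do, the following Python sketch parses the table printed by virsh list --all and compares it with a list of domains that are expected to run. This is not the check_virsh code; the domain names and the sample output are hypothetical, and the sketch assumes the usual three-column layout with Id, Name, and State.

```python
def parse_virsh_list(output):
    """Return {domain_name: state} from 'virsh list --all' style output."""
    domains = {}
    for line in output.splitlines():
        # Split into at most three parts so states like "shut off" stay intact
        parts = line.split(None, 2)
        if len(parts) < 3 or parts[0] == "Id":
            continue  # header, separator, or blank line
        _dom_id, name, state = parts
        domains[name] = state.strip()
    return domains


def evaluate_domains(domains, expected_running):
    """Return (exit_code, message) using the plugin exit code convention."""
    down = [n for n in expected_running if domains.get(n) != "running"]
    if down:
        return 2, "VIRSH CRITICAL - not running: " + ", ".join(down)
    return 0, f"VIRSH OK - {len(expected_running)} expected domains running"


# Hypothetical sample output
SAMPLE = """\
 Id    Name                           State
----------------------------------------------------
 1     web01                          running
 -     db01                           shut off
"""

print(evaluate_domains(parse_virsh_list(SAMPLE), ["web01", "db01"]))
```

Returning the state as plain text keeps the check output readable, which addresses the kind of output modification mentioned above.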

For Xen, the check_xenvm [14] plugin is available, which relies on the xm list command to evaluate the Xen status and return formatted output. If a live migration is in progress while the check runs, the plugin reports this, too.

VMware

VMware has been on the market for more than 10 years and is thus one of the veterans of virtualization. To show the various monitoring options, you must distinguish between the various product lines.

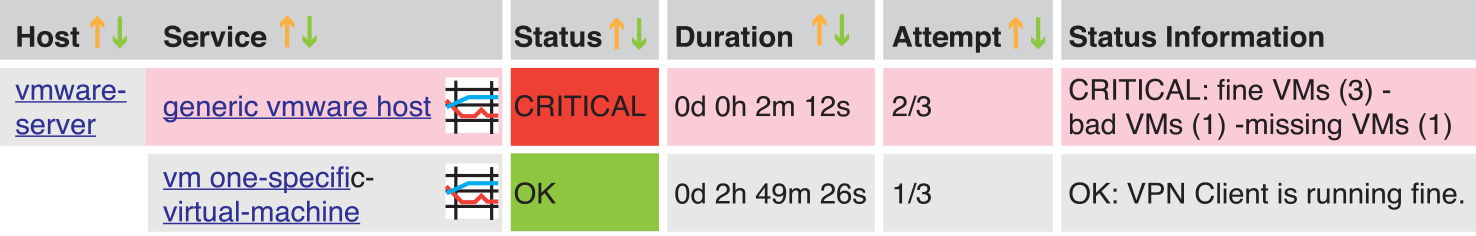

Whereas VMware Server (formerly VMware GSX) requires a separate host system based on Linux or Windows, VMware ESX Server uses its own Linux kernel with driver extensions and no longer relies on a separate host. Also, the vCenter product supports centralized administration of multiple ESX servers and live migration (vMotion). The two plugins with the largest feature scope are check_esx3 [15] and check_vmware3 [16].

Both plugins use the VMware Perl API to access VMware information and support versatile parameterization. The main difference between the two plugins is that check_vmware3 supports a heartbeat for more precise system status evaluation and also supports regular expressions for hostnames (Figure 1). Thus, you can target the virtual machines belonging to a specific customer or specific system groups for monitoring. If the VMware tools are installed on the guest system, the check_esx3 plugin will retrieve detailed performance data and measure performance via the host system, similar to the Solaris zone scenario. This approach removes the need to install a special agent on the guest.
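The idea of selecting virtual machines by regular expression can be illustrated with a few lines of Python. This sketch is independent of check_vmware3 and its actual options; the naming scheme with a customer prefix such as custA- is a hypothetical convention.

```python
import re


def select_vms(names, pattern):
    """Return the VM names that match the regular expression pattern."""
    rx = re.compile(pattern)
    return [name for name in names if rx.search(name)]


# Hypothetical inventory that uses a customer prefix in each VM name
inventory = ["custA-web01", "custA-db01", "custB-web01", "infra-dns01"]

print(select_vms(inventory, r"^custA-"))   # all machines of customer A
print(select_vms(inventory, r"-web\d+$"))  # all web servers
```

A consistent naming scheme is what makes this approach useful: The pattern then maps directly to a customer or system group.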

Processing

Organizing the host and service objects in meaningful host and service groups is important for monitoring and intelligible reporting. Checking the availability of a virtual machine via the host system is no replacement for comprehensive monitoring of the virtualized system. For one thing, the visibility of the guest system depends on the network, which can lead to false results. Also, configuring service dependencies can be tricky.

Correlating the performance information for a host system is a really useful technique. When resources are overcommitted, you can identify influencing factors such as memory ballooning or the use of VCPUs, which gives you the option of migrating virtual machines to other host systems. Graphical processing of the plugin results using PNP4Nagios [17] or Netways Grapher [18] facilitates long-term analysis and puts you in a position to identify capacity bottlenecks at an early stage.
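Graphing tools work on the performance data that plugins append to their output after a pipe character, in the form label=value[unit];warn;crit;min;max. The Python sketch below extracts label, value, and unit from such a line so that values from several checks can be compared; it is a simplified reading of that format, not the parser used by PNP4Nagios or Netways Grapher, and the sample line is invented.

```python
import re

# Label (optionally single-quoted), "=", numeric value, optional unit
_METRIC = re.compile(r"('[^']+'|[^\s=']+)=(-?\d+(?:\.\d+)?)([A-Za-z%]*)")


def parse_perfdata(plugin_output):
    """Return {label: (value, unit)} from the part after the pipe."""
    if "|" not in plugin_output:
        return {}
    perf = plugin_output.split("|", 1)[1]
    metrics = {}
    for match in _METRIC.finditer(perf):
        label = match.group(1).strip("'")
        metrics[label] = (float(match.group(2)), match.group(3))
    return metrics


# Hypothetical plugin output with two metrics
line = "DISK OK - 50.0% free | free_pct=50.0%;20:;10:;0;100 iops=120;;;0"
print(parse_perfdata(line))
```

The thresholds and minimum/maximum values that follow each metric are ignored here; a fuller parser would keep them to draw warning and critical lines in the graphs.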

Conclusions

Users who deploy Nagios and Icinga benefit from what is probably one of the largest and most active open source communities in the monitoring world and can thus access a plethora of plugins and extensions. Xen, KVM, and VMware in particular support detailed querying options thanks to Libvirt or their own APIs. Additionally, queries are easily implemented by extending existing plugins.

Monitoring activities for the virtualization platform and virtualized systems ideally should complement each other because performance nearly always depends on the functionality of the physical system and not on the virtualization environment. Comprehensive monitoring of the virtualization platform in combination with other basic checks provides a clear view of dependencies and could also identify bottlenecks on the host system before they are identifiable on the guest system.