Can your web server be toppled with a single command?

Dangerous Test Tools

Picture this: You've carefully considered the hardware you need for your new web server. You've spent time meticulously tuning your database, and your colleagues have spent weeks developing your cutting-edge application – not to mention the weeks of work your top-dollar designers have tirelessly put in so they can break the mold and produce a ground-breaking website. You think your job's done, and you're even looking forward to a holiday. Your site goes live and receives all the coveted praise you'd hoped for. Your testy boss is even verging on looking happy for a change.

Then, disaster strikes. One afternoon, somebody in another country with too much time on their hands, using a perfectly legitimate and commonplace testing tool, brings your precious site to its knees with a simple command line of just a few characters, using a single broadband connection.

The threat of such an attack is very real. Even a well-designed, purpose-built, high-capacity infrastructure can be crippled by a simple attack. That worried expression on your face needn't last long, though, because a simple set of security rules can automatically mitigate such attacks. Surprisingly, by all accounts, these rules are not commonly deployed.

Benchmarking

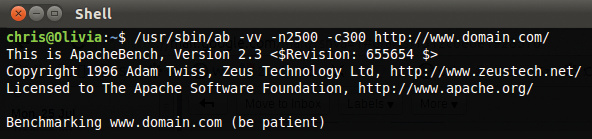

The attack tool in question is the well-intentioned Apache benchmarking tool ab [1]. Apache documentation states that ab "… is designed to give you an impression of how your current Apache installation performs. This especially shows you how many requests per second your Apache installation is capable of serving."

Although it sounds innocuous enough, the power that this little piece of software can wield is available to any miscreant capable of cutting and pasting a single command line. What's more, ab is bundled with most Apache installations on Linux, so the tool is freely available to anyone who wants it. Also of concern is that you don't even need to be a superuser to run it.

Figure 1 shows the ab tool running on the command line and configured with the parameters to set 2,500 total requests and 300 concurrent connections – more than enough to cause serious headaches for any single web server. Anyone familiar with Apache to even a minor extent is likely aware of the plethora of configuration options and modules it supports; undoubtedly, the ability to construct such a finely tuned web server configuration is one of the reasons it is so popular. The mighty Apache benchmarking tool ab is no exception, and you can finely tune it to offer a very specific set of testing criteria, which I'll leave you to discover (for white hat penetration testing purposes, these tests will be on your own servers only, needless to say).
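As a rough illustration of the kind of run in Figure 1, the sketch below wraps ab with its -n (total requests) and -c (concurrency) switches set to 2,500 and 300. The bench function name, the DRY_RUN variable, and the http://localhost/ default are assumptions for illustration; with DRY_RUN set, it only prints the command it would run. Point it only at servers you administer.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around ab for load-testing a server YOU administer.
#   -n  total number of requests to perform (2,500 by default here)
#   -c  number of requests to keep in flight at once (300 by default here)
# ab expects a URL that includes a path, so keep the trailing slash.
bench() {
  local url=${1:-http://localhost/}
  local requests=${2:-2500}
  local concurrency=${3:-300}
  local cmd=(ab -n "$requests" -c "$concurrency" "$url")

  if [[ -n ${DRY_RUN:-} ]]; then
    # Show what would run without sending any traffic.
    printf '%s\n' "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}
```

For example, `DRY_RUN=1 bench http://localhost/` prints `ab -n 2500 -c 300 http://localhost/`; dropping DRY_RUN runs the test for real.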

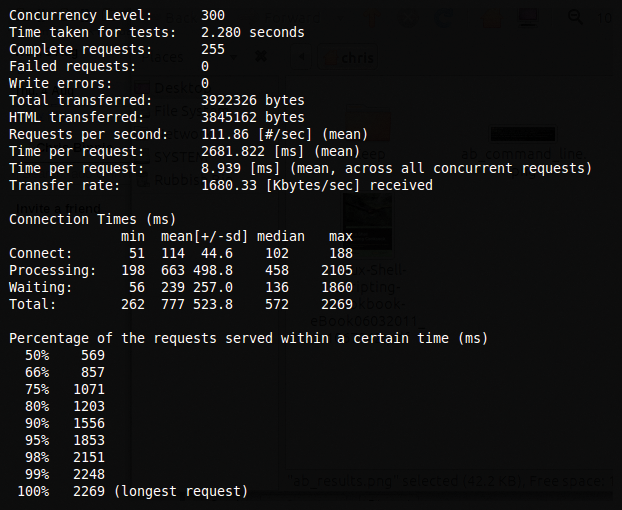

Figure 2 shows abbreviated results of a test run against http://www.domain.com. Notice that I stopped this test after two to three seconds, so it had no effect whatsoever on the test subject's website; the results would have been slightly fuller had I let it run.

The bottom of Figure 2 shows the section headed "Percentage of the requests served within a certain time (ms)," which reveals how much your web server slows down under the attack. Each row gives a percentage of the requests and the time, in milliseconds, within which that share of requests completed; the final 100% row is the longest request of the run.
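If you save ab's report to a file, a small helper can pull individual rows out of that percentile table, for instance to compare the 100% (longest request) figure before and after a configuration change. The ab_percentile name is hypothetical, and the sketch assumes ab's usual plain-text layout, in which each row of the table begins with the percentage.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the time (ms) for one percentile row of a saved
# ab report, e.g. "50" or "100". Prints nothing if that row isn't present.
ab_percentile() {
  local report=$1 pct=$2
  awk -v want="${pct}%" '
    # Only consider rows that follow the percentile table heading.
    /^Percentage of the requests served/ { in_table = 1; next }
    in_table && $1 == want { print $2; exit }
  ' "$report"
}
```

For example, after `ab -n 2500 -c 300 http://localhost/ > ab-report.txt`, running `ab_percentile ab-report.txt 100` prints the duration of the longest request.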

HTTP Requests Overload

Rather than spending (sometimes tens of) thousands of pounds, dollars, or euros on a corporate DoS solution, you might want to try a highly effective workaround that thwarts some Apache attacks. This solution requires only a single Linux machine running the ever-trusty IPtables firewall.

If you have even basic IPtables knowledge, the ruleset in Listing 1 won't take much effort to decipher. The only prerequisites that might trip you up (e.g., if you're running Gentoo and compiling your own kernel) are that the multiport and state modules, which are usually built in as standard, must be installed and available to IPtables for this ruleset to work. Although I've seen a similar solution that uses the recent module, I prefer the granular tweaking this approach offers.
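If you build your own kernel, one way to check those prerequisites is to look for the corresponding options in the kernel configuration. The sketch below assumes the config is at /boot/config-$(uname -r) (on Gentoo you might pass /usr/src/linux/.config instead); check_kernel_matches is a hypothetical name, and it also checks the limit match that Listing 1 relies on.

```shell
#!/usr/bin/env bash
# Hypothetical check: are the netfilter matches used by Listing 1 enabled in a
# kernel config file, either built in (=y) or as loadable modules (=m)?
check_kernel_matches() {
  local config=${1:-/boot/config-$(uname -r)}
  local opt missing=0

  for opt in CONFIG_NETFILTER_XT_MATCH_MULTIPORT \
             CONFIG_NETFILTER_XT_MATCH_STATE \
             CONFIG_NETFILTER_XT_MATCH_LIMIT; do
    if grep -Eq "^${opt}=[ym]\$" "$config"; then
      echo "ok      $opt"
    else
      echo "missing $opt"
      missing=1
    fi
  done
  return "$missing"   # non-zero if any option is missing
}
```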

Listing 1: IPtables Ruleset

    iptables -A INPUT -p tcp -m multiport --dports 80,443 -m state --state NEW -m limit --limit 100/minute --limit-burst 300 -j ACCEPT
    iptables -A INPUT -p tcp -m multiport --dports 80,443 -m state --state NEW -m limit --limit 100/minute --limit-burst 300 -j LOG --log-level info --log-prefix "NEW-CONNECTION-DROP: "
    iptables -A INPUT -p tcp -m multiport --dports 80,443 -m state --state RELATED,ESTABLISHED -m limit --limit 100/second --limit-burst 100 -j ACCEPT
    iptables -A INPUT -p tcp -m multiport --dports 80,443 -m state --state RELATED,ESTABLISHED -m limit --limit 100/second --limit-burst 100 -j LOG --log-level info --log-prefix "ESTABLISHED-CONNECTION-DROP: "
    iptables -A INPUT -p tcp -m multiport --dports 80,443 -j DROP

The rules in Listing 1 split traffic to ports 80 and 443 (HTTP and HTTPS) into two classes, new connections and packets on already established connections, and rate limit each class separately, so an offender flooding the server with either kind of traffic is slowed down. This approach slows an attack more effectively. A more primitive approach might be to limit only new connections, but such a solution is better suited to a SYN flood attack, in which you are purely limiting TCP/IP connection attempts, most of which are of the new variety.

That approach won't work here because the poor old Apache server actively tries to keep connections to visitors' browsers open (HTTP keep-alive) so that it consumes less bandwidth and fewer web server resources per transaction. As a result, you have to keep established connections in mind as well. With some testing, you can see in great detail how new and established traffic are each rate limited, as dmesg or Syslog fills with reports from the ruleset's LOG rules.
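To put numbers on that ratio, you could summarize the LOG output. The sketch below assumes the log prefixes used in Listing 1 and the SRC= field that the kernel's LOG target normally includes; drop_summary is a hypothetical name, and where your distribution writes kernel messages (for example /var/log/syslog or /var/log/messages) varies.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count the LOG entries written by Listing 1's rules and
# tally which source addresses triggered them. Reads a file, or stdin if none.
drop_summary() {
  awk '
    /NEW-CONNECTION-DROP:/         { new++ }
    /ESTABLISHED-CONNECTION-DROP:/ { est++ }
    # LOG lines record the sender as SRC=<address>; count each address.
    /CONNECTION-DROP:/ && match($0, /SRC=[0-9A-Fa-f.:]+/) {
      src[substr($0, RSTART + 4, RLENGTH - 4)]++
    }
    END {
      printf "new %d\nestablished %d\n", new, est
      for (ip in src) printf "src %s %d\n", ip, src[ip]
    }
  ' "${1:-/dev/stdin}"
}
```

For example, `dmesg | drop_summary` summarizes the kernel ring buffer, and `drop_summary /var/log/syslog` reads a log file instead.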

The fundamental purpose of this IPtables ruleset is to throttle offenders while keeping your web server available to legitimate visitors. In my tests, this worked well, although the laboratory web server was a little sluggish when responding.

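The first rule's --limit 100/minute parameter is relatively straightforward: once its initial allowance is used up, it accepts no more than 100 new connection packets per minute to destination ports 80 and 443. Anything above that rate falls through to the later rules, may be logged by the second rule, and is then dropped by the last one. Note that the limit match keeps a single count for everything the rule matches, not one per source address, so during a flood legitimate visitors share the same allowance as the attacker.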

The second switch in that first rule, --limit-burst, isn't quite as straightforward as you might think. Essentially, the first 300 matching packets are let straight through before the limit of 100 new packets per minute is applied, effectively giving legitimate traffic a bit of leeway before the rate limiting even thinks about kicking in. The burst allowance is not a one-off, either: it recharges by one every time the limit isn't reached, up to 300 again.
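To get a feel for how --limit and --limit-burst interact, here is a simplified token-bucket model in plain bash. It is an approximation for illustration, not the kernel's actual limit code, and simulate_limit is a hypothetical name; it assumes packets arrive at a steady rate and counts how many a rule like the first one would accept.

```shell
#!/usr/bin/env bash
# Simplified token-bucket model of "-m limit --limit RATE/minute --limit-burst BURST".
# An approximation of the behaviour, not the kernel implementation.
# Usage: simulate_limit RATE_PER_MINUTE BURST PACKETS_PER_SECOND SECONDS
simulate_limit() {
  local rate=$1 burst=$2 pps=$3 secs=$4
  local cost=60                     # credit units per packet (sixtieths of a packet)
  local cap=$(( burst * cost ))     # the bucket holds at most BURST packets
  local credit=$cap                 # it starts full: that is the initial burst
  local accepted=0 t can take

  for (( t = 0; t < secs; t++ )); do
    if (( t > 0 )); then
      # RATE packets per minute equals RATE sixtieths of a packet per second.
      credit=$(( credit + rate ))
      if (( credit > cap )); then credit=$cap; fi
    fi
    can=$(( credit / cost ))        # whole packets the bucket can pay for now
    take=$(( pps < can ? pps : can ))
    credit=$(( credit - take * cost ))
    accepted=$(( accepted + take ))
  done
  echo "$accepted"
}
```

Under this model, with the values from Listing 1, a flood of 1,000 new connections per second gets 300 accepted in the first second but only 398 over a whole minute (`simulate_limit 100 300 1000 60`), while a client opening one connection per second (`simulate_limit 100 300 1 60`) has all 60 accepted.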

When I was experimenting with the limit and limit-burst values, I was surprised at how little traffic was actually let through. Be warned that the limit values are strict and might be too punitive for your Apache configuration. If so, simply increase them globally within the ruleset to loosen them up. As with all things firewall-based, I'd definitely recommend testing these rules on a desktop or a laboratory server before deploying them.
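One way to make loosening the limits globally a one-line change is to hold them in variables. The sketch below regenerates Listing 1's five rules from those variables; the variable names, the ipt helper, and the DRY_RUN switch (which prints the commands instead of running them) are assumptions for illustration, and applying the rules for real requires root.

```shell
#!/usr/bin/env bash
# Listing 1's rules, with the tunable values held in hypothetical variables.
NEW_LIMIT=${NEW_LIMIT:-100/minute}   # sustained rate for NEW connections
NEW_BURST=${NEW_BURST:-300}          # initial allowance for NEW connections
EST_LIMIT=${EST_LIMIT:-100/second}   # sustained rate for RELATED/ESTABLISHED packets
EST_BURST=${EST_BURST:-100}          # initial allowance for RELATED/ESTABLISHED packets
WEB_PORTS=${WEB_PORTS:-80,443}

# Run iptables, or just print the command when DRY_RUN is set.
ipt() {
  if [[ -n ${DRY_RUN:-} ]]; then
    echo "iptables $*"
  else
    iptables "$@"
  fi
}

apply_web_limits() {
  local web=(-A INPUT -p tcp -m multiport --dports "$WEB_PORTS")
  local new=(-m state --state NEW -m limit --limit "$NEW_LIMIT" --limit-burst "$NEW_BURST")
  local est=(-m state --state RELATED,ESTABLISHED -m limit --limit "$EST_LIMIT" --limit-burst "$EST_BURST")

  ipt "${web[@]}" "${new[@]}" -j ACCEPT
  ipt "${web[@]}" "${new[@]}" -j LOG --log-level info --log-prefix "NEW-CONNECTION-DROP: "
  ipt "${web[@]}" "${est[@]}" -j ACCEPT
  ipt "${web[@]}" "${est[@]}" -j LOG --log-level info --log-prefix "ESTABLISHED-CONNECTION-DROP: "
  ipt "${web[@]}" -j DROP            # anything over the limits ends up here
}
```

After sourcing the file, `DRY_RUN=1 apply_web_limits` lets you review the generated rules; setting, say, `NEW_BURST=600` beforehand loosens every rule that uses it at once.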

After you have locked down the allowed rates of inbound traffic for ports 80 and 443 with the rules in Listing 1, you can then usually open up outbound traffic. Please be aware that these examples are part of my comprehensive, site-specific IPtables script; you need to tailor your own IPtables configuration to your needs. This small ruleset works when your other settings are in place, but completely opening up ports 80 and 443, for example, might not be what you're looking for.
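If you would rather not open those ports completely, a narrower option is sketched below. It assumes your OUTPUT chain otherwise drops traffic, and it allows only replies sent from ports 80 and 443 on connections that are already established; treat it as an illustration to adapt, run as root.

```shell
# Sketch: let the web server reply on connections it has already accepted.
# Assumes the OUTPUT chain otherwise drops traffic; run as root.
iptables -A OUTPUT -p tcp -m multiport --sports 80,443 \
  -m state --state ESTABLISHED,RELATED -j ACCEPT
```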

Denial of Service

Another well-known web server attack that this IPtables solution unfortunately won't mitigate is the once massively prevalent Slowloris attack. When this nefarious piece of code first appeared on the Internet, it brought a frightening new proposition to systems administrators.

In the same vein as the Apache ab attack, the massively destructive tool Slowloris (which describes itself as "the low bandwidth, yet greedy and poisonous HTTP client") gives the attacker the means to topple a web server from one machine using only a very small amount of bandwidth.

This approach is unusual compared with many other DoS attacks, which consume the target machine's bandwidth completely (filling its connection to the Internet with unwanted traffic). Slowloris instead opens many connections and keeps them alive by sending partial requests very slowly, tying up the web server's available connections. The Slowloris quandary still appears to be wholly unsolved, but you'll be glad to know that some methods can at least mitigate its effects.

The first workaround is to limit the number of connections that a single IP address can hold open at any one time. This won't work against a distributed DoS attack, because such traffic originates from many IP addresses rather than just one.
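With IPtables, one way to apply such a per-address cap is the connlimit match. This is a sketch rather than part of Listing 1: the threshold of 20 simultaneous connections is an assumption you would tune to your own traffic, and -I places the rule at the top of the INPUT chain so it is checked before any ACCEPT rules.

```shell
# Sketch: refuse new HTTP/HTTPS connections from any single IPv4 address
# that already has more than 20 open (20 is an assumed threshold; tune it).
# -I inserts at the top of INPUT, ahead of the rate-limiting rules.
iptables -I INPUT -p tcp --syn -m multiport --dports 80,443 \
  -m connlimit --connlimit-above 20 --connlimit-mask 32 \
  -j REJECT --reject-with tcp-reset
```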

Apparently (and somewhat counterintuitively), you can also gain some benefit by making sure your visitors don't use too little bandwidth on each connection; in other words, by enforcing a minimum transfer rate. Think of many thousands of connections, each trickling a few bytes at a time to your server, and you should get the idea. It seems strange to turn away low-bandwidth connections from visitors, because bandwidth is usually one of your most precious finite resources, but in this scenario slow connections are exactly what the attack looks like.

Another way of limiting the effects of such an attack is to shorten how long a visitor's connection can stay open without completing a request. Imagine a partial connection replicated thousands (or hundreds of thousands) of times, leaving resources hanging and unavailable on your Apache server. If those connections time out sooner, your resources become available again sooner.
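On the Apache side, both of these ideas map onto configuration directives: Timeout shortens how long Apache waits on stalled socket I/O, and mod_reqtimeout's RequestReadTimeout combines a time limit with a minimum data rate. The sketch below writes them to a drop-in file. The default path (a Debian/Ubuntu-style conf-available directory), the function name, and the values are assumptions to adapt; the RequestReadTimeout line only takes effect if mod_reqtimeout is loaded, and the file must be included in your Apache configuration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write an Apache drop-in that limits slow clients.
# The default path is a Debian/Ubuntu-style location; pass another to override.
write_slow_client_limits() {
  local conf=${1:-/etc/apache2/conf-available/slow-client-limits.conf}
  cat > "$conf" <<'EOF'
# Wait at most 30 seconds for socket I/O before failing a request.
Timeout 30

<IfModule reqtimeout_module>
  # Headers: allow 20 seconds, extended by 1 second per 500 bytes received,
  # up to 40 seconds. Body: allow 20 seconds, extended the same way.
  RequestReadTimeout header=20-40,MinRate=500 body=20,MinRate=500
</IfModule>
EOF
}
```

On Debian-style systems, you would then enable the file with `a2enconf slow-client-limits` and reload Apache.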

You'll find much more information on Slowloris at the worryingly titled ha.ckers.org website [2].

Conclusion

Readers in the know are likely aware of the vast number and varied types of Denial of Service (DoS) and Distributed DoS attacks in the wild on the Internet. Among those attacks are several designed specifically to knock over web servers, so a smart IPtables configuration, life-saving as it is, is not a silver bullet for Apache. However, most would agree that this approach is generic enough to mitigate a variety of traffic-flooding attacks.

Of course, you can apply these rate-limiting rules to any port number on your server, but proceed with caution and don't lock yourself out of remote access by accident if you decide to experiment with the ruleset.
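A common precaution when experimenting on a remote machine is an automatic rollback. The sketch below saves the working ruleset with iptables-save and schedules iptables-restore to put it back after five minutes unless you cancel the job once you've confirmed you can still log in. The backup path and the delay are assumptions; run it as root in the same shell session you use for testing.

```shell
# Before experimenting: keep a copy of the working ruleset (path is arbitrary).
iptables-save > /root/iptables.before-test

# Safety net: restore that copy in 5 minutes unless cancelled.
nohup sh -c 'sleep 300 && iptables-restore < /root/iptables.before-test' >/dev/null 2>&1 &
SAFETY_PID=$!

# ... add or tweak rules here, then check you can still log in remotely ...

# Still connected? Cancel the automatic rollback.
kill "$SAFETY_PID"
```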

What I enjoy the most about solutions such as this is their simplicity and desirable efficacy. In this case, the ruleset makes for a lighter load on your servers without requiring you to spend a small fortune – and you'll sleep better at night.