Managing virtual machines

Home Brew

Virtual machines are a perfect choice if, for example, you need to test a system upgrade – something that always entails a certain amount of risk. So, when I wanted to see whether a full update from CentOS 5 to 6 would work – a procedure the release notes advise against – a virtual environment seemed like the prudent decision.

The first step is to create a copy of the current system, which should be as up to date as possible. Two options for this physical-to-virtual migration are to use special tools from the virt-v2v package [1] or to create an image over the wire with dd, but these aren't likely to give you consistent results with a running system. A cleaner approach would be to use an image backup program for Linux, such as Clonezilla [2] or Partimage [3], which means shutting down the system and booting a Live system.

To test the update, which doesn't require 100% synchronization, I decided to use rsync to copy the CentOS installation to the server acting as the host.
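The following is a minimal sketch of such an rsync call. It assumes the command runs on the VM host and pulls the data from the physical machine over SSH. The function name sync_system, the host name physical-host, and the target directory are hypothetical placeholders. The options preserve permissions, ownership, hard links, ACLs, and extended attributes, and the excludes skip the contents of pseudo-filesystems that are recreated at boot.

```shell
#!/bin/sh
# Copy (or refresh) a running Linux system into a directory with rsync.
# SRC is e.g. "root@physical-host:/" and DEST e.g. "/srv/centos-clone/";
# both names are hypothetical placeholders.
sync_system() {
    src=$1
    dest=$2
    # -a  permissions, ownership, timestamps, symlinks, device files
    # -H  hard links, -A  ACLs, -X  extended attributes (SELinux labels)
    # --numeric-ids  keep UIDs/GIDs instead of mapping them by name
    # --delete  remove files that vanished since the previous run
    # The excludes keep the directories themselves but skip the contents
    # of pseudo and temporary filesystems.
    rsync -aHAX --numeric-ids --delete \
        --exclude='/proc/*' --exclude='/sys/*' --exclude='/dev/*' \
        --exclude='/tmp/*' --exclude='/mnt/*' --exclude='/media/*' \
        "$src" "$dest"
}

# Usage on the VM host; rerun it later to pick up changes:
#   sync_system root@physical-host:/ /srv/centos-clone/
```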

This method should give you a trouble-free and fast option for updating the data after the first complete copy. You can then create a disk image for the virtual system with qemu-img or with guestfish [4], which will also create an ext3 filesystem, if so desired [5]:

$ guestfish -N fs:ext3

When you call Guestfish with the new image,

$ guestfish -a <Image>

you are taken to a shell where you first need to enter run to toggle the system to a ready state. Then, entering list-filesystems shows you the available partitions, and the built-in mount command mounts them in Guestfish. If you launch Guestfish with the -i option, all of this is done automatically.

The Guestfish shell contains some built-in tools that let you copy data from the host to the guest system and vice versa (e.g., copy-in, copy-out, tar-in, and tar-out) [6]. Unfortunately, the performance of these tools leaves much to be desired; in fact, it can take a whole afternoon to transfer a few gigabytes to a guest system.

The far superior alternative is to mount the guest's disk image on the host using a loop device and to copy the data directly on the host system. For this to work, the disk image must be in raw format. Other approaches, such as qemu-nbd, mount qcow2 images directly, but they go through the Network Block Device (NBD) subsystem and are thus slower. Even with the potential detour of converting the qcow2 image to raw with qemu-img convert, you will still save a fair amount of time.
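The conversion step can be wrapped in a small helper. This sketch assumes that qemu-img is installed. The names image_format and ensure_raw are hypothetical. The helper reads the format from the "file format:" line of qemu-img info and converts the image only when it is not already raw.

```shell
#!/bin/sh
# Print the format qemu-img reports for an image ("raw", "qcow2", ...).
image_format() {
    qemu-img info "$1" | sed -n 's/^file format: //p'
}

# Make sure a raw copy of IMAGE exists at RAW so it can be loop-mounted.
ensure_raw() {
    image=$1
    raw=$2
    fmt=$(image_format "$image")
    if [ "$fmt" = raw ]; then
        echo "$image is already raw"
        return 0
    fi
    # -f: detected input format, -O raw: write a raw image
    qemu-img convert -f "$fmt" -O raw "$image" "$raw"
}

# Usage (hypothetical file names):
#   ensure_raw newvm.qcow2 newvm.img
```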

To mount a partition from the image, you first need to determine the offset at which it starts within the file. A good tool for this is kpartx. Called with the -l option, it lists the partitions. Called with the -a option, it creates the matching device mapper files:

$ kpartx -l ff-clone7.img
loop0p1 : 0 20969472 /dev/loop0 2048
$ kpartx -a ff-clone7.img
$ kpartx -l ff-clone7.img
loop0p1 : 0 20969472 /dev/loop0 2048

The only device mapper file here is loop0p1; to mount the corresponding partition, enter:

$ mount /dev/mapper/loop0p1 /mnt/
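If kpartx is not available, you can instead convert the start sector it reports into a byte offset for mount's loop option. The start sector is the last field of the kpartx -l output, 2048 in the example. The following is a minimal sketch that assumes 512-byte sectors. The helper name partition_offset is hypothetical.

```shell
#!/bin/sh
# Convert one line of "kpartx -l" output, e.g.
#   loop0p1 : 0 20969472 /dev/loop0 2048
# into the partition's byte offset inside the image file.
# The start (last field) is given in 512-byte sectors; printf "%.0f"
# avoids exponent notation for offsets beyond a few gigabytes.
partition_offset() {
    awk '{ printf "%.0f\n", $NF * 512 }'
}

# Usage (as root):
#   offset=$(kpartx -l ff-clone7.img | head -n 1 | partition_offset)
#   mount -o loop,offset="$offset" ff-clone7.img /mnt/
```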

You can now use standard tools such as tar, cp, or rsync to copy the files to the mounted virtual disk. When doing so, make sure you use options that preserve file permissions and ownership (e.g., rsync -a). If needed (e.g., if you use SELinux), also remember the filesystem's extended attributes. Rsync uses the -X option for this, and tar understands --selinux and --xattrs. Afterward, unmount the filesystem with umount and run kpartx -d to delete the device mapper files.

Because all of this happens at the file level, rather than by cloning the complete disk with dd, the virtual system still lacks a bootloader in the Master Boot Record (MBR).

Again, there are various approaches for putting a bootloader on the disk. For example, you can use an existing virtual machine to mount the new virtual disk and then chroot to the new system. Calling grub-install with the correct device name installs GRUB in the MBR on the new virtual disk. Libguestfs now also handles this operation directly with the grub-install command in Guestfish (guestfs_grub_install in the C API). In our lab, attempting to install GRUB led to

Error 2: unknown file or directory type

which was difficult to troubleshoot. The cause turned out to be the ext3 filesystem itself. Guestfish, or more precisely the e2fsprogs (version 1.40.5 and newer) that it relied on, had created it with an inode size of 256 bytes, which older versions of GRUB cannot handle.

Unfortunately, you cannot reduce the inode size of an existing filesystem because tune2fs -I can only enlarge it. The only workable approach was to create a new filesystem with the older inode size of 128 bytes and copy all of the system data to it. The mkfs.ext* switch required for this is -I <inode size>.
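To check a filesystem before installing GRUB, you can read its inode size from tune2fs -l (or dumpe2fs -h). Both print a line such as "Inode size: 256". The following sketch uses hypothetical function names. It extracts that value and treats anything other than 128 bytes as a problem for older GRUB versions.

```shell
#!/bin/sh
# Read "tune2fs -l" or "dumpe2fs -h" output on stdin; print the inode size.
inode_size() {
    awk -F: '/^Inode size/ { gsub(/[ \t]/, "", $2); print $2 }'
}

# Succeed only if the inode size is the 128 bytes older GRUB versions expect.
grub_legacy_ok() {
    [ "$(inode_size)" = 128 ]
}

# Usage (as root; device name from the kpartx example):
#   tune2fs -l /dev/mapper/loop0p1 | grub_legacy_ok || echo "needs mkfs -I 128"
#   mkfs.ext3 -I 128 /dev/mapper/loop0p1   # destroys the existing data
```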

Once you have solved these problems, you reap the reward of being able to boot the virtual clone of the physical machine. Normally, you can correct minor differences in the virtual machine at run time with rsync.

Clones

Fortunately, cloning an existing virtual machine is far easier. The Libvirt tools include the virt-clone program, which creates a full copy of a virtual machine. Unfortunately, the current version of the program requires you to pause the source system first. The parameters you need are the original virtual machine for -o, the name of the new virtual machine for -n, and the name of the new disk image to create for -f. Alternatively, the virt-clone option -a chooses the names itself.

If the Libvirt tools mysteriously fail, the explanation may be that they can't find the KVM hypervisor, which might even be running on another machine. To tell them where the hypervisor is, all of the programs offer a -c or --connect option followed by the hypervisor's URI. For a local system, it looks something like this:

$ virsh -c qemu:///system list

To make this setting apply for an entire shell session, it's a good idea to set the corresponding environment variable:

$ export LIBVIRT_DEFAULT_URI=qemu:///system

Three commands at the terminal then pause the active virtual machine, create a clone, and continue operations on the original virtual machine:

$ virsh suspend oldvm
$ virt-clone -o oldvm -n newvm -f /var/lib/libvirt/images/newvm.qcow
$ virsh resume oldvm
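One weakness of these three commands is that the original machine stays paused if virt-clone fails. The following wrapper sketch always resumes the source machine and passes on virt-clone's exit status. The name clone_paused is hypothetical.

```shell
#!/bin/sh
# Clone a libvirt guest while it is paused. The original is resumed
# even if virt-clone fails, so it is never left suspended.
# Arguments: original VM, new VM name, path of the new disk image.
clone_paused() {
    orig=$1
    new=$2
    image=$3
    virsh suspend "$orig" || return 1
    virt-clone -o "$orig" -n "$new" -f "$image"
    status=$?
    virsh resume "$orig"
    return "$status"
}

# Usage:
#   clone_paused oldvm newvm /var/lib/libvirt/images/newvm.qcow
```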

By default, virt-clone assigns a random MAC address to the network card in the new virtual machine. You can assign a MAC address manually with -m, but that does not solve a separate problem: Some network configuration files on the cloned machine still contain the MAC address of the original virtual machine. On Red Hat Enterprise Linux and Fedora, for example, these are /etc/sysconfig/network-scripts/ifcfg-eth0 and /etc/udev/rules.d/70-persistent-net.rules.

You could now boot the cloned virtual machine and edit these files over SSH. Guestfish offers a more elegant approach, though: After mounting the guest's filesystem, you can change files with its built-in edit command. The virt-edit tool included with the package automates this process. Its -e option takes a Perl expression, so it also supports string replacement.

To replace the MAC address of the old virtual machine with the new one in a single step, take both addresses from the respective XML configuration files and enter:

$ virt-edit newvm /etc/sysconfig/network-scripts/ifcfg-eth0 -e "s/<old-MAC-adr>/<new-MAC-adr>/"
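You can also read the two MAC addresses from virsh dumpxml instead of copying them by hand. This sketch assumes the usual <mac address='...'/> element in the domain XML. The helper mac_from_xml is hypothetical. Libvirt writes MAC addresses in lowercase, but ifcfg files often use uppercase, so the usage example adds Perl's i flag to make the substitution case-insensitive.

```shell
#!/bin/sh
# Print the MAC address(es) found in libvirt domain XML read on stdin,
# e.g. from "virsh dumpxml newvm". Matches lines like
#   <mac address='52:54:00:b4:df:eb'/>
mac_from_xml() {
    sed -n "s/.*<mac address=['\"]\([0-9A-Fa-f:]*\)['\"].*/\1/p"
}

# Usage:
#   oldmac=$(virsh dumpxml oldvm | mac_from_xml | head -n 1)
#   newmac=$(virsh dumpxml newvm | mac_from_xml | head -n 1)
#   virt-edit newvm /etc/sysconfig/network-scripts/ifcfg-eth0 \
#       -e "s/$oldmac/$newmac/i"
```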

You need a similar approach with the Udev rules mentioned earlier. In this way, you can also replace the hostname so that it matches the name assigned during cloning. On a Red Hat system, you would have to modify the /etc/sysconfig/network file.

For more or less automatic cloning of virtual machines, you could keep note of the numbers assigned in a small text file or enter

$ virsh list --all

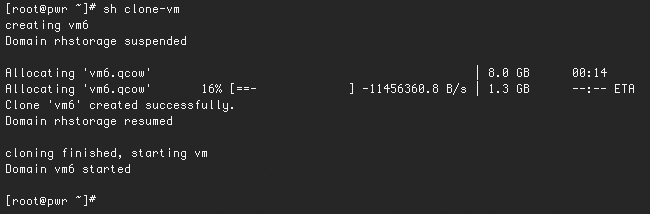

to list all the virtual machines and then increment a counter. An example of a script that manages virtual machines in this way is shown in Listing 1. Figure 1 shows the script results.

Listing 1: clone-vm

#!/bin/sh
# Clone the template VM "rhstorage" to vm<N>, then fix MAC and hostname

export LIBVIRT_DEFAULT_URI=qemu:///system

lastid=$(tail -n 1 vm-list.txt)
newid=$((lastid + 1))
vmname=vm$newid
echo "creating $vmname"

virsh suspend rhstorage
virt-clone -o rhstorage -n "$vmname" -f "/var/lib/libvirt/images/$vmname.qcow"
virsh resume rhstorage

# MAC address of the template; libvirt reports MACs in lowercase
oldmac="52:54:00:B4:DF:EB"
newmac=$(virsh dumpxml "$vmname" | grep "mac address" | awk -F"'" '{print $2}')

# The trailing i makes the Perl substitution case-insensitive, because
# ifcfg files often use uppercase MACs and the udev rules use lowercase
virt-edit "$vmname" /etc/sysconfig/network-scripts/ifcfg-eth0 -e "s/$oldmac/$newmac/i"
virt-edit "$vmname" /etc/udev/rules.d/70-persistent-net.rules -e "s/$oldmac/$newmac/i"
# Replace the template's hostname (vm1) with the new VM name
virt-edit "$vmname" /etc/sysconfig/network -e "s/vm1/$vmname/"

echo "$newid" >> vm-list.txt

echo "cloning finished, starting vm"
virsh start "$vmname"
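Instead of keeping the counter in vm-list.txt, you can also derive the next free number from libvirt itself. This is a minimal sketch. It assumes that the clones follow Listing 1's vm<N> naming scheme and that your virsh version supports list --all --name. The name next_vm_id is hypothetical.

```shell
#!/bin/sh
# Read VM names (one per line, e.g. from "virsh list --all --name")
# on stdin and print the next free number for names of the form vm<N>.
next_vm_id() {
    awk '/^vm[0-9]+$/ { n = substr($0, 3) + 0; if (n > max) max = n }
         END { print max + 1 }'
}

# Usage:
#   newid=$(virsh list --all --name | next_vm_id)
#   vmname=vm$newid
```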

Again, there are multiple approaches to password-less SSH logins. If the virtual system is accessible over the network, you can use ssh-copy-id to copy your public key to the corresponding account on the virtual machine. Alternatively, you can add the required public key to .ssh/authorized_keys and then use virt-copy-in to copy the .ssh directory to the virtual machine before you boot it.
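The offline variant might look like the following sketch. It assumes key-based login as root, a guest that is shut down, and a libvirt domain name for virt-copy-in's -d option. The function push_ssh_key and its arguments are hypothetical. Depending on the libguestfs version and the guest's SELinux policy, you may still need to fix the ownership or labels of the copied files in the guest (for example, with restorecon -R /root/.ssh).

```shell
#!/bin/sh
# Stage a .ssh directory containing PUBKEY and copy it into /root of the
# shut-down guest VM with virt-copy-in (part of libguestfs).
# Arguments: VM name, public key file, scratch directory (placeholders).
push_ssh_key() {
    vm=$1
    pubkey=$2
    stage=$3
    mkdir -p "$stage/.ssh" || return 1
    cat "$pubkey" >> "$stage/.ssh/authorized_keys" || return 1
    # sshd's StrictModes check rejects group- or world-writable key files
    chmod 700 "$stage/.ssh"
    chmod 600 "$stage/.ssh/authorized_keys"
    # Copies the whole directory, so it ends up as /root/.ssh in the guest
    virt-copy-in -d "$vm" "$stage/.ssh" /root/
}

# Usage (as root on the host, before the clone is booted):
#   push_ssh_key newvm ~/.ssh/id_rsa.pub "$(mktemp -d)"
```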

DNS

The last task for a usable virtual environment is to make sure name resolution works; after all, working only with IP addresses can be tiresome. You could resort to an /etc/hosts file, but if you want to interconnect the virtual machines, you need either to copy the hosts file between them or to configure a DNS server. The problem is aggravated if a DHCP server that you don't manage yourself assigns the IP addresses to the guests. Even if you could tell the DHCP server to bind fixed IP addresses to MAC addresses, you would still have to keep the DNS entries up to date.

The best approach for a clean solution is a DNS server that supports dynamic updates, such as BIND [7], which is available on any Linux system. Setting up the keys that authenticate these updates is tricky and involves a number of pitfalls. Alternatively, you can allow unauthenticated dynamic updates from the 127.0.0.1 address across the board.
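For the BIND variant that accepts updates from 127.0.0.1 (configured with an allow-update { 127.0.0.1; }; statement in the zone definition), you can send the update itself with nsupdate on the DNS server. This sketch only prints the nsupdate commands. The function name, the TTL of 86400 seconds, and the zone my.org (taken from Listing 3) are assumptions.

```shell
#!/bin/sh
# Print the nsupdate commands that replace the A record of HOST in ZONE.
# Assumes BIND accepts unsigned updates from 127.0.0.1; with TSIG keys,
# pipe the output into "nsupdate -k KEYFILE" instead of plain "nsupdate".
nsupdate_commands() {
    host=$1
    zone=$2
    ip=$3
    printf 'server 127.0.0.1\n'
    printf 'zone %s\n' "$zone"
    printf 'update delete %s.%s A\n' "$host" "$zone"
    printf 'update add %s.%s 86400 A %s\n' "$host" "$zone" "$ip"
    printf 'send\n'
}

# Usage on the DNS server:
#   nsupdate_commands vm3 my.org 192.168.111.35 | nsupdate
```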

Of course, it would be just as easy to script changes to files on the host system, assuming root can log in without a password. This would let you modify the BIND configuration directly and send the name server a reload signal. For security reasons, however, you should not use this approach outside a test environment.

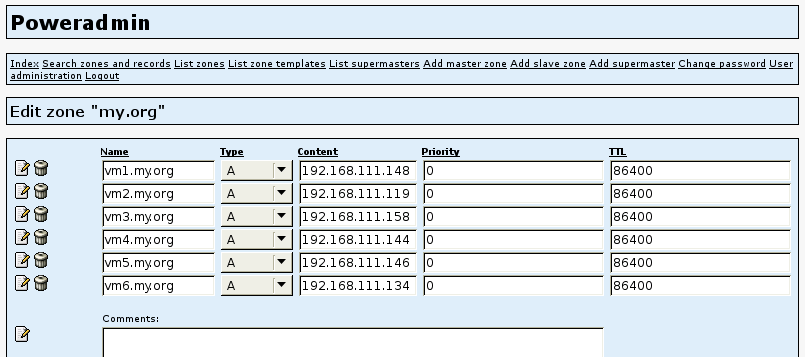

The approach taken here for the test installation was slightly more moderate: The host system runs the PowerDNS server [8], which stores its configuration in a database. This means you don't need root privileges on the host system for simple updates – just write access to the PowerDNS database.

On a Fedora system, you can install PowerDNS with the pdns and pdns-backend-mysql packages. Alternatives to MySQL are the pdns-sqlite and pdns-postgresql back ends. You need to create the database schema manually, using the SQL statements from the documentation. If you prefer a front end for the configuration, you can easily install the web-based Poweradmin [9].

Although it might sound like a good idea to handle the DNS update independently of the guest, unfortunately, no method for determining the IP addresses assigned by DHCP is reliable. One popular approach is to read the ARP cache on the host, but this only works if the guest has sent network traffic at least once in addition to the DHCP exchange. To ensure this, you might add a startup script that sends a couple of ping packets.
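To read the ARP cache on the host, you look up the guest's MAC address in the output of ip neigh show (or arp -n). The following is a sketch with a hypothetical helper name. It assumes the iproute2 output format shown in the comment.

```shell
#!/bin/sh
# Look up the IP address for a MAC address in the host's ARP cache.
# Reads "ip neigh show" output on stdin, whose lines look like
#   192.168.111.35 dev virbr0 lladdr 52:54:00:b4:df:eb REACHABLE
# The MAC comparison is case-insensitive.
ip_for_mac() {
    awk -v mac="$1" '
        { for (i = 1; i < NF; i++)
              if ($i == "lladdr" && tolower($(i + 1)) == tolower(mac))
                  print $1 }'
}

# Usage on the host:
#   ip neigh show | ip_for_mac 52:54:00:b4:df:eb
```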

If you want the guest system to initiate the DNS update instead, the best idea is to add the call to a startup script; for simplicity's sake, /etc/rc.local. Finding the hostname set on the guest system is easy with the hostname command. You can read the IP address with ip addr show dev eth0 or ifconfig eth0, but extracting it from the rest of the output requires some text processing. The calls to the update script on the guest system look similar to Listing 2.

Listing 2: rc.local

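#!/bin/sh
# Register this guest's hostname and IP address with the DNS host
dnsserver=192.168.111.20
hostname=$(hostname)
# LANG=C keeps the "inet addr:" label in English so that grep finds it
ip=$(LANG=C /sbin/ifconfig eth0 | grep "inet addr" | awk -F: '{print $2}' | awk '{print $1}')
ssh root@"$dnsserver" ./update-dns "$hostname" "$ip"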
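Newer net-tools versions of ifconfig print "inet 192.168..." without the "addr:" label, which breaks the grep in Listing 2. You can take the same information from iproute2 instead. The following is a sketch of a replacement for the ifconfig pipeline. It assumes the output format of ip -4 addr show, and the name first_ipv4 is hypothetical.

```shell
#!/bin/sh
# Print the first IPv4 address from "ip -4 addr show dev IFACE" output
# on stdin, without the /prefix length.
first_ipv4() {
    awk '$1 == "inet" { split($2, a, "/"); print a[1]; exit }'
}

# Possible replacement for the ifconfig line in Listing 2:
#   ip=$(/sbin/ip -4 addr show dev eth0 | first_ipv4)
```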

Now you just need a script on the host side that the guest calls to update the PowerDNS database. Of course, you need to know the table structure for this, and you need to check whether an entry for the host already exists. The remainder is easily scripted in Perl, for example, as Listing 3 shows. The domain name my.org is hard coded here. You could also pass it in as an argument, although this would mean modifying the database calls. If everything works, you can now admire the results of the dynamic updates on the Poweradmin front end (Figure 2).

Listing 3: update-dns

#!/usr/bin/perl
# update-dns <hostname> <ip>: create or update the A record of a guest
# in the PowerDNS MySQL back end
use strict;
use warnings;
use DBI;

my $db_user   = "powerdns";
my $db_passwd = "powerpass";

die "usage: $0 hostname ip\n" unless @ARGV == 2;
my $hostname = $ARGV[0] . ".my.org";   # domain is hard coded
my $ip       = $ARGV[1];
my $time     = time();                 # seconds since the epoch, like date +%s

my $dbh = DBI->connect('DBI:mysql:powerdns', $db_user, $db_passwd)
    || die "Could not connect to database: $DBI::errstr";

# Placeholders (?) keep the arguments from being interpreted as SQL
my ($count) = $dbh->selectrow_array(
    q/SELECT COUNT(*) FROM records WHERE name = ? AND type = 'A'/,
    undef, $hostname);

if ($count == 0) {
    # domain_id 1 must be the my.org entry in the domains table
    $dbh->do(q/INSERT INTO records (name, content, type, ttl, change_date, domain_id, prio)
               VALUES (?, ?, 'A', 86400, ?, 1, 0)/,
             undef, $hostname, $ip, $time) or warn $dbh->errstr;
} else {
    $dbh->do(q/UPDATE records SET content = ?, change_date = ?
               WHERE name = ? AND type = 'A'/,
             undef, $ip, $time, $hostname) or warn $dbh->errstr;
}
$dbh->disconnect();

To be able to log in using hostnames, all of the systems involved, the host and guests alike, need to use the dynamically updated name server. You can install the /etc/resolv.conf required for this on the guest systems with the methods described earlier. The difficulty is that the DHCP client overwrites this file at boot time. Preventing this is more difficult than you might think, because the suggested setting of PEERDNS=no in /etc/sysconfig/network-scripts/ifcfg-eth0 doesn't work in some cases. Alternatively, some blogs maintain that you can override the two functions responsible for this in /etc/dhcp/dhclient-enter-hooks:

make_resolv_conf() {
    :   # no-op; "exit 0" here would terminate dhclient-script itself
}

change_resolv_conf() {
    :
}

If this method fails too, all you can do is edit the DHCP client script /sbin/dhclient-script directly.

Conclusions

Many improvements could be made to the method that I have described here, starting with name management for the virtual machines, storage management, and name resolution. However, in this article I have shown how you can clone and configure virtual machines with very little effort using the Libvirt tools.

Guestfish and other projects are still working hard on their software and have many good ideas that only a lack of time prevents from being implemented.