Open source data center virtualization with OpenNebula

Cloud Control

Everybody is talking about cloud these days, and everybody seems convinced that they will not survive the next two years without a cloud solution. At the same time, administrators in many enterprises haven't even taken the first steps toward virtualization. Although virtualization and cloud computing work hand in hand in many people's opinions, the underlying strategic approach is not actually mandatory. If a major service provider were to install a bare metal system on every machine it bought, this wouldn't initially contradict the idea of accessing virtual services as the need arises over a network.

However, this kind of solution soon reaches its limits when it comes to dynamic change and availability. Besides the rather abstract IaaS services that you can purchase, growing administrative overhead is the strongest motivation to look for a central administrative entity and the basic services it provides.

Overview

Many of the frameworks available on the market today have been in development for years, and some excellent solutions have been produced. The OpenNebula framework [1] that I will look at in this article is probably the most popular framework for data center virtualization – along with Eucalyptus and OpenStack, or the Nova computing stack to be more precise.

The most important difference between OpenNebula and the other frameworks that I mentioned is that OpenNebula sees itself more as a toolkit than as an all-in-one solution, and this is precisely what makes the framework so attractive. In a heterogeneous legacy data center, a complete rebuild that gives you an empty site as the basis for the solutions you deploy is virtually impossible.

Storage and virtualization concepts are often already established, and using them is second nature. What is missing is all-encompassing control and administration of the available components in a private cloud solution. Only later, perhaps, will the desire arise to offset peaks with external server resources by handing them over to service providers like Amazon in typical hybrid cloud style.

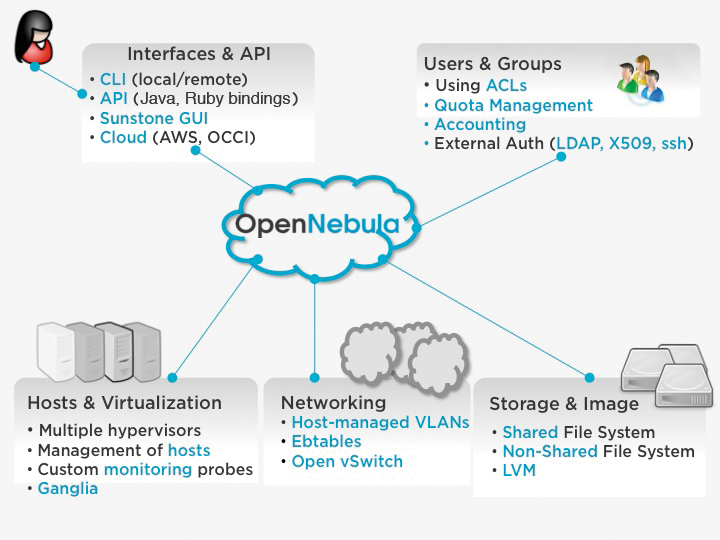

OpenNebula was founded in 2005 as a research project and has since been sponsored by the European Union. To provide resources, it accesses various subsystems, such as the virtualization stack, networking, storage, hosts, or users and groups, and connects the components via various command-line interfaces and a web interface named Sunstone.

Components

The host and VM components can be controlled independently of the deployed subsystem and allow transparent control of Xen, KVM, or VMware systems. Mixed operations with different hypervisors are also supported, and OpenNebula provides the available core components as interfaces. This transparent connection (Figure 1) of different components – and thus its excellent integration capability – is OpenNebula's strength.

Depending on the components used, your installation mileage may vary, which means a standard installation guide is not very useful. The comprehensive user documentation includes implementation examples for a range of storage and virtualization platforms. The basic installation comprises four components:

- Front end

- Hosts

- Image repository and storage

- Networking

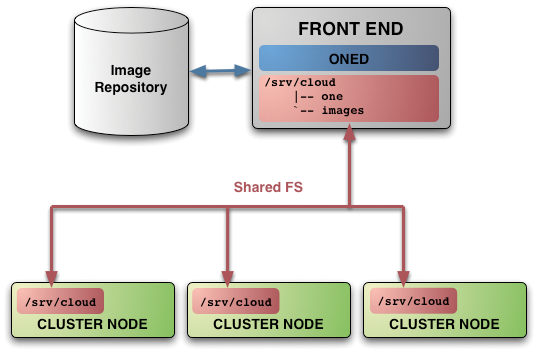

The front end with the management daemon (oned) comprises the APIs and the web interface (Sunstone); this is the control unit of the cloud installation. You don't need to install any specific software on the clients, with the exception of Ruby; however, SSH access must be possible on all the hosts involved to be able to retrieve status data or transfer images later on. Various options exist for setting up the image repository that relies on shared or non-shared filesystems.

Choosing the right storage infrastructure is probably the most significant decision at this point because changing this retroactively involves substantial overhead. Even if a scenario without a shared filesystem is conceivable, it will mean doing without various features, such as live migration, and you will need to redeploy the image if a host fails. Figure 2 shows this setup using a central NFS volume; this allows the environment to be set up quickly, but at the cost of data transfer rate restrictions caused by the protocol overhead.

The network connection is established using bridges that ideally should be accessible by the same name on all hosts. It makes sense to pay attention to logical naming conventions from the outset to avoid embarrassment when migrating a virtual machine after a host failure.
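
The naming advice above can be checked mechanically. The following is a minimal sketch, assuming you have already collected the bridge names from each host by some means of your own; the host names, bridge names, and the `missing_bridges` helper are hypothetical and not part of OpenNebula.

```python
from typing import Dict, List, Set


def missing_bridges(host_bridges: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Return, per host, the bridge names that exist elsewhere but not on that host."""
    all_bridges: Set[str] = set()
    for bridges in host_bridges.values():
        all_bridges |= bridges
    # Only hosts that lack at least one bridge appear in the result.
    return {
        host: sorted(all_bridges - bridges)
        for host, bridges in host_bridges.items()
        if all_bridges - bridges
    }


# Hypothetical inventory: host and bridge names are made up for illustration.
inventory = {
    "kvm01": {"br-mgmt", "br-vm"},
    "kvm02": {"br-mgmt", "br-vm"},
    "kvm03": {"br-mgmt", "br0"},
}

for host, missing in sorted(missing_bridges(inventory).items()):
    print(f"{host}: missing {', '.join(missing)}")
```

An empty result means every bridge is available under the same name on all hosts, so a virtual machine can be moved to any of them without touching its network definition.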

The components can be installed [2] using the distribution package for CentOS or openSUSE or from the source code [3]. The project website provides a very exhaustive how-to. After installing the required packages and creating the OpenNebula user account, you still need to generate an SSH key and distribute the key before you are done. If everything is working as intended, you should be able to start OpenNebula with:

# one start

You should then be able to access the daemon from the CLI without any trouble.

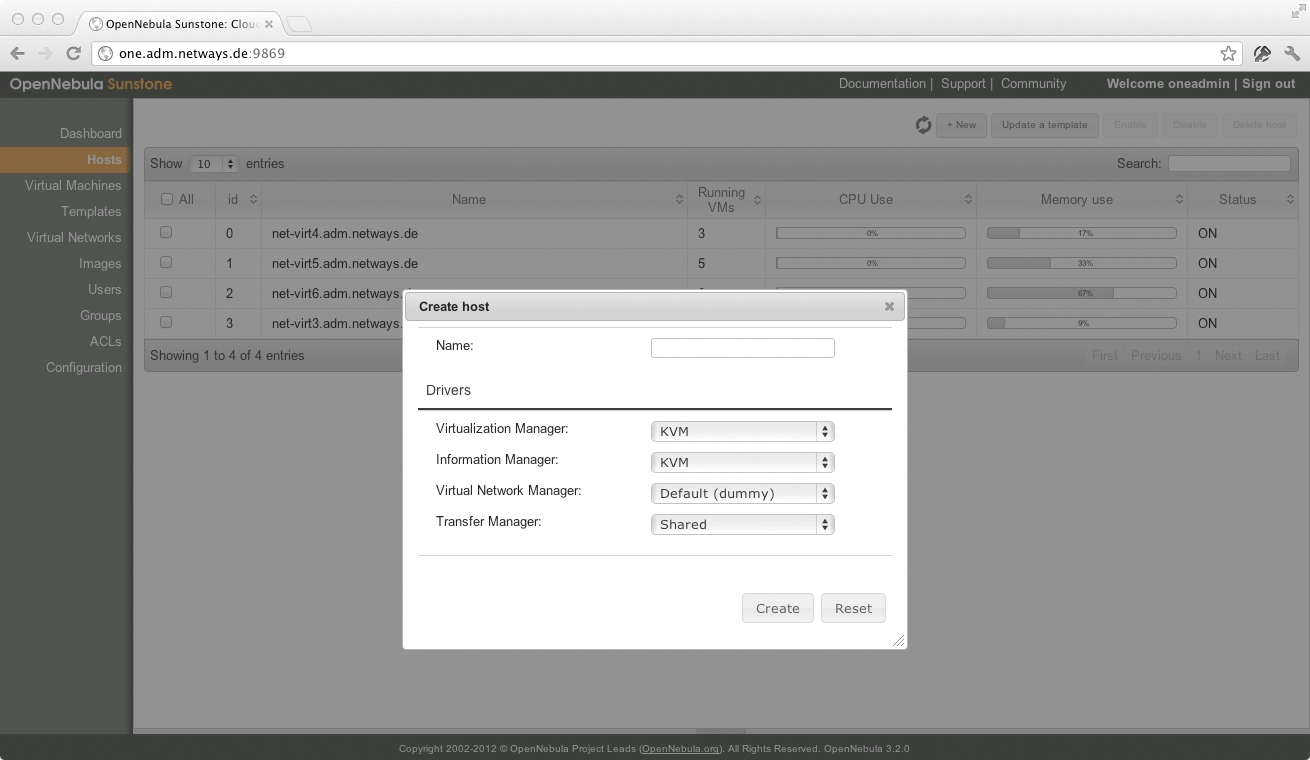

In the next step, you can add hypervisors to the system. Currently, Xen, KVM, and VMware are supported. Future support for Hyper-V was announced in the scope of cooperation between OpenNebula and Microsoft [4] and is expected to be available in the next version. Depending on the hypervisor you use, you might need to extend the configuration in the central configuration file (/etc/one/oned.conf) to ensure correct addressing of the host system. In KVM's case, communications are handled by libvirt, which acts as an interface and handles administrative and monitoring functions.

Even though OpenNebula has a neat web console and a self-service portal, you can use the command line to control all of its components (Table 1). Most commands work with host and virtual machine IDs to ensure unique identification and control. This makes it child's play to connect OpenNebula to a CMDB so that the administration system can generate virtual machines automatically.

Table 1: Important Command-Line Utilities

| Command | Function |
|---|---|
| | Controls virtual machines |
| | Controls host systems |
| | Virtual network management |
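
To illustrate the CMDB connection mentioned above, here is a minimal sketch that turns a record into a VM template file and builds the matching command line. The `CmdbRecord` class and its field names are hypothetical, and the template attributes (`IMAGE_ID`, `NETWORK_ID`) assume an OpenNebula version that references images and networks by ID; check the template reference for your release before relying on them. The sketch only prints the command instead of executing it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CmdbRecord:
    """Hypothetical CMDB entry; the field names are illustrative only."""
    name: str
    cpu: float
    memory_mb: int
    image_id: int
    network_id: int


def render_template(rec: CmdbRecord) -> str:
    """Render a record as OpenNebula VM template text (assumed attribute names)."""
    lines = [
        f'NAME = "{rec.name}"',
        f"CPU = {rec.cpu}",
        f"MEMORY = {rec.memory_mb}",
        f"DISK = [ IMAGE_ID = {rec.image_id} ]",
        f"NIC = [ NETWORK_ID = {rec.network_id} ]",
    ]
    return "\n".join(lines) + "\n"


def create_command(template_path: str) -> List[str]:
    """Build the CLI call that would submit the template; it is not run here."""
    return ["onevm", "create", template_path]


# Made-up example record.
record = CmdbRecord(name="web01", cpu=1, memory_mb=1024, image_id=3, network_id=0)
print(render_template(record), end="")
print(" ".join(create_command("web01.one")))
```

Keeping rendering separate from submission means the generated templates can be reviewed or version-controlled before anything reaches the front end.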

Puppet, Chef, or CFEngine can be integrated just as easily to support programmatic control of the cloud environment. You will not miss any features, although it might take some time to become familiar with the approach and function of the individual subsystems. OpenNebula also supports hybrid operation of the various host systems, migration of resources to other private zones or public cloud providers, and the use of a single administrative console.
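
Programmatic control does not have to go through the command line. The sketch below queries the virtual machine pool over the XML-RPC interface exposed by the management daemon and reduces the answer to ID, name, and state. The endpoint `http://localhost:2633/RPC2`, the `user:password` session string, and the sample XML are assumptions for illustration; the exact arguments of `one.vmpool.info` can differ between releases, so treat the call as a starting point rather than a reference.

```python
import xml.etree.ElementTree as ET
import xmlrpc.client
from typing import List, Tuple


def parse_vm_pool(xml_text: str) -> List[Tuple[int, str, int]]:
    """Extract (id, name, numeric state) for each VM in a VM_POOL document."""
    root = ET.fromstring(xml_text)
    return [
        (int(vm.findtext("ID")), vm.findtext("NAME"), int(vm.findtext("STATE")))
        for vm in root.findall("VM")
    ]


def list_vms(endpoint: str, session: str) -> List[Tuple[int, str, int]]:
    """Ask the daemon for all VMs; needs a reachable front end to run."""
    server = xmlrpc.client.ServerProxy(endpoint)
    # Assumed arguments: -2 = VMs of all users, -1/-1 = no ID range, -1 = any state but done.
    response = server.one.vmpool.info(session, -2, -1, -1, -1)
    ok, body = response[0], response[1]
    if not ok:
        raise RuntimeError(f"OpenNebula returned an error: {body}")
    return parse_vm_pool(body)


# Made-up response so the parsing step can be tried without a running daemon.
SAMPLE = (
    "<VM_POOL>"
    "<VM><ID>0</ID><NAME>web01</NAME><STATE>3</STATE></VM>"
    "<VM><ID>1</ID><NAME>db01</NAME><STATE>3</STATE></VM>"
    "</VM_POOL>"
)

for vm_id, name, state in parse_vm_pool(SAMPLE):
    print(vm_id, name, state)
```

Against a live installation, a call such as `list_vms("http://localhost:2633/RPC2", "oneadmin:secret")` would replace the sample data; both values here are placeholders.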

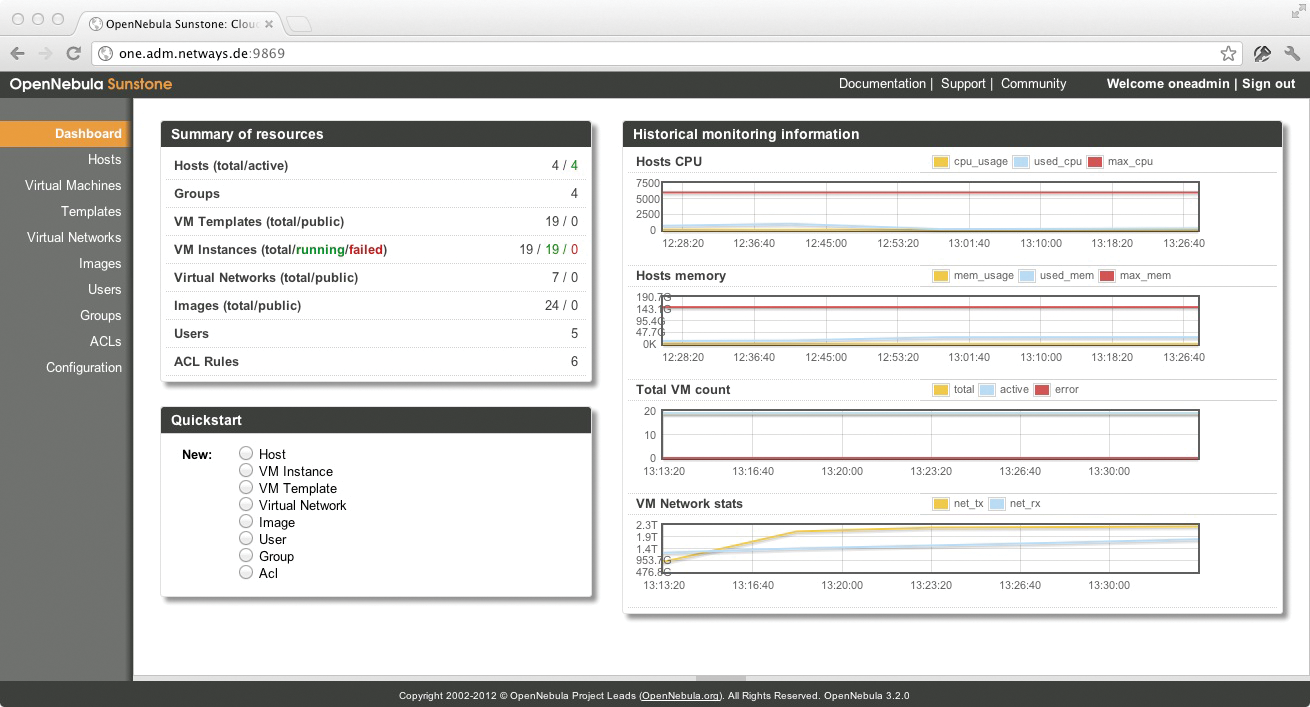

If you prefer a graphical control tool, Sunstone (Figures 3 and 4) provides a comprehensive web interface with multiuser support. Integration with a central directory service is also planned for the next version and will thus facilitate management of user and customer groups.

Conclusion

OpenNebula is a powerful management and provisioning platform for virtualized data center operation. Familiarizing yourself with the stack and the subsystems used here makes the cloud more tangible, and various components that have been around for years take on a new aspect in OpenNebula. Cloud computing doesn't necessarily mean outsourcing all of your services into the cloud; it also offers enormous potential for a company to respond flexibly to changing requirements and make optimum use of existing resources, while adding visibility.

If you are interested in learning more about OpenNebula, view the slides [5] used by Constantino Vázquez Blanco, a member of the OpenNebula team, in his talk during a workshop at the Open Source Data Center (OSDC) Conference [6], in which he explained the platform and shed light on its structure and function.