OpenStack: What's new in Grizzly?

Strong as a Bear

Apart from Ubuntu, no other project sticks as faithfully to a fixed release cycle as OpenStack. Like Canonical, the OpenStack developers release a new version every April and October. This April was no different: OpenStack 2013.1, code-named Grizzly, is now available for download. As with previous releases, much has changed. For administrators of existing cloud deployments, the question is whether an upgrade is worthwhile and whether it is easy to carry out. So far, OpenStack has not exactly covered itself in glory when it comes to updates.

Until now, the only sensible advice for OpenStack admins was: Do not try to update from one OpenStack version to the next. OpenStack Essex, the first release to carry the "Enterprise Ready" label, became available in April 2012. At the time, the developers promised to pay more attention to API compatibility between old and new versions in the future, with very modest success: Updating from Essex to its successor, Folsom, ranged from complicated to impossible.

API Stability

Between Folsom and Grizzly, the developers seem to have done their homework: Instead of changing existing APIs, they added additional APIs and gave these higher version numbers.

This approach is evident in Keystone, the authentication component. In addition to the v2.0 API that already existed in Folsom, Keystone now offers a v3 API that runs in parallel with the old one, so existing installations do not need to be reconfigured.
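To show what "two APIs side by side" means in practice, the following Python sketch builds the JSON bodies a client would POST to each version's token endpoint: `/v2.0/tokens` for the old API and `/v3/auth/tokens` for the new one. The field layout follows the published Keystone v2.0 and v3 identity API references as I understand them. The user, password, and project values are placeholders, and the "Default" domain name is an assumption about a standard setup. The sketch only constructs the requests and does not send them.

```python
import json


def v2_token_request(username: str, password: str, tenant: str) -> dict:
    """Body for POST /v2.0/tokens: password credentials scoped to a tenant."""
    return {
        "auth": {
            "passwordCredentials": {"username": username, "password": password},
            "tenantName": tenant,
        }
    }


def v3_token_request(username: str, password: str, project: str,
                     domain: str = "Default") -> dict:
    """Body for POST /v3/auth/tokens: a list of auth methods plus a scope.

    The "Default" domain name is an assumption about a standard setup.
    """
    return {
        "auth": {
            "identity": {
                "methods": ["password"],
                "password": {
                    "user": {
                        "name": username,
                        "domain": {"name": domain},
                        "password": password,
                    }
                },
            },
            # What v2.0 calls a tenant is scoped as a project in v3.
            "scope": {"project": {"name": project, "domain": {"name": domain}}},
        }
    }


# Both versions are reachable side by side under different URL paths.
ENDPOINTS = {
    "v2.0": ("/v2.0/tokens", v2_token_request),
    "v3": ("/v3/auth/tokens", v3_token_request),
}

if __name__ == "__main__":
    for version, (path, build) in ENDPOINTS.items():
        body = build("demo", "secret", "demo")  # placeholder credentials
        print(version, "POST", path, json.dumps(body, sort_keys=True))
```

An existing v2.0 client keeps sending the first body and is unaffected by the second endpoint's existence.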

The developers promise that the old API will remain in place at least until the I release. As with Ubuntu, OpenStack release code names follow the alphabet; Grizzly's successor, for example, is Havana.

That said, the new functions of the v3 API cannot be used through the v2.0 API. In a test with a pre-release version of Grizzly, migrating a Folsom Keystone to a Grizzly Keystone worked well, although the user base I migrated was fairly small. All told, the update seemed reliable, but admins would do well to test the migration under laboratory conditions before converting a production system.

New Components

So Grizzly makes an upgrade feasible, but is it worthwhile? The answer might lie in the two new additions to the family of OpenStack core components: Ceilometer and Heat.

Ceilometer is OpenStack's eagerly awaited statistics component. However, Ceilometer is not just designed to collect statistical data in OpenStack and generate colorful charts. Its developers see it primarily as the foundation for billing. Ceilometer thus replaces the very rudimentary usage statistics previously built into Nova, the compute component.

The highlight of Ceilometer is that it can reportedly make its data available to any number of consumers. In theory, it can thus serve as the data source for a commercial billing system and for cloud monitoring at the same time. One client (a "consumer" in Ceilometer-speak) is BillingStack, a separate billing system for OpenStack that charges for OpenStack services on the basis of actual usage. The Ceilometer site does not list any other consumers to date, but Ceilometer only joined OpenStack as an incubated project in Grizzly, so more consumers are likely to follow.
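The consumer idea can be illustrated with a short Python sketch. One stream of usage samples feeds two independent consumers: a billing consumer that turns usage into per-project charges, and a monitoring consumer that sums usage per meter. The `Sample` record, the meter names, and the prices are all hypothetical. They do not reflect Ceilometer's actual data model or BillingStack's pricing logic.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    """A hypothetical usage sample; Ceilometer's real schema differs."""
    project: str
    meter: str      # illustrative meter name, e.g., "instance.hours"
    volume: float


def billing_consumer(samples: list[Sample],
                     prices: dict[str, float]) -> dict[str, float]:
    """Charge each project for its usage; meters without a price cost nothing."""
    totals: dict[str, float] = defaultdict(float)
    for s in samples:
        totals[s.project] += s.volume * prices.get(s.meter, 0.0)
    return dict(totals)


def monitoring_consumer(samples: list[Sample]) -> dict[str, float]:
    """Total usage per meter across all projects, e.g., for capacity graphs."""
    totals: dict[str, float] = defaultdict(float)
    for s in samples:
        totals[s.meter] += s.volume
    return dict(totals)
```

Because neither consumer modifies the samples, adding a third consumer does not affect the other two. This is the property that would let a single metering source serve billing and monitoring at once.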

Grizzly welcomes another newcomer: Heat has also made the grade as an incubated project. Heat describes itself as an orchestration suite, but it does not compete directly with tools such as Puppet or Chef. Heat is strictly specific to OpenStack. From a single file, called a template, it lets users build virtual machines from scratch and connect them with one another. For example, a "Web Server Cluster" template could automatically create an entire web server farm.

Heat includes practical features such as high availability and basic monitoring. Moreover, Heat supports the AWS CloudFormation template format, which enables orchestration across multiple VMs in your own cloud. Ultimately, Heat is primarily a tool that helps admins automate. That makes it a very good fit for OpenStack, whose guiding principle is total automation.
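The following Python sketch illustrates the CloudFormation format. It generates a minimal template for the "Web Server Cluster" example: a number of identical `AWS::EC2::Instance` resources, which Heat can map to Nova servers. The image and flavor names are placeholders. A real template would also need networking, security groups, and software configuration, so treat this only as a sketch of the file's structure.

```python
import json


def web_cluster_template(size: int, image: str, flavor: str) -> dict:
    """Build a CloudFormation-style template with `size` identical web servers.

    `image` and `flavor` are placeholders for a Glance image and a Nova flavor.
    """
    if size < 1:
        raise ValueError("a cluster needs at least one server")
    resources = {
        f"WebServer{i}": {
            "Type": "AWS::EC2::Instance",
            "Properties": {"ImageId": image, "InstanceType": flavor},
        }
        for i in range(1, size + 1)
    }
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Web Server Cluster (illustrative sketch)",
        "Resources": resources,
    }


if __name__ == "__main__":
    print(json.dumps(web_cluster_template(3, "my-web-image", "m1.small"), indent=2))
```

The resulting JSON file is the single template the text describes; scaling the farm amounts to regenerating it with a different `size`.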

New Features in Keystone

In Grizzly, the established OpenStack components also impress with a number of great new features. Keystone, the central identity component, leads the way with support for Active Directory, which, after LDAP, is the second most widely used method for managing users centrally.

The new v3 API also brings some changes. For example, tenants are now called projects. Anyone who knows OpenStack's history will experience déjà vu here, because Nova originally had projects, which were later renamed tenants. Security-conscious admins will welcome the option of using multi-factor authentication in Keystone.

Glance with Many Back Ends

The developers of the Glance image service have listened to their users' complaints and implemented a feature that many urgently wanted: Glance can now use multiple image back ends in parallel. Until now, admins had to decide whether to store images in Swift, in Ceph, or locally on the Glance host's disk. In Grizzly, you can combine different image stores.
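Conceptually, running several back ends at once means that each image location carries a URI whose scheme identifies the store holding the data. The Python sketch below models that dispatch with a hypothetical store registry. Glance's real store drivers, URI formats, and configuration options differ in detail, so read it only as an illustration of the idea.

```python
from __future__ import annotations

from urllib.parse import urlparse

# Hypothetical registry: several image stores are enabled at the same time,
# and the scheme of an image location URI decides which one holds the data.
STORES = {
    "file": "local disk of the Glance host",
    "swift": "Swift object store",
    "rbd": "Ceph RBD pool",
}


def locate(uri: str) -> tuple[str, str]:
    """Return (store description, path inside that store) for an image URI."""
    parsed = urlparse(uri)
    if parsed.scheme not in STORES:
        raise ValueError(f"no store configured for scheme {parsed.scheme!r}")
    return STORES[parsed.scheme], parsed.netloc + parsed.path


if __name__ == "__main__":
    # Illustrative locations; the same deployment mixes all three stores.
    for uri in ("file:///var/lib/glance/images/3f2a",
                "swift://glance/7c1d",
                "rbd://images/9b4e"):
        print(uri, "->", locate(uri))
```

Adding a store in this model only means adding a registry entry; images already stored elsewhere keep their URIs and remain reachable.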

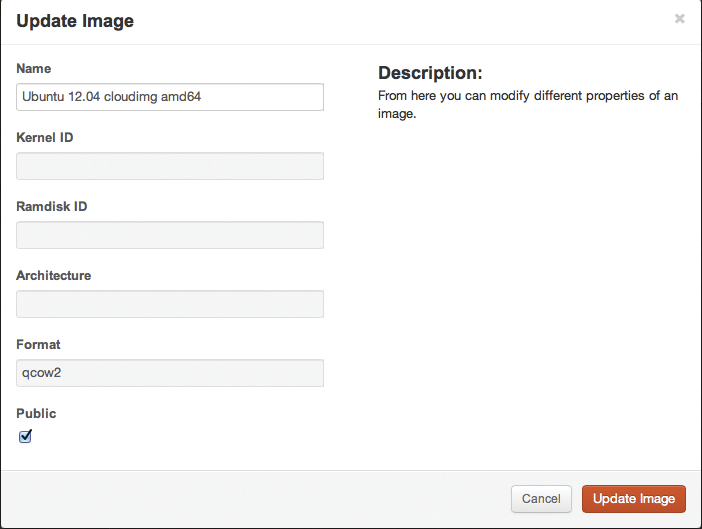

Another new feature in Grizzly is the ability to share your own images with other users. Previously, user images were available only to their owner, which often led to duplicates. Additionally, admins can now store many more details about each image in the Glance database, including the exact version of the operating system. Until now, only a name could be assigned.

Admins can also set flags stating, for example, that an image contains Windows 7 or Windows 8, and they can define image format properties. The primary goal is greater clarity in large OpenStack installations with correspondingly many images. All the new fields are indexed and therefore searchable, so if you need a specific OS in the cloud, you will find it much faster.
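A small in-memory Python sketch shows why indexed properties speed up searches. Each image carries key/value properties, and an inverted index maps every (key, value) pair to the matching image IDs. A query then intersects a few sets instead of scanning every image. The property names `os_distro` and `os_version` are illustrative choices. Glance's actual schema and database indexes are not reproduced here.

```python
from __future__ import annotations

from collections import defaultdict


class ImageIndex:
    """Toy inverted index over image properties (not Glance's implementation)."""

    def __init__(self) -> None:
        self._index: dict[tuple[str, str], set[str]] = defaultdict(set)

    def add(self, image_id: str, properties: dict[str, str]) -> None:
        """Register every (key, value) property of an image in the index."""
        for key, value in properties.items():
            self._index[(key, value)].add(image_id)

    def search(self, **criteria: str) -> set[str]:
        """Return IDs of images whose properties match all given criteria."""
        result: set[str] | None = None
        for key, value in criteria.items():
            matches = self._index.get((key, value), set())
            result = matches if result is None else result & matches
        # Copy so callers can never mutate the index's internal sets.
        return set(result or ())
```

For example, after adding a Windows 7 image and a Windows 8 image, `search(os_distro="windows", os_version="8")` touches only two index entries, however many images the cloud holds.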

OpenStack Compute (Nova) is perhaps the most important OpenStack core component, and the developers made many changes to it in Grizzly. During configuration, you will first notice that something is missing: nova-volume was effectively kicked out of Nova in Folsom, and the developers have now eradicated its final remnants. The same is true for most of the functions formerly found in nova-manage, which used to provide additional administrative features alongside nova, the regular CLI client for Nova. No more: In the long term, nova-manage will only be needed to create Nova's tables in the MySQL database during initial installation. All other functions are being migrated to nova.

Return of the Cells

If you have been following OpenStack for a long time, you will meet an old friend in a new guise in Grizzly's Nova: Cells are back. In earlier OpenStack versions, cells let you group compute nodes and then use these groups in a targeted manner. For example, you could specify that different VMs belonging to one customer had to run in different cells, which might reside in two separate data centers.

In OpenStack Essex, the cell feature fell out of favor, and the developers removed it. They did so not because it had proven useless, but because they did not like the implementation. Now cells are back as Nova Compute Cells. They allow precise control of geographically distributed systems and make it possible to separate host scheduling from cell scheduling, so that individual tenants can be given preferential treatment if necessary.
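The placement rule from the cells example, where one customer's VMs must land in different cells, can be sketched in Python as a simple spread scheduler. The cell names, capacities, and the `place` function are hypothetical. Nova's cells scheduler has its own filters and weighting, which are not modeled here.

```python
from __future__ import annotations


def place(vms: list[str], cells: dict[str, int]) -> dict[str, str]:
    """Assign each VM of one customer to a distinct cell.

    `cells` maps a (hypothetical) cell name to its free capacity. Cells with
    more free capacity are preferred, ties are broken by name, and no cell
    receives more than one of this customer's VMs.
    """
    candidates = sorted(
        (name for name, free in cells.items() if free > 0),
        key=lambda name: (-cells[name], name),
    )
    if len(candidates) < len(vms):
        raise RuntimeError("not enough cells to keep every VM separate")
    return dict(zip(vms, candidates))
```

With cells standing for two data centers, each VM of a redundant pair lands in a different location. A third VM is rejected rather than doubled up, because that would violate the separation rule.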

Another new feature in Grizzly looks trivial but is spectacular: The libvirt back end of OpenStack now understands SPICE, a protocol for connecting a client to the graphical desktop of a virtualized system. SPICE serves much the same purpose as VNC, but it offers much better performance. In Grizzly, it is finally possible to connect to the desktops of Windows VMs reliably and easily. OpenStack thus opens up to a whole new target group, because SPICE lets you use OpenStack as a typical virtual desktop infrastructure (VDI) solution. With VNC, that was unthinkable.

If you like VMware, you will be pleased to hear that Nova in Grizzly has a compute driver for VMware. This makes it possible to use VMware hosts as OpenStack compute nodes. If you prefer virtualization with VMware to KVM or Xen, Grizzly finally lets you do so.

Nova and High Availability

Up to now, support for high availability (HA) in Nova has been limited. The developers promised improvements in Grizzly, and they delivered, at least in part: Unfortunately, the Evacuate feature stops halfway to true HA functionality.

Evacuate is designed to let administrators restart VMs on other hosts if the compute node they were running on goes down. However, the implementation falls short. Although Evacuate provides exactly this function, OpenStack still has no reliable way of determining whether a compute node has failed. Failed VMs are now much easier to restart on other nodes, but the admin still needs to step in manually, which is unfortunately far removed from true high availability. Because the developers mention the HA scenario in the Evacuate specification, there is still hope for a full implementation, perhaps in Havana.
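The missing piece is failure detection. A common approach is a heartbeat: Each compute node reports in periodically, and a node whose last report is older than a timeout is treated as down. The Python sketch below uses such a heartbeat to plan which VMs would be evacuated and where. The 60-second timeout and the data structures are hypothetical and are not taken from Nova. A real HA setup would also need fencing, to make sure a "dead" node is not in fact still running its VMs.

```python
from __future__ import annotations

import time

# Hypothetical timeout: a host silent for longer than this is considered down.
DOWN_AFTER_SECONDS = 60.0


def down_hosts(last_heartbeat: dict[str, float], now: float,
               timeout: float = DOWN_AFTER_SECONDS) -> set[str]:
    """Return hosts whose most recent heartbeat is older than `timeout`."""
    return {h for h, seen in last_heartbeat.items() if now - seen > timeout}


def evacuation_plan(instances: dict[str, str],
                    last_heartbeat: dict[str, float],
                    now: float | None = None) -> dict[str, str]:
    """Map each VM on a failed host to a healthy host, round-robin.

    `instances` maps VM name -> current host. This only plans the moves;
    restarting the VMs and fencing the failed node are out of scope.
    """
    now = time.time() if now is None else now
    failed = down_hosts(last_heartbeat, now)
    healthy = sorted(set(last_heartbeat) - failed)
    victims = sorted(vm for vm, host in instances.items() if host in failed)
    if victims and not healthy:
        raise RuntimeError("no healthy host left to evacuate to")
    return {vm: healthy[i % len(healthy)] for i, vm in enumerate(victims)}
```

Run periodically, a plan like this could drive Evacuate automatically. The hard part in practice is trusting the "down" verdict, which is exactly what Grizzly does not yet provide.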

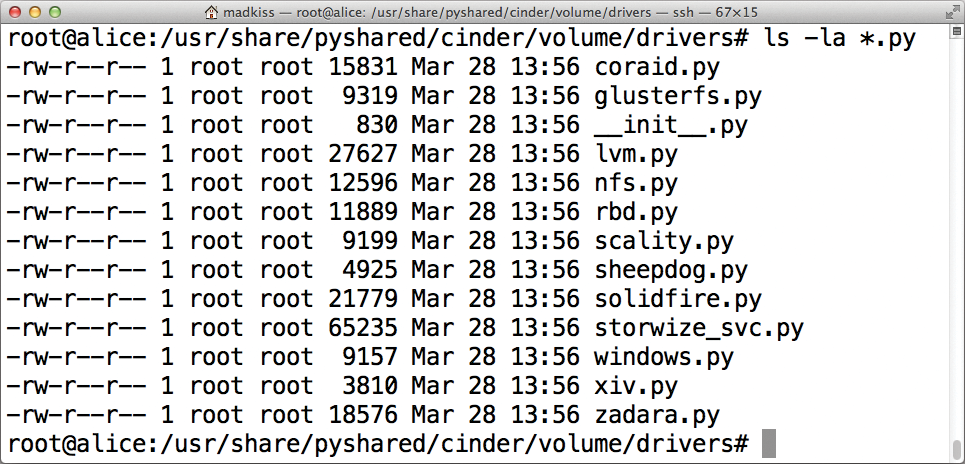

Flood of Drivers in Cinder

Thanks to its many new drivers, the Cinder block storage component shines in Grizzly. For example, it now supports direct connections to Fibre Channel storage and comes with native support for HP 3PAR and EMC storage (Figure 1).

New features have also been added: Like Glance, Cinder can now handle multiple back ends at the same time. This is really useful, especially if you want to use an existing SAN and a new Ceph cluster as back ends simultaneously. Cinder also now supports volume backups and image cloning out of the box.
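With several back ends active at once, something has to decide which one receives a new volume. As far as I know, Cinder's multi-back-end setup does this by tagging each volume type with a back-end name (the `volume_backend_name` extra spec). The Python sketch below mimics that matching, using invented back ends and free-space figures. It is an illustration, not Cinder's scheduler code.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Backend:
    name: str          # illustrative, e.g., "cinder1@san"
    backend_name: str  # value matched against the volume type's extra spec
    free_gb: int


def choose_backend(backends: list[Backend], extra_specs: dict[str, str],
                   size_gb: int) -> Backend:
    """Pick the matching back end with the most free space.

    If the volume type names no back end, any back end with room qualifies.
    """
    wanted = extra_specs.get("volume_backend_name")
    candidates = [
        b for b in backends
        if (wanted is None or b.backend_name == wanted) and b.free_gb >= size_gb
    ]
    if not candidates:
        raise LookupError("no back end can host this volume")
    return max(candidates, key=lambda b: b.free_gb)
```

In the SAN-plus-Ceph scenario from the text, one volume type would point at the SAN and another at Ceph, and users would pick the storage simply by choosing a volume type.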

HA in Quantum

In Grizzly, the Quantum network service impresses with a new component called the Quantum Scheduler. The scheduler lets you run many instances of the various Quantum services simultaneously on multiple hosts. More specifically, you can use several distributed DHCP and L3 agents at the same time. Scaling out this way removes the need for a single network node, which could become a bottleneck.

The new high availability support is also useful because the failure of one host running network services no longer automatically affects all VMs. Additionally, alternative Quantum servers can seamlessly take over the function of a failed server.
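The basic idea of spreading DHCP (or L3) work across several agents can be sketched in Python as a scheduler that assigns each network to the live agent currently hosting the fewest networks. This least-loaded policy was chosen for clarity; the scheduler shipped with Grizzly may use a different strategy. The agent and network names are hypothetical.

```python
from __future__ import annotations


def schedule(networks: list[str], agents: list[str],
             alive: set[str]) -> dict[str, list[str]]:
    """Assign each network to the live agent hosting the fewest networks so far."""
    live = [a for a in agents if a in alive]
    if not live:
        raise RuntimeError("no live agent available")
    load: dict[str, list[str]] = {a: [] for a in live}
    for net in networks:
        # min() keeps the first agent on ties, so results are deterministic.
        target = min(live, key=lambda a: len(load[a]))
        load[target].append(net)
    return load
```

Calling `schedule` again with a smaller `alive` set models the failover case: the networks of a dead agent are redistributed over the survivors, and no single host carries every network.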

Security officers will be happy to hear that OpenStack now natively supports iptables at the Open vSwitch level. Security groups in Nova are officially classified as "deprecated," so Quantum is now solely in charge of network security.
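To make "security groups enforced with iptables" concrete, the Python sketch below renders a simple ingress rule into iptables command-line arguments. The `Rule` structure and the chain name `sg-web` are hypothetical. Quantum's firewall driver creates its own per-port chains and naming scheme, which this sketch does not reproduce.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Rule:
    """A hypothetical security group rule allowing inbound traffic."""
    protocol: str                  # "tcp", "udp", or "icmp"
    port: int | None = None        # destination port; None means any
    source_cidr: str = "0.0.0.0/0"


def to_iptables(chain: str, rule: Rule) -> list[str]:
    """Render an ingress 'allow' rule as iptables command-line arguments."""
    args = ["-A", chain, "-p", rule.protocol, "-s", rule.source_cidr]
    if rule.port is not None:
        if rule.protocol not in ("tcp", "udp"):
            raise ValueError("ports only apply to tcp and udp")
        args += ["--dport", str(rule.port)]
    return args + ["-j", "ACCEPT"]


if __name__ == "__main__":
    ssh = Rule("tcp", 22, "10.0.0.0/8")
    print("iptables " + " ".join(to_iptables("sg-web", ssh)))
```

Generating rules from a declarative description like this is what lets the network service, rather than each admin, keep firewall state consistent across hosts.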

The function that the Quantum developers have really been beating the drum for is Load Balancing as a Service (LBaaS). Although this sounds like it came straight from the marketing department, it actually brings a very complex function right into the heart of Quantum. With LBaaS, Quantum can act as a load balancer for different services, without the need for a separate virtual server with the appropriate functionality. Quantum itself takes on the role of a dedicated load balancer and distributes packets across the various instances of a cloud directly in the network stack.
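The core job of a load balancer, spreading requests across pool members, fits into a few lines of Python. The sketch below shows plain round-robin over the members currently marked healthy. The member addresses are placeholders, and the sketch makes no claim about how Quantum's LBaaS actually implements balancing.

```python
from __future__ import annotations

import itertools


class RoundRobinPool:
    """Hand out healthy pool members in turn (a toy model of a balanced pool)."""

    def __init__(self, members: list[str]) -> None:
        self.members = list(members)
        self.healthy = set(self.members)
        self._cycle = itertools.cycle(self.members)

    def mark_down(self, member: str) -> None:
        self.healthy.discard(member)

    def mark_up(self, member: str) -> None:
        if member in self.members:
            self.healthy.add(member)

    def next_member(self) -> str:
        """Return the next healthy member, skipping unhealthy ones."""
        if not self.healthy:
            raise RuntimeError("no healthy members in pool")
        # One full pass over the cycle is guaranteed to reach a healthy member.
        for _ in range(len(self.members)):
            member = next(self._cycle)
            if member in self.healthy:
                return member
        raise RuntimeError("unreachable: a healthy member must exist")
```

When a member is marked down, it simply drops out of the rotation until it is marked up again. A load balancer integrated into the network service can make that decision without a separate balancer VM in the path.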

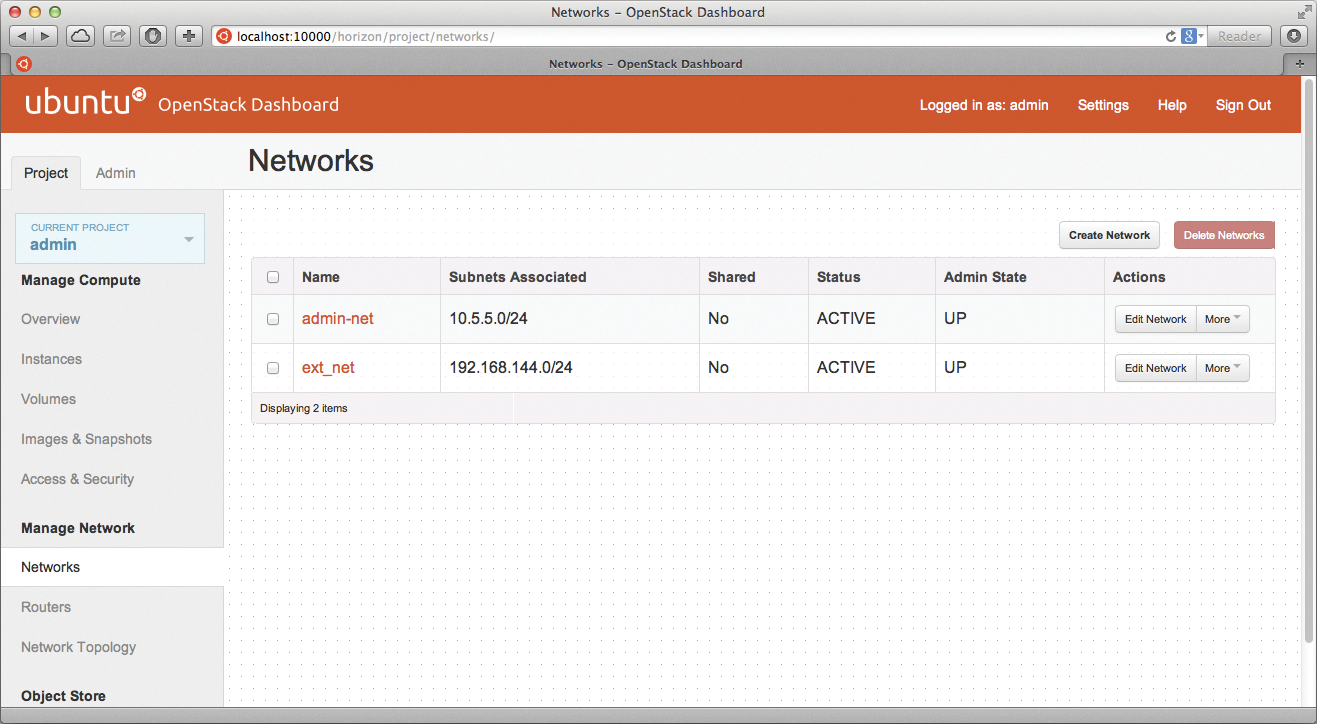

The Dashboard: A Lot of Quantum

The component that end users interact with most is the dashboard, Horizon (Figure 2). For Horizon, the changes in Grizzly are all about Quantum, because that was one of the biggest open issues after the Folsom release in the fall of 2012.

Although the Quantum team did indeed deliver an initial version of Quantum for Folsom as promised, the dashboard knew nothing about it and was still designed to configure the network through Nova. The Nova developers created a partially functional workaround: They patched Nova so that, in Quantum setups, it passed the appropriate commands on to Quantum instead of configuring the network itself. In production environments, however, this nasty hack has proven very unreliable. For example, Folsom needs a patch before the web interface can handle floating IPs.

Grizzly puts an end to this hack, because Horizon has been given a completely new back end and now fully understands Quantum. It is therefore no surprise that the LBaaS function appears in the dashboard alongside the basic functions. Smaller improvements have made it in, too. For example, you can now migrate VMs via the dashboard (Figure 3) and display a graphical overview of the network topology.

Conclusions

OpenStack is maturing at a staggering pace. This is particularly evident because, although Grizzly still adds many new and very useful features to OpenStack, they tend to be smaller and meet the needs of increasingly specialized groups. You will look in vain for gigantic innovations like Quantum in Folsom. Instead, there are many medium-sized innovations and much attention to detail.

One great achievement is undoubtedly that Cinder and Quantum now come with schedulers that let services scale out seamlessly and thus eliminate potential bottlenecks. Active Directory support in Keystone will be a killer feature for many businesses and could fuel their OpenStack ambitions.

Moreover, many small but useful enhancements and bug fixes make this version of OpenStack very attractive. Cells in Nova, multi-factor authentication in Keystone, and multiple back ends in Glance are not groundbreaking, but they are still very useful.

If you are just starting to look into OpenStack, make sure you start with the Grizzly release. Even if you don't need any of the new features, you should still consider an update, because the janitorial work in OpenStack Grizzly has been done very well. At the end of the day, my overall verdict is: Thumbs up!