Integrating OCS information into monitoring with OpenNMS

Automatic Transfer

OCS Inventory NG (Next Generation) [1] is an inventory system that gathers information about hardware and software running on your network. OCS uses an SNMP-based discovery mechanism to inventory network components. The agent collects information about:

- logical drives and partitions,

- operating systems running on network hosts,

- installed programs, and

- connected monitors.

OCS stores the collected data in a MySQL database. You can configure OCS to organize and categorize the inventory data for a quick overview of network information.

OCS was developed by a French team using Perl and PHP and is released under the GPLv2. The project is supported by FactorFX, which offers commercial support and training.

One really interesting feature of OCS is its support for SOAP web messaging services, which makes the OCS inventory data available to other applications and thus leads to some very useful automation scenarios. In this article, we describe how to channel OCS inventory data to the OpenNMS network monitoring tool. Combining OCS with OpenNMS means you can automatically monitor the devices in the OCS inventory and even send failure notifications if a device on the network is misbehaving.

OCS

OCS uses a software agent that is available for the Linux, Unix, Windows, OS X, and Android operating systems. You can download the OCS server, agents, plugins, and tools from the project website [2]. The OCS project documentation [3] and forum [4] will help you get started installing and configuring OCS.

OpenNMS

The OpenNMS network management platform [5] was predominantly developed in Java and is released under the GPLv3+. The project makes no distinction between a free community version and a proprietary enterprise version. OpenNMS Group Inc. provides commercial training, support, and development.

The application focuses on error and performance monitoring. As a platform, it offers an extensive provisioning system that can integrate various external data sources; in OpenNMS, these tasks are handled by the provisiond process. For example, DNS zones and virtual machines from VMware vCenter can be automatically synchronized and monitored.

The data is transferred to OpenNMS in XML format over HTTP. You have several ways of assigning monitors, for example, for HTTP, SMTP, IMAP, and JDBC. Service detectors can discover these services automatically, or you can assign them manually; a mixture of the two approaches is also possible. Policies can be used to categorize systems automatically or to specify the network interfaces from which to capture performance data. To support use in larger environments, OpenNMS was built on an event-based architecture.

Creating Connections

If you use OCS, you have access to extensive information on server systems in a central inventory, and this information is also very useful for monitoring. Models or serial numbers identified by the OCS agents can deliver helpful information in a message to the OpenNMS administrator in the event of a failure. However, OCS frequently also covers systems that are not relevant for monitoring, such as workstations or systems that are still in the development environment.

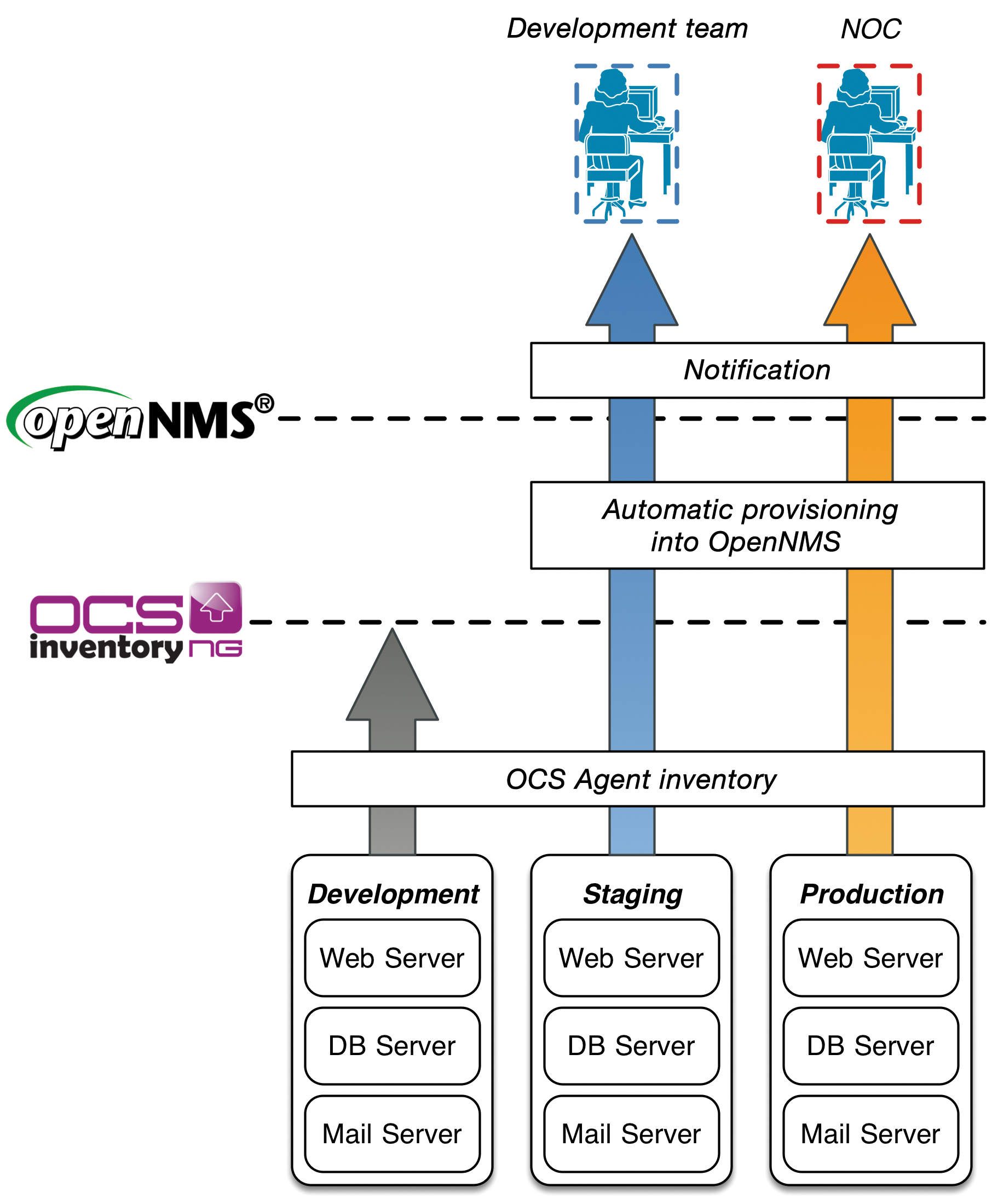

If you want to manage systems with OCS and automatically transfer the data for OpenNMS-based monitoring, a setup like the one outlined in Figure 1 is a good idea.

Installing the OCS agents ensures that OCS actually sees the systems to be monitored later on. New server systems are provisioned in a dedicated development environment. In this example, systems in the development environment are of no interest for monitoring in OpenNMS and are simply listed in the OCS inventory. Before server systems go into production, they are tested in a staging environment; if there are any problems, the development team is informed. Messages about malfunctions affecting systems in the production environment are routed to the Network Operations Center (NOC). Monitoring therefore only covers the systems in staging and production.

To show how monitoring profiles can be implemented for a specific server, the example distinguishes between mail, web, and database servers. It assumes that the stable version 1.12.3 of OpenNMS [6] is installed, along with OCS version 2.1RC1 [7] on a CentOS 6.5 server.

Interaction Between the Components

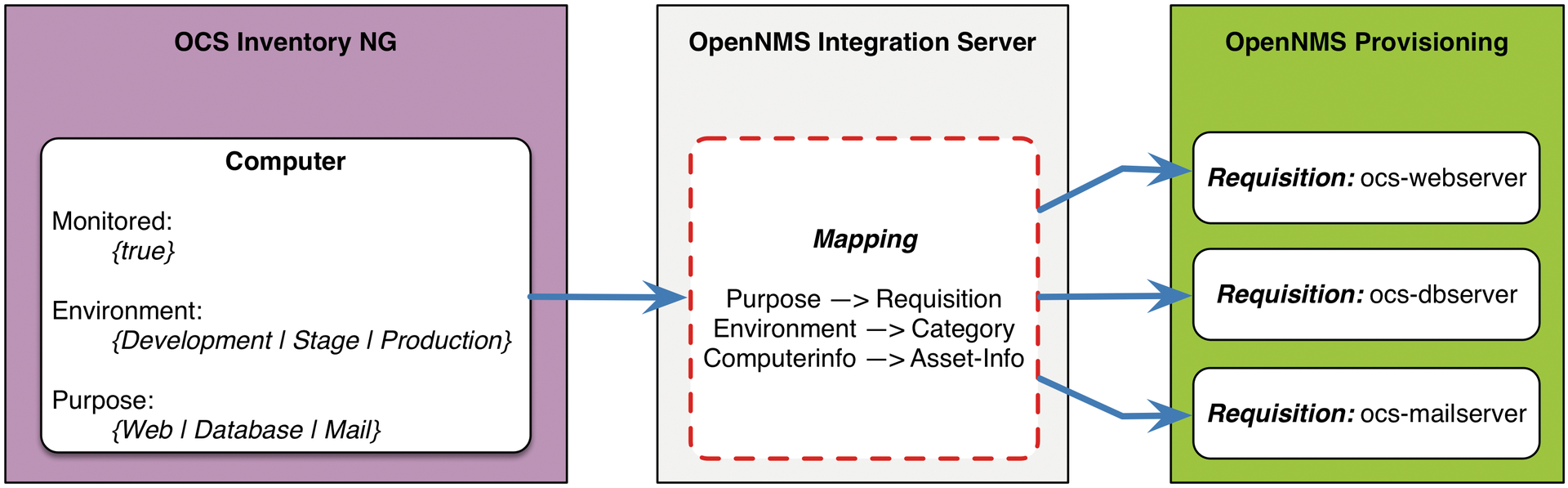

Besides the two applications, OpenNMS and OCS, you also need a Provisioning Integration Server (PRIS). This easily extensible server reads data from the OCS data model and converts it into the OpenNMS node data model. On one side, the data is retrieved via the OCS SOAP interface; on the other side, it is provided in XML format via HTTP to provisiond in OpenNMS. During the transformation, rules and conditions can be evaluated and applied. The default behavior is already defined in PRIS; it relates to the network interface and inventory information. To implement the process shown in Figure 1, you need to create three user-defined attributes in OCS that PRIS can evaluate.

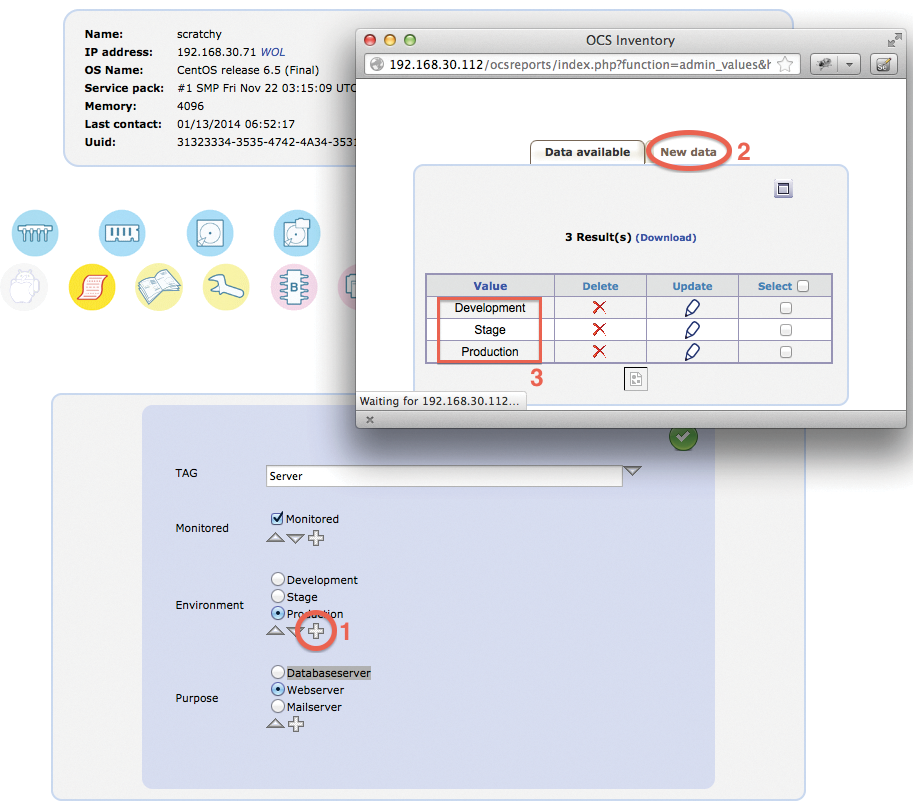

Figure 2 shows which attributes are used to manage monitoring in OpenNMS. The OCS attributes have the following meanings:

- Monitored: Specifies whether the system is relevant for monitoring.

- Environment: Specifies the environment in which the system is located.

- Purpose: Specifies the tasks the server performs.

For each purpose, an OpenNMS data source (requisition) is created. The requisition specifies which service detectors are needed for web, mail, and database servers. OpenNMS also maps the environment via surveillance categories. These categories later allow you to assign different notification paths for the network operations center and the development team.

Enabling SOAP

When you install OCS, two steps are necessary: The first step is to activate the SOAP web service; in the second step, you define the custom attributes. To activate the SOAP web service, you need to edit the Perl environment variable in /etc/httpd/conf.d/z-ocsinventory-server.conf as follows:

    # === WEB SERVICE (SOAP) SETTINGS ===
    PerlSetEnv OCS_OPT_WEB_SERVICE_ENABLED 1

The web service interface is configured for basic authentication against a user file. To generate the user file, run the command:

htpasswd -c /etc/httpd/APACHE_AUTH_USER_FILE SOAP_USER
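Once the user file exists, a client has to present these credentials with every SOAP call. The following Python sketch only builds such a request without sending it, to show what basic authentication adds to the HTTP headers. The `/ocsinterface` path, the hostname, and the password `secret` are assumptions for illustration; check your own Apache configuration for the actual location of the SOAP interface.

```python
import base64
import urllib.request


def build_soap_request(base_url, user, password):
    """Build (but do not send) a request that carries basic-auth credentials."""
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    # "/ocsinterface" is an assumed endpoint path, not taken from the article.
    request = urllib.request.Request(base_url.rstrip("/") + "/ocsinterface")
    request.add_header("Authorization", f"Basic {token}")
    return request


# Hypothetical host and password, for illustration only.
req = build_soap_request("http://ocs-server.mydomain.com", "SOAP_USER", "secret")
print(req.full_url)
print(req.get_header("Authorization"))
```

Because basic authentication only encodes the credentials and does not encrypt them, it makes sense to restrict access to the interface or to serve it over HTTPS.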

In the next step, the custom attributes for the OCS computers are created.

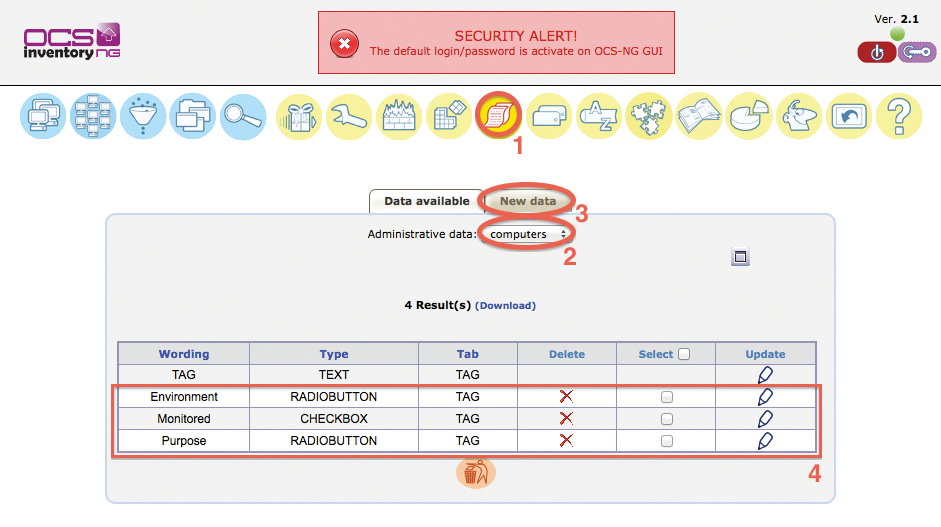

Creating Custom Attributes

The description is based on Figure 3. The attributes are created in the main menu under Administrative data (1). To do this, check the computers box (2). Then, use the New data tab (3) to add the fields with the appropriate data types. The results should be similar to those shown in Figure 3(4).

To provide selection options for the generated fields, you need to open the detailed view of a previously inventoried computer. You can now edit the attributes as shown in Figure 4. Pressing the plus symbol (1) opens the dialog for adding an attribute. Then, select New data (2) and create the environments: Development, Stage, and Production. You should create the attributes for the environments as shown in the table (3). For each additional server, you can then use these three selection fields to define how it is handled in OpenNMS monitoring.

This completes the changes in OCS, and you can move on to configure PRIS and OpenNMS itself.

Installing the Integration Server

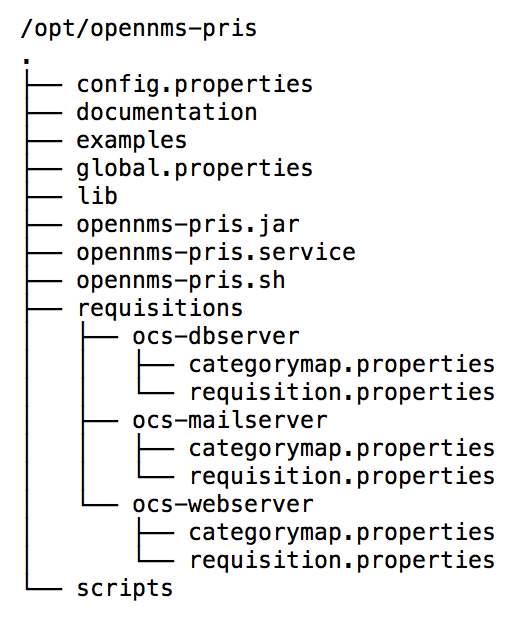

Installing PRIS is easy using the OpenNMS Yum and Debian repositories. The Java source code is also available, so you can compile it yourself. The installation options are described in the OpenNMS wiki for OCS integration [8]. In this example, PRIS is installed on the same server as OpenNMS. The server listens on the local address 127.0.0.1 on port TCP/8000 and provides the data for the servers to be imported in XML format. The structure of the PRIS configuration files is shown in Figure 5.

The directories ocs-dbserver, ocs-mailserver, and ocs-webserver hold the configuration for the OpenNMS requisitions outlined in Figure 2. The basic behavior of PRIS is defined in the global.properties file (see Listing 1). The driver parameter indicates that HTTP output is expected. The host and port parameters let you define the network interface and port on which to listen for incoming connections.

Listing 1: global.properties

    ### start an http server that provides access to requisition files
    driver = http
    host = 127.0.0.1
    port = 8000

    ### file run to create a requisition file in target
    #driver = file
    #target = /tmp/
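To see which settings in Listing 1 are actually active, it helps to read the file the way a simple properties parser would: comment lines starting with # are skipped, and everything else is split at the first equals sign. The following Python sketch is a simplified reader for illustration only; it does not reproduce all rules of the Java properties format and is not how PRIS itself loads the file.

```python
def parse_properties(text):
    """Parse simple 'key = value' lines, ignoring blank lines and # comments."""
    settings = {}
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        key, separator, value = line.partition("=")
        if separator:
            settings[key.strip()] = value.strip()
    return settings


GLOBAL_PROPERTIES = """\
### start an http server that provides access to requisition files
driver = http
host = 127.0.0.1
port = 8000

### file run to create a requisition file in target
#driver = file
#target = /tmp/
"""

settings = parse_properties(GLOBAL_PROPERTIES)
print(settings)  # {'driver': 'http', 'host': '127.0.0.1', 'port': '8000'}
```

The commented-out file driver does not appear in the result, so only the HTTP server on 127.0.0.1:8000 is configured.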

In the ocs-webserver subdirectory, you will find a requisition.properties file with a structure as shown in Listing 2. This file determines the OCS server from which to retrieve the data. The OCS server in this example has the address 192.168.30.112, and the SOAP interface can be addressed with the SOAP_USER account and a password of mypass.

Listing 2: requisition.properties

    ### SOURCE ###
    ## connect to a real ocs and read computers
    source = ocs.computers

    ## test with static files, no network calls
    #source = ocs.computers.replay
    #file = computers.xml

    ## OCS SOURCE PARAMETERS ##
    source.url = http://ocs-server.mydomain.com
    source.username = SOAP_USER
    source.password = mypass
    source.checksum = 4867
    source.tags = Server

    ### MAPPER ###
    ## Run the default mapper for computers
    mapper.ocs.url = http://ocs-server.mydomain.com
    mapper = ocs.computers
    mapper.accountinfo = MONITORED.Monitored PURPOSE.Webserver

    ### CATEGORIES ###
    mapper.categoryMap = categorymap.properties

The accountinfo parameter specifies that the ocs-webserver requisition only selects OCS computers that have Monitored set and a purpose of Webserver. Similar directories are created for mail and database servers. In the respective requisition.properties files, the accountinfo values are adjusted to reflect the intended use: Mailserver and Databaseserver.
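The selection rule can be pictured as a list of FIELD.Value pairs that must all match a computer's custom attributes. The following Python sketch illustrates that logic under stated assumptions: the computer records, hostnames, and the value Unmonitored are made up, and the code is not taken from PRIS.

```python
def parse_accountinfo(spec):
    """Turn 'MONITORED.Monitored PURPOSE.Webserver' into a dict of required values."""
    required = {}
    for token in spec.split():
        field, _, value = token.partition(".")
        required[field] = value
    return required


def matches(computer, required):
    """Return True only if every required field has exactly the required value."""
    return all(computer.get(field) == value for field, value in required.items())


# Hypothetical inventory records, for illustration only.
computers = [
    {"name": "web01", "MONITORED": "Monitored", "PURPOSE": "Webserver"},
    {"name": "mail01", "MONITORED": "Monitored", "PURPOSE": "Mailserver"},
    {"name": "dev-web", "MONITORED": "Unmonitored", "PURPOSE": "Webserver"},
]

required = parse_accountinfo("MONITORED.Monitored PURPOSE.Webserver")
selected = [c["name"] for c in computers if matches(c, required)]
print(selected)  # ['web01']
```

Changing the second pair to PURPOSE.Mailserver would select mail01 instead, which corresponds to the adjustment made in the mail server directory.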

The mapper parameter determines which inventory information from OCS is transferred to OpenNMS. If the standard procedure is not enough, you can specify your own Groovy script to map the data. In the example, this step is unnecessary.

The last parameter, categoryMap, points to a file that assigns each environment to an OpenNMS surveillance category. In the present scenario, the Production and Stage environments are each assigned to an identically named surveillance category in OpenNMS. The categorymap.properties file looks identical for mail, web, and database servers:

    ENVIRONMENT.Production=Production
    ENVIRONMENT.Stage=Stage
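The effect of this mapping can be sketched as a lookup from a FIELD.Value key to a category name. The Python example below is a minimal illustration, assuming that attribute values without an entry in the map simply produce no surveillance category; the input records are hypothetical.

```python
# Mirrors the two entries of the category map shown above.
CATEGORY_MAP = {
    "ENVIRONMENT.Production": "Production",
    "ENVIRONMENT.Stage": "Stage",
}


def categories_for(account_info):
    """Return the surveillance categories for one computer's custom attributes."""
    categories = []
    for field, value in account_info.items():
        category = CATEGORY_MAP.get(f"{field}.{value}")
        if category is not None:
            categories.append(category)
    return categories


print(categories_for({"ENVIRONMENT": "Production", "PURPOSE": "Webserver"}))
print(categories_for({"ENVIRONMENT": "Development", "PURPOSE": "Webserver"}))
```

The first call yields the Production category; the second yields an empty list because Development has no entry in the map.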

PRIS uses the URL http://localhost:8000/requisitions/ocs-webserver to provide the server data for the import, according to the configuration in the ocs-webserver directory. The path used here, /ocs-webserver, matches the directory created on the filesystem. Accordingly, the paths /ocs-dbserver and /ocs-mailserver take you to the categorized database and mail servers from OCS. This completes the configuration of the integration server; in the next step, OpenNMS is set up for automatic synchronization.
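To check what provisiond would receive, you could fetch one of these URLs and summarize the nodes it contains. The Python sketch below parses a hand-written sample instead of making a network call. The sample follows the general shape of an OpenNMS requisition (model-import, node, interface, category), but its hostnames and addresses are hypothetical, and the document PRIS actually produces may contain more elements.

```python
import xml.etree.ElementTree as ET

# Namespace used by OpenNMS requisition documents.
NS = {"r": "http://xmlns.opennms.org/xsd/config/model-import"}

# Hand-written sample; node names and addresses are hypothetical.
SAMPLE = """\
<model-import xmlns="http://xmlns.opennms.org/xsd/config/model-import"
              foreign-source="ocs-webserver">
  <node foreign-id="web01" node-label="web01">
    <interface ip-addr="192.0.2.10" snmp-primary="P"/>
    <category name="Production"/>
  </node>
  <node foreign-id="web02" node-label="web02">
    <interface ip-addr="192.0.2.11" snmp-primary="P"/>
    <category name="Stage"/>
  </node>
</model-import>
"""


def requisition_url(name, host="localhost", port=8000):
    """Build the PRIS URL for a requisition directory such as 'ocs-webserver'."""
    return f"http://{host}:{port}/requisitions/{name}"


def summarize_requisition(xml_text):
    """Return the foreign source name and a short summary of each node."""
    root = ET.fromstring(xml_text)
    nodes = []
    for node in root.findall("r:node", NS):
        nodes.append({
            "label": node.get("node-label"),
            "interfaces": [i.get("ip-addr") for i in node.findall("r:interface", NS)],
            "categories": [c.get("name") for c in node.findall("r:category", NS)],
        })
    return root.get("foreign-source"), nodes


print(requisition_url("ocs-webserver"))
print(summarize_requisition(SAMPLE))
```

Against a running PRIS, you would pass the response body of the URL to summarize_requisition() instead of the sample string.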

Importing with provisiond

To import the systems into OpenNMS and keep them in sync with OCS automatically, you need to configure provisiond by editing the provisiond-configuration.xml file in the /opt/opennms/etc directory, as shown in Listing 3.

Listing 3: provisiond-configuration.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <provisiond-configuration
      xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
      xsi:schemaLocation="http://xmlns.opennms.org/xsd/config/provisiond-configuration"

      foreign-source-dir="/opt/opennms/etc/foreign-sources"
      requistion-dir="/opt/opennms/etc/imports"

      importThreads="8" scanThreads="10" rescanThreads="10" writeThreads="8" >

      <!--
        http://quartz.sourceforge.net/javadoc/org/quartz/CronTrigger.html
        Field Name     Allowed Values     Allowed Special Characters
        Seconds        0-59               , - * /
        Minutes        0-59               , - * /
        Hours          0-23               , - * /
        Day-of-month   1-31               , - * ? / L W C
        Month          1-12 or JAN-DEC    , - * /
        Day-of-Week    1-7 or SUN-SAT     , - * ? / L C #
        Year (Opt)     empty, 1970-2099   , - * /
      -->
      <!-- Import Web-Server -->
      <requisition-def import-name="OCS-Webserver" import-url-resource="http://localhost:8000/requisitions/ocs-webserver">
        <cron-schedule>0 0 0 * * ? *</cron-schedule>
      </requisition-def>

      <!-- Import Mail-Server -->
      <requisition-def import-name="OCS-Mailserver" import-url-resource="http://localhost:8000/requisitions/ocs-mailserver">
        <cron-schedule>0 0 0 * * ? *</cron-schedule>
      </requisition-def>

      <!-- Import Database-Server -->
      <requisition-def import-name="OCS-Databaseserver" import-url-resource="http://localhost:8000/requisitions/ocs-dbserver">
        <cron-schedule>0 0 0 * * ? *</cron-schedule>
      </requisition-def>
    </provisiond-configuration>

The relevant sections here are the requisition definitions (requisition-def), with entries for OCS-Webserver, OCS-Mailserver, and OCS-Databaseserver. They ensure that OpenNMS creates one requisition each for web, mail, and database servers. Each requisition configures the behavior for monitoring. Listing 3 also stipulates that the web, mail, and database servers are synchronized daily at midnight from a local PRIS on port 8000.
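The cron-schedule value uses Quartz syntax, which starts with a seconds field and can end with an optional year field. The following Python sketch simply labels the fields of the expression from Listing 3 so that the "daily at midnight" reading is easy to verify; the helper names are made up, and the code does not validate the full Quartz grammar.

```python
QUARTZ_FIELDS = [
    "seconds", "minutes", "hours",
    "day_of_month", "month", "day_of_week", "year",
]


def describe_cron(expression):
    """Label the fields of a Quartz cron expression (the year field is optional)."""
    parts = expression.split()
    if not 6 <= len(parts) <= 7:
        raise ValueError(f"expected 6 or 7 fields, got {len(parts)}")
    return dict(zip(QUARTZ_FIELDS, parts))


def runs_daily_at_midnight(expression):
    """True if the expression fires at 00:00:00 with no day, month, or year limit."""
    fields = describe_cron(expression)
    at_midnight = all(fields[name] == "0" for name in ("seconds", "minutes", "hours"))
    every_day = (
        fields["day_of_month"] == "*"
        and fields["month"] == "*"
        and fields["day_of_week"] == "?"
        and fields.get("year", "*") == "*"
    )
    return at_midnight and every_day


print(describe_cron("0 0 0 * * ? *"))
print(runs_daily_at_midnight("0 0 0 * * ? *"))  # True
```

Replacing the hours field with an asterisk (0 0 * * * ? *) would trigger the import at the top of every hour instead.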

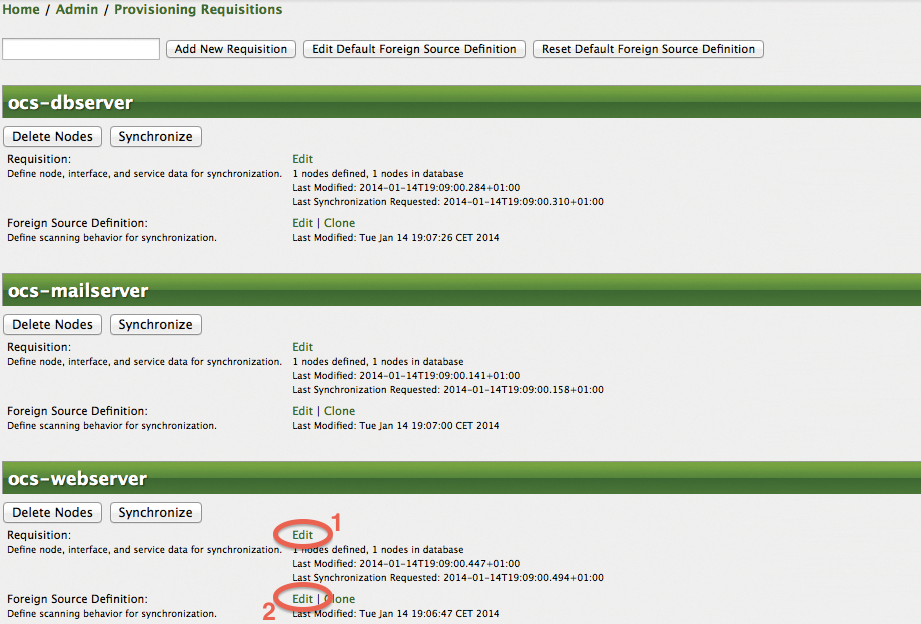

The next step creates the three requisitions in the OpenNMS web interface. For this purpose, select Admin in the main menu, and Manage Provisioning Requisitions. Then, you can use the input field to create the three requisitions – ocs-webserver, ocs-mailserver, and ocs-dbserver – as shown in Figure 6. To create a profile that determines how the systems are handled on importing, you now need to configure the service detectors for each requisition. To do this, you need to specify which service detectors to use in Edit under Requisition (1).

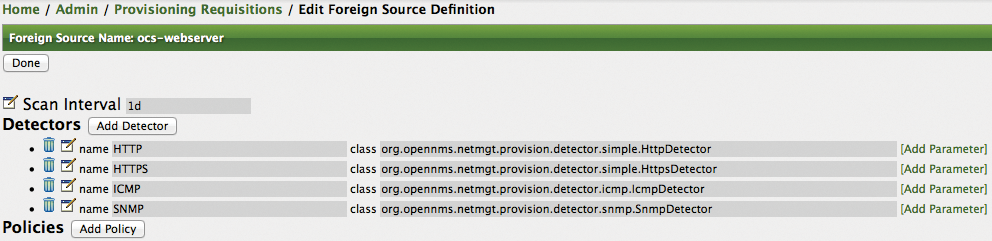

When a requisition is created, all available service detectors are assigned. Thus, it's useful to select only the service detectors that are actually meaningful for mail, web, and database servers. Figure 7 shows that the configuration for web servers keeps only the ICMP, HTTP, HTTPS, and SNMP service detectors and deletes all the others. The group of mail and database servers keeps service detectors, such as SMTP, IMAP, POP3, and JDBC.

With this configuration, OpenNMS checks during the import from OCS whether HTTP or HTTPS, for example, is reachable over the network on the server to be imported. If so, the services are automatically assigned to the node and integrated into the monitoring setup.

As seen in Figure 6, you can also use the Foreign Source Definition Edit (2) to define policies for a requisition. You can decide how to handle the performance data import or whether to apply rule-based categorization of the systems. However, this step is not relevant to the present example. Further information on policies can be found on the OpenNMS wiki in the Provisioning Users Guide [9].

Notifications

So far, you have seen how to set up automatic synchronization between OCS and OpenNMS. OCS can control which servers are to be imported automatically into OpenNMS. OpenNMS uses requisitions to apply a monitoring profile consisting of service detectors for web, mail, or database servers. You also need some notifications for the NOC and the development team. To be able to send mail, OpenNMS needs to communicate with a mail server. The basic configuration for sending mail is described in detail on the OpenNMS wiki in the Notification Tutorial section [10].

When importing, we already stipulated that the OCS Environment attribute should be assigned as a surveillance category on the OpenNMS nodes. You can now use this surveillance category as a notification filter.

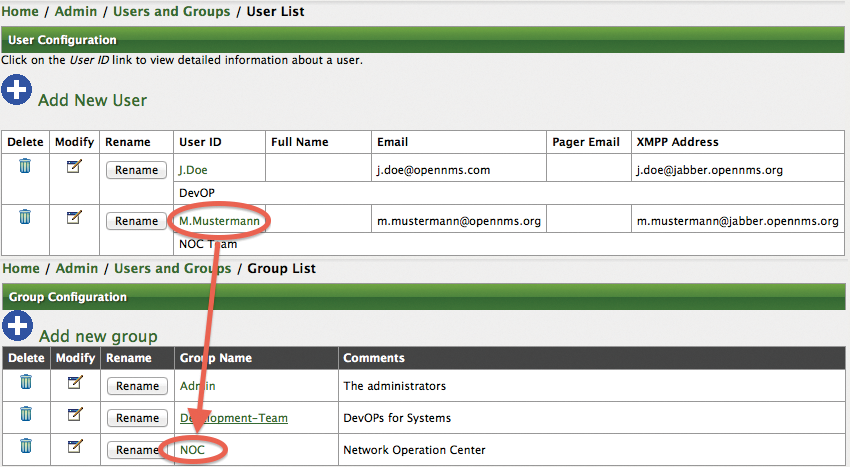

The two teams – development and NOC – are mapped via groups in OpenNMS. Appropriate OpenNMS users from the teams are assigned to each of the two groups. The contact information – including the OpenNMS user's email address – can be used for notification. Setting up OpenNMS users and groups is shown in Figure 8. You can do this in the Admin | Configure Users, Groups and On-Call Roles administration section of the web interface.

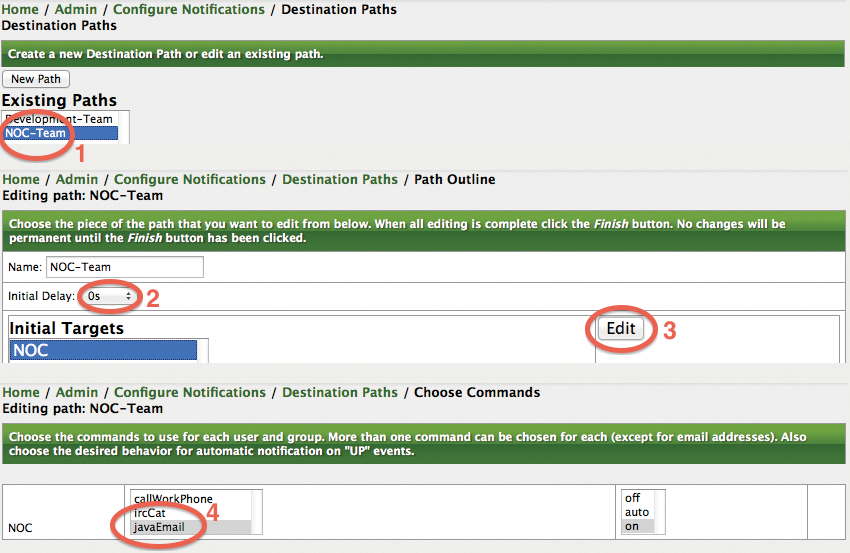

The next step creates two paths for the notification, which is done in the Admin | Configure Notifications | Configure Destination Paths administration section of the web interface. We generated two paths here, as shown in Figure 9(1). The newly created path is then edited, and the NOC user group is selected as its target. The Initial Delay (2) setting determines how long to wait after a failure before OpenNMS notifies the group. When editing the path (3), make sure you select the value javaEmail (4) for the notification command.

In the last step, the notification is configured. Any error generates an event in OpenNMS. Events are identified by a Unique Event Identifier (UEI). In this example, the administrator configures a notification for a nodeLostService event. This event is generated when, for example, a server is still reachable on the network using ICMP, but a service like HTTP is unresponsive.

To this end, you need to edit the notification for nodeLostService in the Admin | Configure Notifications | Configure Event Notifications administration section of the main menu. You can then see the event identifier (UEI) for which the notification was created. To confirm the pre-selection, press Next; you are then prompted to specify the systems and services to which the notification applies.

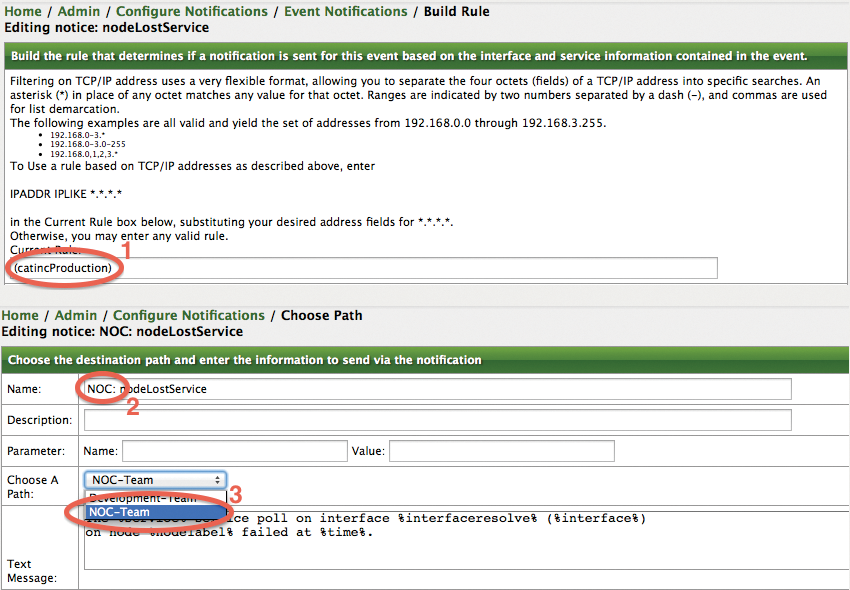

Figure 10(1) shows which filter you should enter for the production environment. The rule shown here, catincProduction, uses the catinc prefix. This prefix stands for "category include" and is equivalent to categoryName == 'Production'. Note that spaces and non-standard characters should be avoided in surveillance category names.

Filters and rule evaluations can cause problems in some places. The Validate rule results selection lists the systems to which the filter rule applies. If you select Skip validation results instead, the wizard continues directly.
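The catinc shorthand can be read as "strip the prefix and test whether the node carries a surveillance category with that name." The following Python sketch illustrates this reading with made-up helper names and a hypothetical node; it is not the OpenNMS filter engine and handles only a single catinc term.

```python
PREFIX = "catinc"


def category_from_rule(rule):
    """Extract the category name from a rule such as 'catincProduction'."""
    if not rule.startswith(PREFIX) or len(rule) == len(PREFIX):
        raise ValueError(f"not a catinc rule: {rule!r}")
    return rule[len(PREFIX):]


def rule_matches(rule, node_categories):
    """True if the node belongs to the category named in the rule."""
    return category_from_rule(rule) in node_categories


# Hypothetical node with two surveillance categories.
web01_categories = {"Production", "Webserver"}

print(category_from_rule("catincProduction"))              # Production
print(rule_matches("catincProduction", web01_categories))  # True
print(rule_matches("catincStage", web01_categories))       # False
```

Because the category name follows the prefix without a separator, names containing spaces or special characters are difficult to express, which is one reason to keep category names simple.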

Figure 10(2) shows that the designation of nodeLostService has changed to NOC:nodeLostService. You can thus see in the user interface that this notification is intended for the NOC team. Under Choose A Path, you then select the previously created notification path, NOC-Team.

If so desired, the actual notification text can be changed here. The text field can contain variables such as %nodelabel%. When a fault occurs, these variables are replaced with the values for the affected system.

For safety's sake, you should check that the notification status is set to On below Admin | Notification Status. You can also disable individual notifications. For this reason, you will want to enable NOC:nodeLostService below Admin | Configure Notifications | Configure Event Notifications.

To notify the development team, a second notification is created for the nodeLostService event based on the same pattern. Following the approach shown in Figure 10, you enter only catincStage as the filter rule. For the notification itself, you can use a name of Dev:nodeLostService. Finally, you need to set the target path for notifications to Development-Team.
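Taken together, the two notifications act as a small routing table from surveillance category to destination path. The Python sketch below models only that decision, using the notification and path names from this example; the data structure and function are hypothetical and do not reflect how OpenNMS stores its notification configuration.

```python
# One entry per notification configured for the nodeLostService event.
NOTIFICATIONS = [
    {"name": "NOC:nodeLostService", "category": "Production", "path": "NOC-Team"},
    {"name": "Dev:nodeLostService", "category": "Stage", "path": "Development-Team"},
]


def destination_paths(node_categories):
    """Return the destination paths notified when a node in these categories loses a service."""
    return [
        entry["path"]
        for entry in NOTIFICATIONS
        if entry["category"] in node_categories
    ]


print(destination_paths({"Production"}))  # ['NOC-Team']
print(destination_paths({"Stage"}))       # ['Development-Team']
print(destination_paths(set()))           # []
```

A node without either category, such as one from the development environment that was never imported with a category, triggers neither notification.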

Conclusions

The interaction of the OCS and OpenNMS tools shows how free applications benefit from open interfaces. Beyond the example described here, you could conceivably set the OCS attributes during the installation of the OCS agents, when rolling out the systems involved with Puppet [11] or Chef [12].