Set up and operate security monitoring throughout the enterprise

Seeing Eye

Every day, IT operations generate myriad data in which much security-relevant information is hidden, yet it is impossible to extract anything meaningful from this flood of data manually. Security Information and Event Management (SIEM) systems are therefore designed to give administrators better insight into the IT security status across an organization. This can only work if the people responsible for IT observe several important basic rules when designing the SIEM system and the sensor architecture. In this article, we introduce readers to the fundamental points to be considered when choosing a SIEM system and designing its interaction with data sources and downstream systems.

Well-organized monitoring does not just cover classic indicators such as availability, use levels, and service and system response times; it also reveals the current status of IT security. An important precondition is that security-relevant logfile entries from applications and dedicated security components, such as Intrusion Detection Systems (IDSs), are centrally collated, correlated, and holistically evaluated. SIEM systems specialize in performing this task, and several open source and commercial tools have established themselves in this field.

Important Selection Criteria

As with other monitoring tools, functionality, scalability, and cost are the three obvious criteria for selecting a SIEM product. In terms of functionality, the products differ mainly in features whose usefulness and necessity you should investigate in the context of your own application scenario. Unfortunately, this rule also applies to missing features: For example, pervasive support for IPv6 is still rare, even as 2015 draws to a close.

Scalability is typically measured as the number of security messages per second that the SIEM can field and process. Some open source and community editions have been deliberately reduced to a two-digit number of events per second (EPS) and are thus only suitable for smaller environments or for very selective deployment. For commercial products, which receive net flows from routers, six-digit EPS counts are not unknown, and they are often used as the basis for calculating license costs.

SIEM Architecture

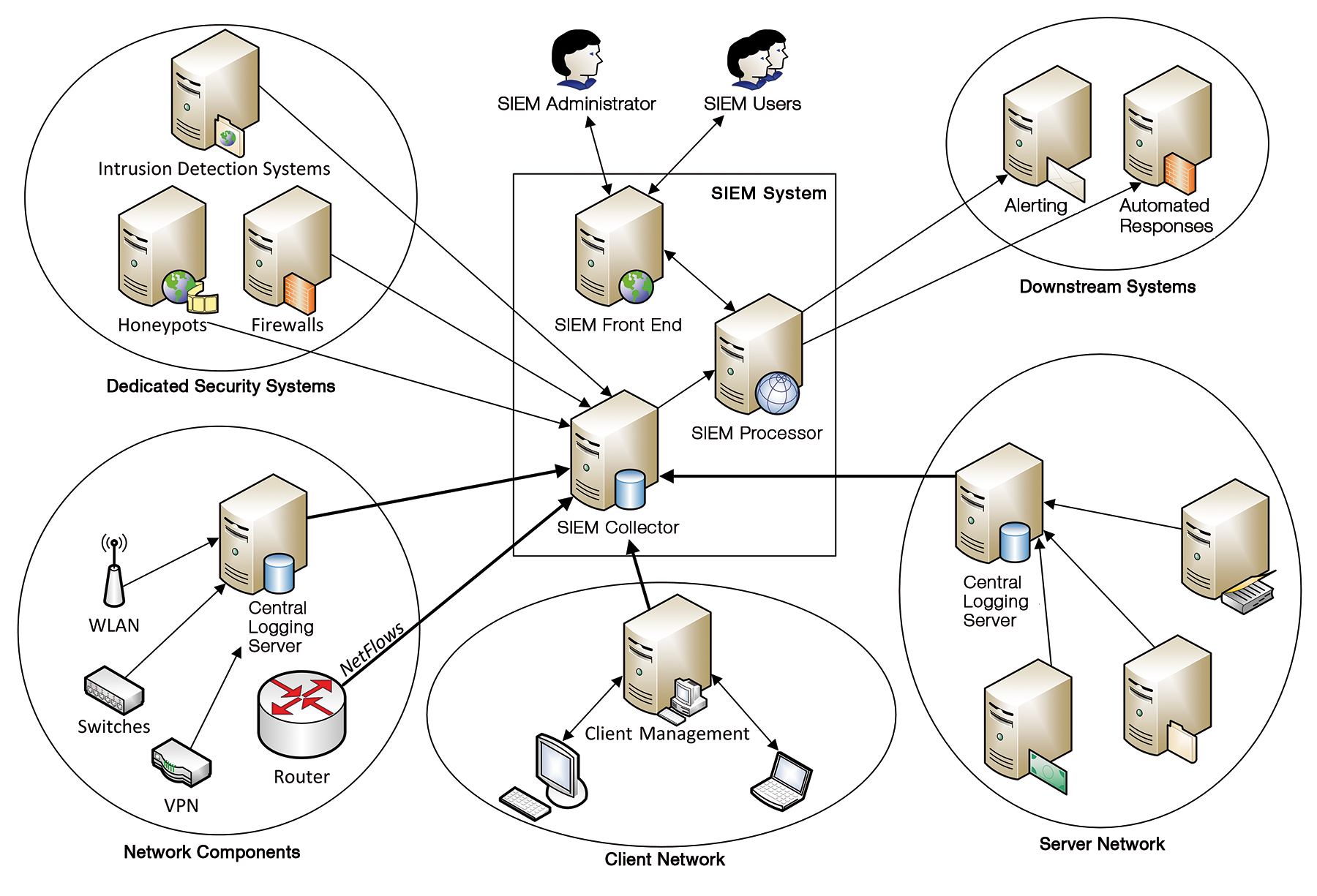

Figure 1 shows the typical architecture of a SIEM system: It uses SIEM collectors to integrate a variety of data sources (e.g., servers, network components, management systems, firewalls, and IDSs) and converts their security messages into a uniform, SIEM-internal format. SIEM processors correlate these messages across all systems and evaluate them based on rules.
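
The conversion step performed by the collectors can be sketched as follows. This is only a minimal illustration: the `NormalizedEvent` fields, the two input formats, and the severity mapping are all assumptions made for this example and do not reflect the internal format of any particular SIEM product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class NormalizedEvent:
    """Uniform internal event format (field names are assumptions)."""
    timestamp: datetime
    source_ip: str
    event_type: str
    severity: int      # 1 (low) to 10 (high); hypothetical scale
    data_source: str


def from_firewall(line: str) -> NormalizedEvent:
    # Hypothetical firewall log line: "<epoch> DROP src=<ip> dst=<ip>"
    epoch, _action, src, _dst = line.split()
    return NormalizedEvent(
        timestamp=datetime.fromtimestamp(int(epoch), tz=timezone.utc),
        source_ip=src.split("=", 1)[1],
        event_type="firewall_drop",
        severity=3,
        data_source="firewall",
    )


def from_ids(alert: dict) -> NormalizedEvent:
    # Hypothetical IDS alert with priority 1 (highest) to 3 (lowest)
    return NormalizedEvent(
        timestamp=datetime.fromisoformat(alert["ts"]),
        source_ip=alert["attacker"],
        event_type=alert["signature"],
        severity={1: 9, 2: 6, 3: 3}[alert["priority"]],
        data_source="ids",
    )
```

Once both sources deliver the same structure, a processor can correlate on shared fields such as `source_ip` without knowing where an event originated.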

Like most state-of-the-art products, the SIEM front end is web-based; administrators use it to configure the system and evaluate identified security issues interactively. Most SIEM systems manage these in a kind of ticket system, thus supporting drill-down views of the triggering messages from the data sources. Downstream systems can be actuated for alerts and automated responses. A scripting interface or an API, which will be more or less powerful depending on the product, lets administrators manage the SIEM data sets with their own code to complement interactive work with the SIEM system.

Tasks of SIEM Basic Configuration

Before a SIEM system can evaluate messages in context, some basic configuration is required. This includes, for example, populating the SIEM's internal asset management data, ideally by connecting the SIEM to an existing DCIM solution or configuration management system. The criticality of the individual systems and basic properties, such as the operating system and the position on the monitored network, can be derived from this. When an IDS reports an attack that exploits a current Windows vulnerability, the SIEM system can respond differently if the target happens to be a critical Windows server on the production network than if it were a Linux server in the test lab.
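
How asset data can influence the response to one and the same IDS message might look like the following sketch. The `Asset` fields, the criticality scale, and the weighting are assumptions chosen for illustration; real products use their own asset models and scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    hostname: str
    os: str            # e.g., "windows" or "linux"
    zone: str          # e.g., "production" or "test-lab"
    criticality: int   # 1 (low) to 5 (high); hypothetical scale


def alert_priority(asset: Asset, affected_os: str, base_severity: int) -> int:
    """Weight an IDS severity by asset context; 0 means informational only."""
    if asset.os != affected_os:
        return 0  # the exploit does not apply to this target
    priority = base_severity * asset.criticality
    if asset.zone == "production":
        priority *= 2  # assumed extra weight for production systems
    return priority


windows_prod = Asset("erp01", "windows", "production", criticality=5)
linux_lab = Asset("lab07", "linux", "test-lab", criticality=1)
print(alert_priority(windows_prod, "windows", 3))  # high priority
print(alert_priority(linux_lab, "windows", 3))     # informational
```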

The overhead for creating this basic information is high, especially if the data cannot easily be imported from existing systems. As an alternative, many products include an automatic discovery function, but you should only use this if you can be sure of avoiding inconsistencies with other data sets. Inconsistencies can cause more overhead in production than you would save compared with some other kind of import or manual entry.

You will also want to define up front which users can work with the SIEM system and what authorizations they will have. Connections to central authentication instances, such as an LDAP server or Active Directory, are part of the standard feature set. Because drill-down options can reveal sensitive details from logfiles and IP packets, the need-to-know principle will drive many individual authorizations.

Many SIEM front ends give the user neat-looking dashboards with overviews and statistics, which are also likely to interest managers and decision makers. However, you will not want to confuse this user group with in-depth technical details and processing options. Because major retroactive changes to roles and authorizations can have unwanted side effects, it makes sense to experiment with the intended settings in a trial setup.

Using the Right Data Sources

Connecting the actual SIEM data sources serves two related goals that build on one another. First, security messages from as many relevant systems as possible should be collected at a central point. This reduces the overhead on each individual system, for example, when searching through logfiles for security-relevant entries. Second, cross-system correlation should make it possible to detect and understand more complex attack patterns, whose individual components could be lost in the background noise on the individual systems.

You have various options for transferring the data from the individual sources to the SIEM system – from configuring the SIEM system as a central logging server, through installing SIEM agent software on the source systems, to regularly polling entire logfiles with some help from secure copy or SFTP. Each SIEM system comes with support for widespread logfile formats out of the box. Logfile entries with any other formatting will necessitate an additional parser configuration, which can be created using a simple rule-based language or regular expressions, depending on the SIEM vendor.
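
A parser for a log format that the SIEM does not know out of the box could be based on a regular expression along the following lines. The log line layout and the field names are invented for this sketch; the actual syntax for registering such a parser depends on the SIEM vendor.

```python
import re
from typing import Optional

# Hypothetical application log line:
#   2015-11-02 10:15:00 LOGIN_FAILED user=alice ip=10.0.0.5
LINE_PATTERN = re.compile(
    r"(?P<date>\d{4}-\d{2}-\d{2}) (?P<time>\d{2}:\d{2}:\d{2}) "
    r"(?P<event>[A-Z_]+) user=(?P<user>\S+) ip=(?P<ip>\S+)"
)


def parse_line(line: str) -> Optional[dict]:
    """Return the named fields of a log line, or None if it does not match."""
    match = LINE_PATTERN.fullmatch(line.strip())
    return match.groupdict() if match else None
```

Returning `None` for lines that do not match lets the caller count or log unparsed entries instead of silently dropping them.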

Data sources are typically cascaded hierarchically in the connection to the SIEM system: For example, if you already have a centralized logging server that several servers and services use, only the logging server is connected to the SIEM system rather than each individual server. If, for example, you need to evaluate malware found on workplace PCs and other clients, it makes more sense to connect the central antivirus management server to the SIEM system than to connect each individual client.

Independent of the limits set for evaluating the data volume, when you introduce a SIEM solution you need to consider which data sources actually make sense. As a general rule, you should only integrate sources whose messages can be evaluated automatically through analysis and correlation rules or that are regularly of interest when you check and process security incidents manually. For SIEM products by vendors of network equipment in particular, the typical feature set includes the ability to feed in net flow information from IP routers in addition to various logfiles from servers, network components, and security components.

Some vendors combine their SIEM systems with deep packet inspection-capable IDSs and can thus handle Layer 7 evaluations. The benefit here is the integration of various security functions in a common user interface. For example, if you notice – from communication with a known command-and-control server on the Internet – that a system is compromised and infected with malware, you can quickly identify any other unusual communication targets the machine has had.

In addition to more passive actions like receiving or polling security messages from the data sources, many SIEM systems also include active mechanisms for information acquisition – or at least you can retrofit these as modules. The spectrum ranges from relatively simple port scanners like Nmap up to complete vulnerability management solutions in which the monitored systems can be checked, periodically or at the push of a button, by scanning with tools such as OpenVAS. The findings you gain here will not just help with investigating reported security incidents; they are also stored in the SIEM's internal asset database and can be referenced for analyses and correlations later on – in particular for prioritizing the assessment of alerts.

A Neat GUI Is Not Everything

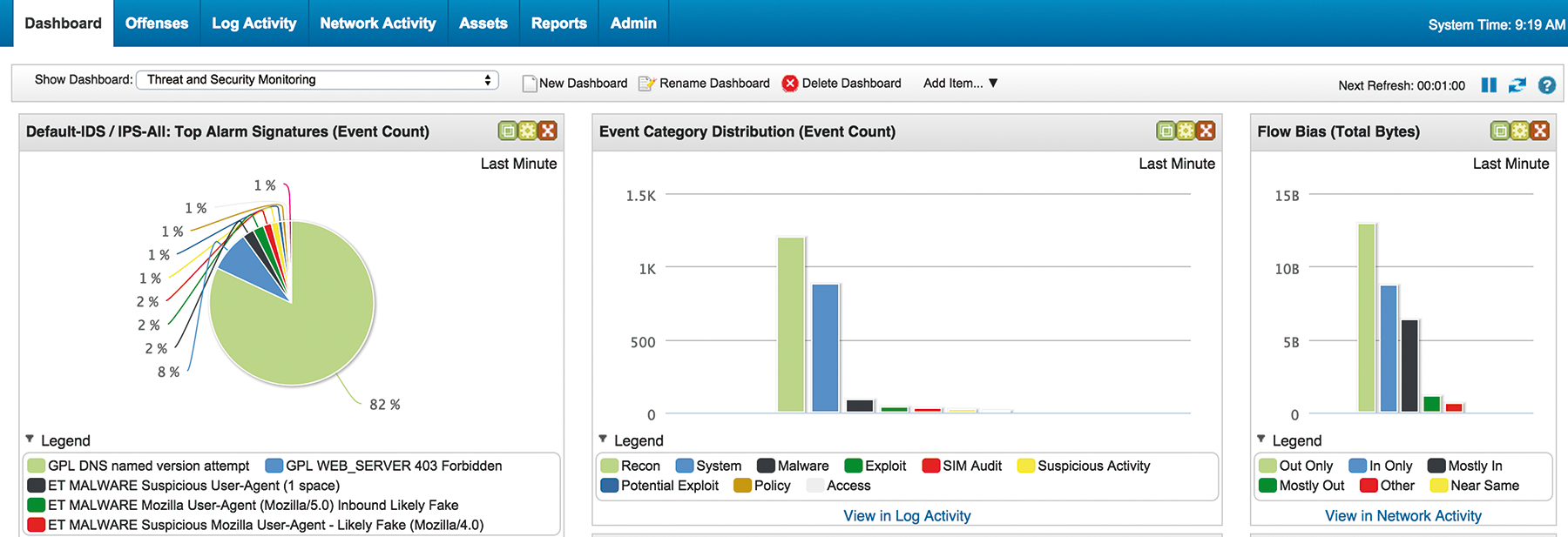

The application focus of today's SIEM products is interactive evaluation of identified security problems. SIEM products differ greatly in terms of their control philosophy and the technologies used to design the user interface (Figure 2). Intuitive and efficient handling of the SIEM GUI is thus typically an important acceptance criterion for a SIEM product: Unresponsive web interfaces or the lack of support for right-clicking can quickly become productivity killers.

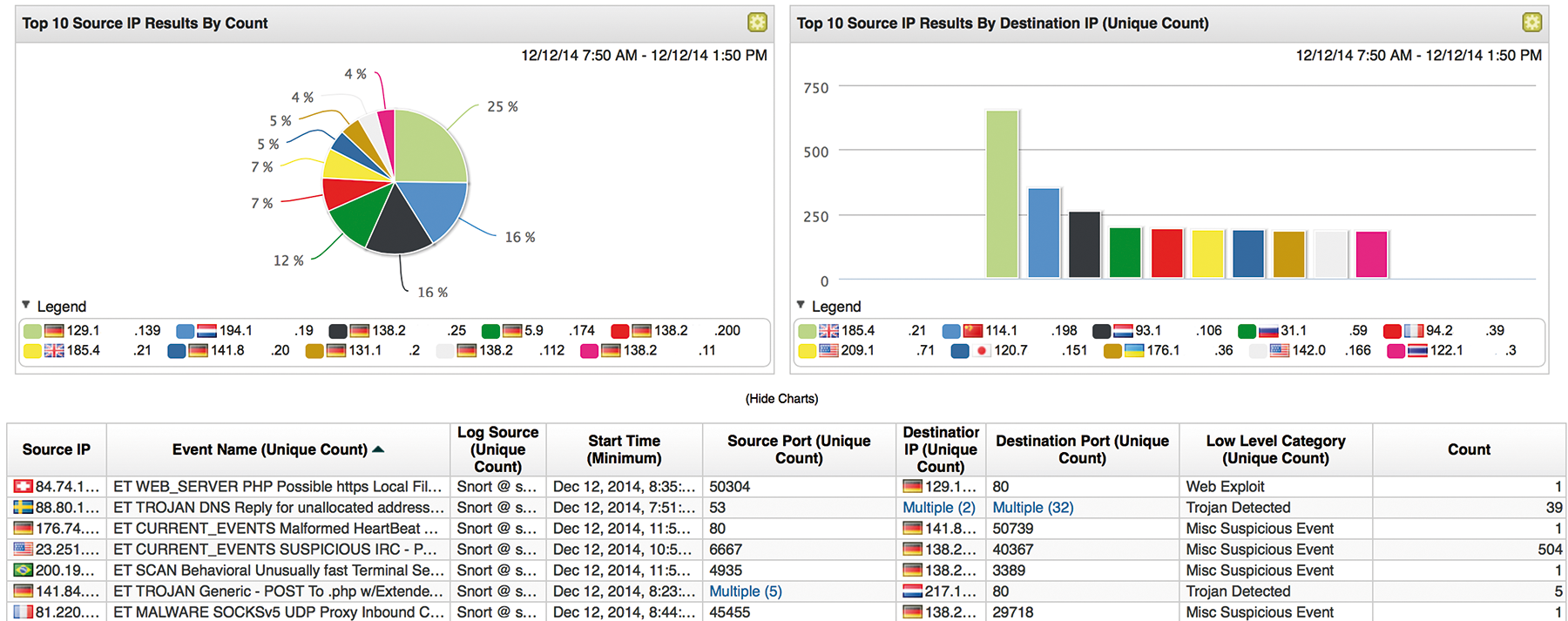

In addition to processing security incidents, a main aspect of daily work with the SIEM system is ongoing optimization of the rulesets, with a view to avoiding unnecessary messages and false positives and thus allowing the security team to focus on the genuinely important cases (Figure 3). This means that you want in-depth information, the ability to find similar cases, and the ability to trace precisely why the message was thrown at all, based on analysis and correlation rules, in the SIEM GUI. In acute cases, in which you can monitor ongoing attacks, the latency with which new messages can be viewed in the SIEM GUI will also be critical. More or less real-time-capable SIEM systems have advantages over products that only process incoming data in batches at predefined intervals.

Automation Simplifies the Daily Grind

Administrators often lack the time to check the SIEM GUI regularly and proactively to see whether important new messages have arrived. SIEM systems thus typically provide a configurable escalation system that, for example, sends regular email to remind the administrator that important messages have not been processed. Another important feature is the ability to define exception rules to specify which types of incidents or affected systems should not generate alarms, thereby avoiding a flood of email.
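
Exception rules of this kind can be thought of as a filter that runs before alerting. The following sketch assumes a rule consists of an incident type and a network in CIDR notation; both the `ExceptionRule` structure and the example subnet are hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class ExceptionRule:
    event_type: str  # "*" matches any incident type
    network: str     # affected systems in CIDR notation

    def matches(self, event_type: str, ip: str) -> bool:
        type_matches = self.event_type in ("*", event_type)
        in_network = ipaddress.ip_address(ip) in ipaddress.ip_network(self.network)
        return type_matches and in_network


def should_alert(event_type: str, ip: str, exceptions: list) -> bool:
    """Alert unless at least one exception rule covers the incident."""
    return not any(rule.matches(event_type, ip) for rule in exceptions)


# Assumed example: port scans from the vulnerability scanner subnet are expected
exceptions = [ExceptionRule("port_scan", "192.168.50.0/24")]
```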

Because the response to many security incidents will be very similar, it makes sense to automate at least the non-critical ones. Although some SIEM products (e.g., various monitoring systems) primarily serve as data sinks, others offer the ability to trigger arbitrary scripts and programs to which the individual parameters of the incident are passed or made accessible via a programming interface.

For example, if a PC on your network exhibits characteristic malware communication behavior, that PC could be blocked – in addition to alerting the responsible administrator – by a script at the Internet uplink firewall or directly at the switch port of the office in question, thus avoiding more serious damage. As with any automated response, you must make sure that an attacker cannot misuse this response to cause even more damage (e.g., through a denial-of-service attack).
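
One way to guard an automated block against misuse is to check a few safety conditions before the script touches the firewall or switch. The sketch below assumes a list of protected networks that must never be blocked automatically and a cap on automated blocks per hour; both values are illustrative, not recommendations.

```python
import ipaddress

# Assumed safeguards; adapt both to your own environment
PROTECTED_NETWORKS = [ipaddress.ip_network("10.0.0.0/28")]  # e.g., core servers
MAX_BLOCKS_PER_HOUR = 5


def may_block(ip: str, blocks_last_hour: int) -> bool:
    """Decide whether an automated block is allowed for this address."""
    address = ipaddress.ip_address(ip)
    if any(address in network for network in PROTECTED_NETWORKS):
        return False  # never cut off critical systems without human review
    # A burst of block requests may itself be an attack on the automation
    return blocks_last_hour < MAX_BLOCKS_PER_HOUR
```

If `may_block` returns `False`, the incident would fall back to manual handling by the responsible administrator.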

Creating Meaningful Rulesets

In addition to precisely defining your own infrastructure and asset management, you will need either to modify the typically large number of correlation rules provided by the SIEM solution's vendor to suit your own environment and specific scenarios or to create your own enterprise-specific rulesets (Figure 4). Put simply, correlation rules describe the conditions that a reported incident must meet as well as the automatically triggered response of the SIEM solution if all conditions of a rule are met.

A very simple condition could be the number of a specific incident type, such as communication with a command-and-control server on a known botnet, identified by an IDS and forwarded to the SIEM, from the same IP source address within a specific period of time.

If the incident count exceeds the threshold defined in the correlation rules, the response described in the same rule is triggered – for example, notifying the responsible administrator by email, calling a script, or generating a new event that is, in turn, passed to the solution for additional correlation steps. Beyond simple counters, complex and nested conditions can be grouped to create a rule (Figure 5).
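
The simple counter condition described above can be sketched as a sliding-window rule. The class name, the event type string, and the parameters are assumptions for this example; timestamps are plain seconds and are expected to arrive in ascending order.

```python
from collections import defaultdict, deque


class ThresholdRule:
    """Fires when one source IP exceeds a count of events within a time window."""

    def __init__(self, event_type: str, threshold: int, window_seconds: float):
        self.event_type = event_type
        self.threshold = threshold
        self.window_seconds = window_seconds
        self._seen = defaultdict(deque)  # source IP -> timestamps inside the window

    def feed(self, event_type: str, source_ip: str, timestamp: float) -> bool:
        """Record one event; return True if the rule's response should trigger."""
        if event_type != self.event_type:
            return False
        times = self._seen[source_ip]
        times.append(timestamp)
        # Discard events that have dropped out of the time window
        while timestamp - times[0] > self.window_seconds:
            times.popleft()
        return len(times) > self.threshold


rule = ThresholdRule("c2_communication", threshold=2, window_seconds=60)
for second in (0, 10, 20):
    if rule.feed("c2_communication", "10.0.0.5", second):
        print("threshold exceeded, trigger response")
```

The response itself (email, script, or a new event for further correlation) would be attached where the sketch prints a message.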

To avoid the risk of an email flood, the threshold parameter can be used to limit the number of notification emails sent per minute for each target IP address.

In practice, signature-based detection methods that evaluate the content transferred between two systems on the fly have several weaknesses. The communication between a malware-infected IT system and the control server will typically be encrypted. The payload transferred here is thus not visible as clear text, and the security-relevant event is not easy to identify. Net flow-based correlation, as offered by one SIEM solution, is an alternative detection option: It alerts the system operator when a threshold defined in the SIEM is exceeded or when communication deviates from the typical behavior that the SIEM solution has learned on its own.
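
The article does not say how a SIEM learns typical communication behavior. As a deliberately simple stand-in, the following sketch compares the current flow volume of a host with the mean and standard deviation of its own history; the function name, the factor `k`, and the minimum spread are assumptions, and real products are likely to use more elaborate models.

```python
from statistics import mean, stdev


def is_anomalous(history: list, current: float, k: float = 3.0) -> bool:
    """Flag a value that deviates more than k standard deviations from the baseline."""
    if len(history) < 2:
        return False  # not enough data to learn a baseline yet
    baseline = mean(history)
    spread = max(stdev(history), 1.0)  # assumed floor so constant histories still work
    return abs(current - baseline) > k * spread


# Hypothetical flows per hour observed for one host
flows_per_hour = [100, 110, 90, 105, 95]
print(is_anomalous(flows_per_hour, 500))
print(is_anomalous(flows_per_hour, 105))
```

Because only flow metadata is evaluated, this kind of check still works when the payload is encrypted.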

Alerting can also occur in multiple stages. For example, the first occurrence of a specific anomaly only generates another event, although this can already be correlated. If the same anomaly occurs multiple times within a specific period or in combination with other correlated events, then it triggers an email or text message to the system owner. Different responses can be defined depending on the time of day by evaluating the point of time at which the incident occurred. For example, the system administrator can be notified by email during office hours, whereas nighttime communication can be stopped automatically by a script-controlled reconfiguration of the switch port or creation of specific firewall rules.
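
The staged, time-dependent response could be expressed as a small decision function. The office hours, the escalation count, and the response names are assumptions for this sketch; in a real SIEM they would be part of the correlation rule configuration.

```python
from datetime import datetime, time

# Assumed office hours
OFFICE_START, OFFICE_END = time(8, 0), time(18, 0)


def choose_response(occurrences: int, occurred_at: datetime,
                    escalate_at: int = 3) -> str:
    """Pick a response based on how often and when an anomaly occurred."""
    if occurrences < escalate_at:
        return "emit_event"  # stage 1: only generate an event for later correlation
    if OFFICE_START <= occurred_at.time() < OFFICE_END:
        return "email_admin"  # stage 2 during office hours
    return "block_switch_port"  # stage 2 at night: automated containment
```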

Precisely Planning Updates

In addition to updating all connected data sources, it is important to update the SIEM solution regularly – particularly for security updates or new software versions. In addition to providing bug fixes and resolving existing vulnerabilities, the changes will typically focus on processing new or modified log formats or supporting a more efficient option for integrating data sources. Before you upgrade your SIEM solution, you will want to think through your approach. Backing up all events and the current SIEM configuration before the update is simply best practice. Because the upgrade can take some time, you will want to ensure that data sources can briefly cache incidents that occur while the SIEM solution is temporarily unavailable.
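
Caching on the data source side can be pictured as a bounded buffer in front of the transport to the SIEM. In this sketch, `send` is a hypothetical callable that returns `True` when the SIEM accepted an event; the buffer size is an arbitrary assumption, and the oldest events are discarded once it is full.

```python
from collections import deque
from typing import Callable


class BufferedForwarder:
    """Keeps events in memory while the SIEM is unreachable and replays them later."""

    def __init__(self, send: Callable[[str], bool], max_buffered: int = 10_000):
        self._send = send
        self._buffer = deque(maxlen=max_buffered)  # oldest entries drop when full

    def forward(self, event: str) -> None:
        self._buffer.append(event)
        self.flush()

    def flush(self) -> int:
        """Try to deliver buffered events in order; return how many were sent."""
        sent = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # SIEM still unavailable; keep the event for the next attempt
            self._buffer.popleft()
            sent += 1
        return sent
```

An in-memory buffer does not survive a restart of the data source, so a real agent would more likely spool to disk; the order-preserving replay logic stays the same.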

You will need a robust response to interruptions caused by updates but also to memory leaks existing in software or buffer overflows caused by programming errors. With this in mind, you should establish integrated system monitoring mechanisms and, above all, remote system monitoring to keep track of the current system status and to be able to take appropriate actions in good time.

Conclusions

The importance of SIEM systems for the safe operation of large, heterogeneous, and distributed infrastructures is increasing. With their ability to correlate, prepare, and automatically process security messages from various services, systems, and components, SIEM systems are a powerful tool. However, because of the complexity involved, introducing a SIEM system and going live is not something to be taken lightly. On the contrary, you will need to invest time and care in choosing a product, planning the basic configuration, integrating data sources, and configuring analysis rules and automated mechanisms to leverage the full potential of your SIEM.

Although many products are maturing, the downside is that the ability to control downstream systems still needs to be scripted in-house to a great extent. It is hoped that the products will continue to develop in this respect in the future.