The role of the Linux Storage Stack

Stacking Up

In the context of the storage stack, the end users are typically ordinary userspace applications. The first component that Linux programs interact with when processing data is the virtual filesystem (VFS). The VFS is what makes it possible to invoke the same system calls for different filesystems on different media. Thanks to the VFS, for example, the cp command can copy a file from an ext4 filesystem to an ext3 filesystem transparently for the user.
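As an illustration, the following Python sketch copies a file using only the generic open, read, and write system calls (through Python's thin `os` wrappers). Nothing in it depends on the filesystem type, because the VFS routes each call to whichever filesystem holds the file. The function name `copy_file` and the 64 KiB buffer size are our own choices for this sketch; it is not how cp itself is implemented.

```python
import os

def copy_file(src: str, dst: str, bufsize: int = 64 * 1024) -> int:
    """Copy src to dst with plain open/read/write calls; returns bytes copied.

    The same calls work whether src and dst live on ext4, ext3, tmpfs, or
    any other filesystem, because the VFS dispatches them.
    """
    copied = 0
    in_fd = os.open(src, os.O_RDONLY)
    try:
        out_fd = os.open(dst, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o644)
        try:
            while True:
                chunk = os.read(in_fd, bufsize)
                if not chunk:  # end of file reached
                    break
                # os.write may write fewer bytes than requested, so loop
                view = memoryview(chunk)
                while view:
                    written = os.write(out_fd, view)
                    view = view[written:]
                copied += len(chunk)
        finally:
            os.close(out_fd)
    finally:
        os.close(in_fd)
    return copied
```

A call such as `copy_file("/mnt/ext4/report.txt", "/mnt/ext3/report.txt")` (hypothetical mount points) would work unchanged across the two filesystem types.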

The variety of supported filesystems (block-based, network, pseudo-filesystems, and even Filesystems in Userspace, or FUSE) demonstrates the numerous possibilities that the VFS abstraction opens up. The aforementioned system calls are unified functions, such as open, read, and write, that behave the same no matter which filesystem lies underneath. The VFS abstracts the filesystem-specific operations, and caches such as the directory entry (dentry) cache speed up file access.
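The benefit of the dentry cache can be sketched with a toy model. Path resolution walks the path one component at a time. Each (parent inode, name) pair only has to be looked up in the filesystem once; after that, the answer comes from the cache. The class below and its `lookup_fn` callback are hypothetical illustrations, not kernel APIs. Using inode 2 as the root reflects the ext2/3/4 convention and is an assumption here.

```python
from typing import Callable, Dict, Tuple

class DentryCache:
    """Toy dentry cache mapping (parent inode, name) -> child inode.

    lookup_fn is a hypothetical stand-in for the filesystem's own (slow)
    directory lookup; it is not a kernel API.
    """

    def __init__(self, lookup_fn: Callable[[int, str], int]):
        self._lookup_fn = lookup_fn
        self._cache: Dict[Tuple[int, str], int] = {}
        self.misses = 0  # how often the filesystem itself had to be asked

    def resolve(self, path: str, root_ino: int = 2) -> int:
        """Walk the path component by component, consulting the cache first."""
        ino = root_ino  # assumption: inode 2 is the root, as on ext2/3/4
        for name in path.strip("/").split("/"):
            if not name:  # skip empty components (e.g. "/" or "a//b")
                continue
            key = (ino, name)
            if key not in self._cache:
                self.misses += 1
                self._cache[key] = self._lookup_fn(ino, name)
            ino = self._cache[key]
        return ino
```

Resolving `/home/alice/notes.txt` twice costs three filesystem lookups the first time and none the second time. The real dentry cache also records negative entries (names that do not exist), which this sketch omits.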

The next layer of the storage stack consists of the individual filesystem implementations. They provide the VFS with generic methods and translate them into specific calls for accessing the device. Each filesystem also performs its primary task: organizing data and metadata on the underlying storage medium. The Linux kernel additionally speeds up access to these media with a caching mechanism, the Linux page cache.
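How a generic call reaches a specific filesystem can be sketched as a method table plus a mount table. This is loosely modeled on the way the kernel's VFS calls filesystem methods through tables such as `struct file_operations`. The Python classes `FileOperations`, `MemFS`, and `VFS` are hypothetical simplifications, and `MemFS` merely stands in for real filesystems like ext4 or ext3.

```python
from abc import ABC, abstractmethod
from typing import Dict, Tuple

class FileOperations(ABC):
    """Generic methods every (toy) filesystem must provide to the VFS."""

    @abstractmethod
    def read(self, path: str) -> bytes: ...

    @abstractmethod
    def write(self, path: str, data: bytes) -> None: ...

class MemFS(FileOperations):
    """Hypothetical in-memory filesystem standing in for ext4, ext3, etc."""

    def __init__(self):
        self.files: Dict[str, bytes] = {}

    def read(self, path: str) -> bytes:
        return self.files[path]

    def write(self, path: str, data: bytes) -> None:
        self.files[path] = bytes(data)

class VFS:
    """Routes generic calls to the filesystem mounted at the longest matching prefix."""

    def __init__(self):
        self.mounts: Dict[str, FileOperations] = {}

    def mount(self, mountpoint: str, fs: FileOperations) -> None:
        self.mounts[mountpoint.rstrip("/") or "/"] = fs

    def _resolve(self, path: str) -> Tuple[FileOperations, str]:
        candidates = [m for m in self.mounts
                      if path == m or path.startswith(m.rstrip("/") + "/")]
        if not candidates:
            raise FileNotFoundError(path)
        mnt = max(candidates, key=len)  # the most specific mount point wins
        rel = path if mnt == "/" else path[len(mnt):]
        return self.mounts[mnt], "/" + rel.lstrip("/")

    def read(self, path: str) -> bytes:
        fs, rel = self._resolve(path)
        return fs.read(rel)

    def write(self, path: str, data: bytes) -> None:
        fs, rel = self._resolve(path)
        fs.write(rel, data)
```

Copying between two mounted filesystems then reduces to `vfs.write(dst, vfs.read(src))`. The caller never learns which implementation served each call.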

Block I/O

To manage I/O, the kernel uses flexible block I/O structures (BIOs) rather than pages. These structures represent the block I/O operations or requests that the kernel is currently executing (in-flight BIOs). This applies both to I/O through the page cache and to direct I/O (i.e., access that bypasses the page cache). The advantage of BIOs lies in their ability to handle the multiple segments involved in a single I/O operation.

A BIO consists of a list or vector of segments that point to different pages in memory. This allows pages to be mapped dynamically to sectors on the block device. BIOs come up again in the block layer, where they are grouped into requests before the kernel passes the I/O operations to the driver's dispatch queue.
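The following sketch models a BIO as a start sector plus a vector of page segments, loosely mirroring the kernel's `struct bio` and `struct bio_vec`. It also groups BIOs that are contiguous on disk into a single request. The classes, the 512-byte sector size, and the sort-then-back-merge strategy are simplifying assumptions. The real block layer's plugging and merging logic is considerably more involved.

```python
from dataclasses import dataclass, field
from typing import List

SECTOR_SIZE = 512  # assumption: 512-byte sectors

@dataclass
class BioVec:
    """One segment: a piece of a memory page (cf. the kernel's struct bio_vec)."""
    page: int    # hypothetical page frame number
    offset: int  # byte offset within that page
    length: int  # number of bytes in this segment

@dataclass
class Bio:
    """An in-flight block I/O: a start sector plus a vector of segments."""
    sector: int
    segments: List[BioVec]

    @property
    def nr_sectors(self) -> int:
        return sum(seg.length for seg in self.segments) // SECTOR_SIZE

@dataclass
class Request:
    """A request groups one or more BIOs that are contiguous on the device."""
    sector: int
    bios: List[Bio] = field(default_factory=list)

    @property
    def nr_sectors(self) -> int:
        return sum(bio.nr_sectors for bio in self.bios)

def group_into_requests(bios: List[Bio]) -> List[Request]:
    """Back-merge BIOs whose first sector directly follows the previous request."""
    requests: List[Request] = []
    for bio in sorted(bios, key=lambda b: b.sector):
        last = requests[-1] if requests else None
        if last is not None and last.sector + last.nr_sectors == bio.sector:
            last.bios.append(bio)
        else:
            requests.append(Request(sector=bio.sector, bios=[bio]))
    return requests
```

A BIO whose data is scattered across two different pages still describes one contiguous range of sectors. This is why a single BIO can carry several segments.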

Stacked Block Devices

An important component of the Linux Storage Stack sits in front of the block layer, where logical block devices are implemented. These devices behave like conventional block devices, but they also perform additional functions, such as layering logical devices on top of each other (i.e., stacked devices). The Logical Volume Manager (LVM) and software RAID (mdraid) are probably the best-known representatives. For example, they allow you to scale logical volumes beyond the limits of a single physical disk or to store data redundantly. Stacking is common when RAID and LVM are combined, for example, when LVM volumes are created on top of an mdraid array. As a result, an I/O request may pass through several layers before it reaches the block layer.
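The core idea of stacking is that each logical device remaps sectors and forwards I/O to the device beneath it. The sketch below stacks a linear concatenation (in the spirit of an LVM linear volume) on top of a RAID 1 style mirror. The `Disk`, `Mirror`, and `Linear` classes are hypothetical and share only a duck-typed read/write interface. They are not the mdraid or device mapper APIs.

```python
class Disk:
    """A physical device: a fixed number of sectors holding arbitrary values."""
    def __init__(self, name, nr_sectors):
        self.name, self.nr_sectors = name, nr_sectors
        self.data = {}

    def write(self, sector, value):
        if not 0 <= sector < self.nr_sectors:
            raise IndexError(f"{self.name}: sector {sector} out of range")
        self.data[sector] = value

    def read(self, sector):
        return self.data.get(sector)

class Mirror:
    """RAID 1 style: every write goes to all legs; reads come from the first leg."""
    def __init__(self, *legs):
        self.legs = legs
        self.nr_sectors = min(leg.nr_sectors for leg in legs)

    def write(self, sector, value):
        for leg in self.legs:
            leg.write(sector, value)

    def read(self, sector):
        return self.legs[0].read(sector)

class Linear:
    """LVM-linear style: concatenates devices into one larger address space."""
    def __init__(self, *devs):
        self.devs = devs
        self.nr_sectors = sum(dev.nr_sectors for dev in devs)

    def _map(self, sector):
        # Translate a logical sector into (underlying device, device sector)
        for dev in self.devs:
            if sector < dev.nr_sectors:
                return dev, sector
            sector -= dev.nr_sectors
        raise IndexError("sector beyond end of logical volume")

    def write(self, sector, value):
        dev, local = self._map(sector)
        dev.write(local, value)

    def read(self, sector):
        dev, local = self._map(sector)
        return dev.read(local)
```

Because `Mirror` and `Linear` expose the same interface as `Disk`, either one can sit underneath the other. This mirrors the way stacked block devices can be layered in any order.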

Block Layer

The block layer processes the BIOs described above and is responsible for forwarding application I/O requests to the storage devices. It provides applications with uniform interfaces for data access, whether the target is a hard disk, an SSD, a Fibre Channel (FC) SAN, or another block-based storage device. The block layer also provides all applications with a uniform access point to storage devices and their drivers. In this way, it conceals the complexity and diversity of storage devices from applications.

The block layer also ensures an equitable distribution of I/O access, appropriate error handling, statistics, and a scheduling system. The latter is particularly intended to improve performance and to protect users from performance disadvantages caused by poorly implemented applications or drivers.

Depending on the respective device drivers and configuration, the data can take three paths in or around the block layer:

- Via one of the three traditional I/O schedulers: NOOP, Deadline, or CFQ. A fourth traditional I/O scheduler, the anticipatory scheduler (AS), was similar to CFQ and was therefore removed in kernel version 2.6.33.

- Via the Linux multiqueue block I/O queuing mechanism (blk-mq). Introduced with Linux kernel 3.13, blk-mq is relatively new. The kernel developers designed it mainly for high-performance flash memory, such as PCIe SSDs, to break through the IOPS (I/O operations per second) limitations of the traditional I/O schedulers.

- Bypassing the block layer and going directly to BIO-based drivers. Before blk-mq existed, manufacturers of PCIe SSDs developed these elaborate drivers, which have to implement many of the block layer's tasks themselves.

I/O Scheduler

The traditional I/O schedulers NOOP, Deadline, and CFQ are certainly the most important schedulers in current Linux distributions. Even recent distributions such as Debian 8 still ship kernel version 3.16. That kernel supports blk-mq only for one virtual driver (virtio-blk, e.g., for KVM guests), one "real" driver (mtip32xx for the Micron RealSSD PCIe), and a test driver (null_blk).
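To check which scheduler a given device uses, you can read the sysfs file `/sys/block/<dev>/queue/scheduler`. It lists the available schedulers and marks the active one in square brackets, e.g., `noop deadline [cfq]`. As root, you can switch schedulers by writing a name to the same file. The helper functions below are our own hypothetical sketch for parsing that line, and `current_scheduler` only works on a Linux system that actually has the named device.

```python
from pathlib import Path
from typing import List, Optional, Tuple

def parse_scheduler_line(line: str) -> Tuple[Optional[str], List[str]]:
    """Split the contents of /sys/block/<dev>/queue/scheduler.

    Returns (active scheduler or None if none is bracketed, all listed names).
    """
    available: List[str] = []
    active: Optional[str] = None
    for token in line.split():
        if token.startswith("[") and token.endswith("]"):
            token = token[1:-1]  # strip the brackets marking the active one
            active = token
        available.append(token)
    return active, available

def current_scheduler(dev: str) -> Tuple[Optional[str], List[str]]:
    """Read the scheduler file for a block device such as 'sda' (Linux only)."""
    path = Path("/sys/block") / dev / "queue" / "scheduler"
    return parse_scheduler_line(path.read_text())
```

On a system whose disk uses CFQ, `current_scheduler("sda")` would return `("cfq", ["noop", "deadline", "cfq"])`.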

Traditional I/O schedulers work with the following two queues:

- Request queue: This queue collects the requests issued by processes; the scheduler may merge them and sorts them according to its scheduling algorithm. The schedulers take different approaches to handling individual requests and passing them to the device driver.

- Dispatch queue: This queue includes organized requests that are ready for processing on the storage device. It is managed by the device driver.

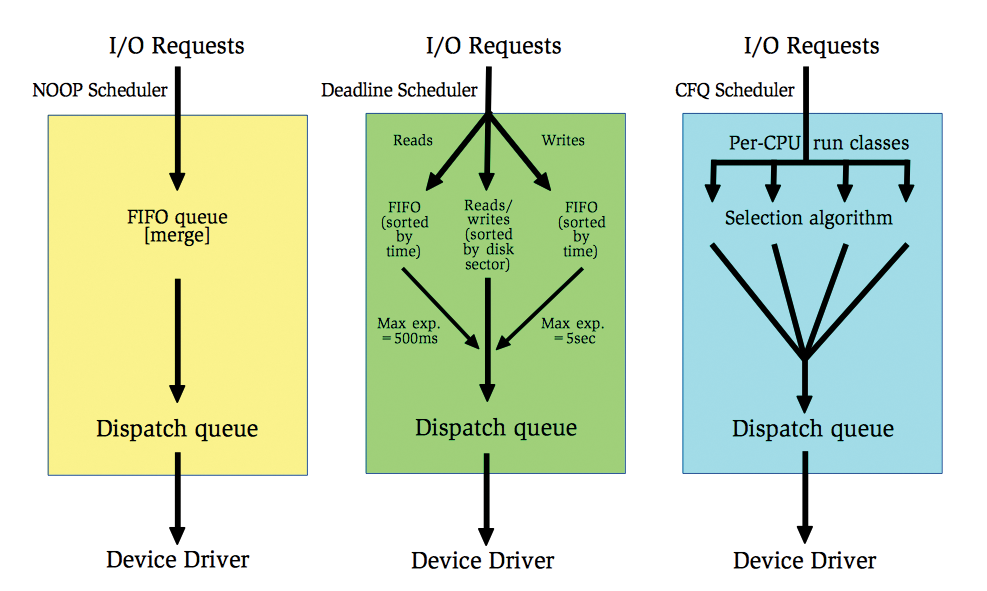

NOOP is a simple scheduler that collects all I/O requests in a FIFO queue (Figure 1, left). It merges requests to optimize them and thus reduces unnecessary access times, but it doesn't sort them. The device driver also processes the dispatch queue on a FIFO basis. The NOOP scheduler has no configuration options (tunables).
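NOOP's behavior (merge, but never reorder) fits into a few lines. In the sketch below, a request is a hypothetical `[start_sector, nr_sectors]` pair, and merging only checks the tail of the queue. This is a simplification of the kernel's generic merge logic, not its implementation.

```python
from collections import deque
from typing import Optional, Tuple

class NoopScheduler:
    """FIFO queue with back-merging only; requests are never reordered."""

    def __init__(self):
        self.queue = deque()  # each entry: [start_sector, nr_sectors]

    def add(self, sector: int, nr_sectors: int) -> None:
        if self.queue:
            last = self.queue[-1]
            if last[0] + last[1] == sector:  # contiguous: extend the previous request
                last[1] += nr_sectors
                return
        self.queue.append([sector, nr_sectors])

    def dispatch(self) -> Optional[Tuple[int, int]]:
        """Hand the oldest request to the driver, strictly in arrival order."""
        return tuple(self.queue.popleft()) if self.queue else None
```

Requests at sectors 100, 108, 0, and 50 (eight sectors each) leave the queue as (100, 16), (0, 8), (50, 8). The first two are merged, but the far-apart requests are not sorted by sector.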

The Deadline scheduler (Figure 1, center) tries to keep requests from "starving." For this purpose, it assigns each request an expiration time and pushes it into a read or write queue ordered by that time (deadline queues). The Deadline scheduler prefers reads over writes (by default, a read expires after 500ms and a write after 5s). A "sorted queue" keeps all requests ordered by disk sector to minimize seek time and feeds requests to the dispatch queue, unless a request in one of the deadline queues has expired. The scheduler thus tries to guarantee a predictable service start time. This scheme is advantageous because reads are mostly issued synchronously (blocking) and writes asynchronously (non-blocking).
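The interplay between the sorted queue and the two deadline queues can be sketched as follows. This is a simplified model. It uses the 500ms and 5s defaults from the text, checks reads before writes, and otherwise sweeps upward by sector from the last dispatched position, wrapping around at the end. The real scheduler also dispatches in batches (the `fifo_batch` tunable) and limits write starvation (`writes_starved`), and neither is modeled here. The class and method names are our own.

```python
import bisect
from collections import deque

READ_EXPIRE_MS = 500    # default read deadline mentioned in the text
WRITE_EXPIRE_MS = 5000  # default write deadline mentioned in the text

class DeadlineScheduler:
    def __init__(self):
        self.sorted = []                          # (sector, id), kept in sector order
        self.fifo = {"R": deque(), "W": deque()}  # (deadline, sector, id) in arrival order
        self.pending = {}                         # id -> (sector, direction)
        self.head = 0                             # sector of the last dispatch
        self._next_id = 0

    def add(self, sector, direction, now_ms):
        rid = self._next_id
        self._next_id += 1
        expire = READ_EXPIRE_MS if direction == "R" else WRITE_EXPIRE_MS
        bisect.insort(self.sorted, (sector, rid))
        self.fifo[direction].append((now_ms + expire, sector, rid))
        self.pending[rid] = (sector, direction)
        return rid

    def _fifo_head(self, direction):
        # Lazily drop FIFO entries whose requests were already dispatched
        q = self.fifo[direction]
        while q and q[0][2] not in self.pending:
            q.popleft()
        return q[0] if q else None

    def dispatch(self, now_ms):
        if not self.pending:
            return None
        for direction in ("R", "W"):  # expired reads take precedence over writes
            fifo_head = self._fifo_head(direction)
            if fifo_head and fifo_head[0] <= now_ms:
                rid = fifo_head[2]
                break
        else:
            # No deadline expired: continue the sector-ordered sweep
            i = bisect.bisect_left(self.sorted, (self.head, -1))
            rid = self.sorted[i % len(self.sorted)][1]
        sector, direction = self.pending.pop(rid)
        self.sorted.remove((sector, rid))
        self.head = sector
        return sector, direction
```

In normal operation, requests leave in sector order. However, a read at a distant sector that has waited longer than 500ms jumps ahead of closer requests.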

The CFQ (Completely Fair Queuing) scheduler (Figure 1, right) is the default scheduler for the Linux kernel, although some distributions use the Deadline scheduler instead. CFQ sets the following objectives:

- Fair distribution of the available I/O bandwidth to all processes of the same priority class via time slices. "Fair" refers to the length of the time slots, not to the bandwidth; that is, a process with sequential write access will obtain higher bandwidth than a process with random write access in the same time slot.

- The possibility of dividing processes into priority or scheduling classes (e.g., via ionice).

- Periodic processing of the process queues to distribute latency. The time slots allow high bandwidth for processes that can issue many requests in their slot.
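The point that CFQ is fair in time rather than in bandwidth can be shown with a tiny round-robin model. Each process receives the same time slice per round and completes as many requests as fit into it. The per-request service times (1ms sequential, 10ms random) and the 100ms slice are illustrative assumptions, not measurements. Priority classes and ionice are not modeled.

```python
from typing import Dict

def cfq_round_robin(processes: Dict[str, int], slice_ms: int, rounds: int) -> Dict[str, int]:
    """Give each process an equal time slice per round; count completed requests.

    processes maps a process name to its (assumed) service time per request in ms.
    """
    served = {name: 0 for name in processes}
    for _ in range(rounds):
        for name, cost_ms in processes.items():
            budget = slice_ms  # every process gets the same amount of disk time
            while budget >= cost_ms:
                budget -= cost_ms
                served[name] += 1
    return served
```

With `cfq_round_robin({"sequential": 1, "random": 10}, slice_ms=100, rounds=3)`, both processes get exactly the same disk time. The sequential writer completes 300 requests and the random writer only 30, so their bandwidth differs by a factor of ten.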

Beyond the Scheduler

Blk-mq is a new framework for the Linux block layer. It was introduced with Linux kernel 3.13 and completed in Linux kernel 3.16. Blk-mq allows more than 15 million IOPS on eight-socket servers with high-performance flash devices (e.g., PCIe SSDs), although single-socket and dual-socket servers also benefit from the new subsystem [1]. A device is controlled via blk-mq only if its device driver is based on blk-mq.

Blk-mq provides device drivers with the basic functions for distributing I/O requests to multiple queues. The tasks are distributed with blk-mq to multiple threads and thus to multiple CPU cores. Blk-mq-compatible drivers, for their part, inform the blk-mq module how many parallel hardware queues a device supports.
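The distribution of I/O across CPU cores can be sketched as a mapping from per-CPU software queues to the hardware queues a driver reports. The simple `cpu % nr_hw_queues` round-robin used below is an assumption for illustration. The kernel's actual mapping also takes CPU topology into account. Both function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

def map_cpus_to_hw_queues(nr_cpus: int, nr_hw_queues: int) -> Dict[int, int]:
    """Assign each CPU's software queue to a hardware queue (simple round-robin)."""
    if nr_hw_queues < 1:
        raise ValueError("a device must expose at least one hardware queue")
    return {cpu: cpu % nr_hw_queues for cpu in range(nr_cpus)}

def submit(requests: Iterable[Tuple[int, str]], mapping: Dict[int, int]) -> Dict[int, List[str]]:
    """Route each (cpu, request) pair to the hardware queue mapped to that CPU."""
    hw_queues: Dict[int, List[str]] = defaultdict(list)
    for cpu, req in requests:
        hw_queues[mapping[cpu]].append(req)
    return dict(hw_queues)
```

A device that reports only one hardware queue still works, since every CPU then maps to queue 0. A device with as many hardware queues as CPUs gives each core its own submission path.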

Blk-mq-based device drivers bypass the previous Linux I/O scheduler. Some drivers did that in the past without blk-mq (ioMemory-vsl, NVMe, mtip32xx); however, as BIO-based drivers, they had to provide a lot of generic functions themselves. These types of drivers are on the way out and are gradually being reprogrammed to take the easier path via blk-mq (Table 1).

Table 1: Drivers with blk-mq Support

| Driver | Device Name | Supported Devices | Kernel Support |
|---|---|---|---|
| null_blk | | None (test driver) | 3.13 |
| virtio-blk | | Virtual guest driver (e.g., in KVM) | 3.13 |
| mtip32xx | | Micron RealSSD PCIe | 3.16 |
| SCSI (scsi-mq) | | SATA, SAS HDDs and SSDs, RAID controllers, FC HBAs, etc. | 3.17 |
| NVMe | | NVMe SSDs (e.g., Intel SSD DC P3600 and P3700 series) | 3.19 |
| rbd | | RADOS block device (Ceph) | 4.0 |
| ubi/block | | UBI volumes on raw flash (MTD) | 4.0 |
| loop | | Loopback device | 4.0 |

SCSI Layer

For some storage devices, the path leads from the block layer directly to the respective hardware driver, for example, to the NVMe driver for new NVMe flash memory, which has used blk-mq since kernel version 3.19. However, for all storage devices addressed via a device file such as /dev/sd*, /dev/sr*, or /dev/st*, the path first leads to the SCSI midlayer. This applies not just to SCSI and SAS devices, but also to SATA storage, RAID controllers, and FC HBAs.
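The routing rule described above can be expressed as a rough heuristic based on device-node names. The helper below is hypothetical and only inspects the name. It assumes the usual naming conventions (`sd*` for disks, `sr*` for optical drives, `st*` for tapes, `nvme<N>n<M>` for NVMe namespaces). A real tool should query sysfs rather than rely on names.

```python
import re

def storage_path(dev_node: str) -> str:
    """Rough guess at which driver path a device node takes (heuristic only)."""
    name = dev_node.rsplit("/", 1)[-1]
    # NVMe namespaces look like nvme0n1, optionally with a partition suffix (p1)
    if re.fullmatch(r"nvme\d+n\d+(p\d+)?", name):
        return "NVMe driver"
    # sd* (disks, optionally with a partition number), sr* (optical), st* (tape)
    if re.fullmatch(r"sd[a-z]+\d*|sr\d+|st\d+", name):
        return "SCSI midlayer"
    return "other"
```

Under these assumptions, `/dev/sda1` and `/dev/sr0` are routed through the SCSI midlayer, `/dev/nvme0n1p2` goes straight to the NVMe driver, and a virtio disk such as `/dev/vda` falls into neither group.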

Thanks to scsi-mq, such devices can be addressed via blk-mq starting with Linux kernel 3.17. However, this path is currently disabled by default. Christoph Hellwig, the main developer of scsi-mq, hopes to have scsi-mq enabled by default in future Linux versions so that the old code path can be removed completely at a later date [2].

SCSI low-level drivers at the lower end of the storage stack are the drivers that actually address the respective hardware components. They range from the generic libata drivers for (S)ATA components, through RAID controller drivers such as megaraid_sas (Avago Technologies MegaRAID, formerly LSI) or aacraid (Adaptec), to FC HBA drivers such as qla2xxx (QLogic) and the paravirtualized drivers virtio_scsi and vmw_pvscsi. For these drivers to use multiple parallel hardware queues via blk-mq/scsi-mq in the future, each driver must be adapted accordingly.

Conclusions

The Linux Storage Stack implements everything an operating system needs to address current storage hardware. Its modular design with well-defined interfaces allows the kernel developers to continue developing and improving individual parts independently. This is also reflected in the new blk-mq, which will gradually take over from the previous I/O schedulers in order to address high-performance flash memory.

Specifically, the kernel developers are planning blk-mq support for the device mapper multipath driver (dm-multipath), I/O scheduler support in the blk-mq layer, and scsi-mq/blk-mq support for iSCSI and FC HBA drivers [3] in the next kernel versions.