DDoS protection in the cloud

Inside Defense

Attacks based on the distributed denial of service (DDoS) model are, unfortunately, common practice, often used to extort protection money or sweep unwanted services off the web. Currently, such attacks can reach bandwidths of 300Gbps or more. Admins usually defend themselves by securing the external borders of their own networks and listening for unusual traffic signatures on the gateways. Sometimes they fight attacks even farther outside the network, on the Internet provider's side, by diverting or blocking the attack before it overloads the line and paralyzes the victim's services.

In the case of cloud solutions and traditional hosting providers, the attackers and their victims often reside on the same network. Thanks to virtualization, they could even share the same CPU core. In this article, I show you how to identify such scenarios and fight them off with software-defined networking (SDN) technologies.

Detecting Space Invaders

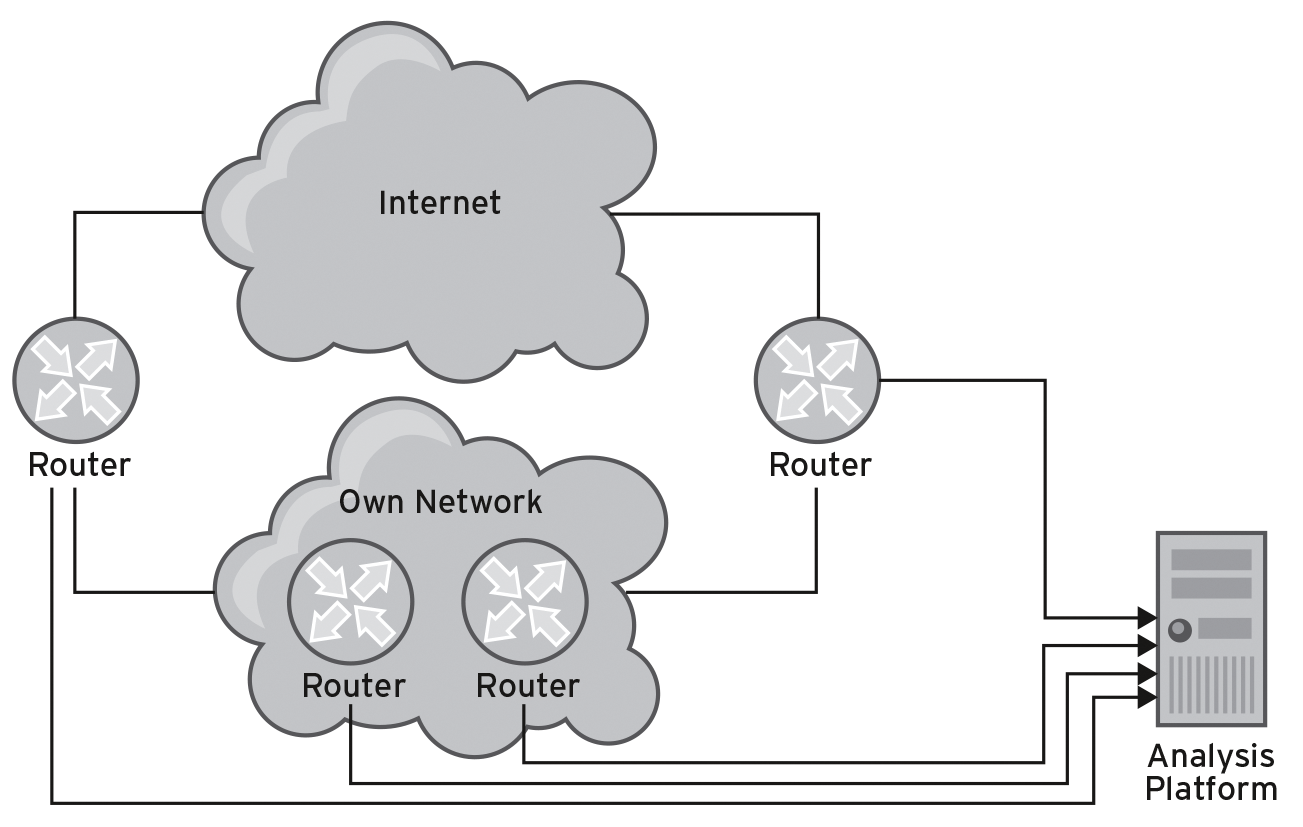

To detect DoS attacks, you can evaluate network usage data with analysis platforms such as Arbor's Peakflow [1] or Flowmon's Collector [2]. You have two ways to obtain the data: Either you collect it via SNMP from the routers that act as gateways, or you have the routers send it to you via the NetFlow or IP flow information export (IPFIX) protocol. The second choice works more precisely, because it delivers data for each individual connection, including the source and destination IP addresses, ports, and IP protocol information, so you can detect variations in the connection patterns. SNMP counters do not necessarily offer such options, depending on the management information base (MIB) used [3]. Figure 1 shows a typical setup that you could use to identify malicious connections. Note that collecting and providing data on the routers produces CPU load.

In most cases, the load on the Internet connection depends on the day of the week, time of day, and role of the company using it, making it difficult to establish static detection rules. Limits set too narrow trigger false alarms during normal peaks, whereas limits set too wide can let an attack through. A better approach is to perform statistical analysis on normal traffic over a longer period of time and under normal operating conditions. The resulting rules will tolerate the usual performance peaks but ideally still detect attacks.
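The idea of a statistical baseline can be illustrated with a few lines of Python. This is only a minimal sketch, not the method used by any of the products mentioned: It assumes you already have historical packets-per-second samples for a comparable time slot, and the sample values and the threshold factor k are made up for illustration.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Return (mean, standard deviation) of historical traffic samples."""
    return mean(samples), stdev(samples)


def is_anomalous(value, baseline, k=3.0):
    """Flag a value that lies more than k standard deviations above the mean."""
    average, deviation = baseline
    return value > average + k * deviation


# Hypothetical packets-per-second samples taken in the same hour of the
# week during the training period (assumed values, not measurements).
history = [1000, 1100, 950, 1050, 1200, 980]
baseline = build_baseline(history)

for current in (1150, 5000):
    print(current, "anomalous" if is_anomalous(current, baseline) else "normal")
```

A usual peak such as 1,150 packets per second stays below the learned limit, whereas a jump to 5,000 exceeds it. Real products use considerably more refined models, but the principle of learning the limit rather than hard-coding it is the same.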

Countermeasures

The easiest defense against a DoS attack would be to block the source IP of the incoming traffic. In the case of a DDoS attack, this is not so easy. Because the first D stands for "distributed," the attacks come from many different source IPs. Two more blocking options remain: One is to block the destination IPs, and the other is to filter on the destination ports, although the attack needs to be structured appropriately for this to work.

If the attacks are only aimed at clogging the pipe, attackers often use reflection attacks, which misuse services such as DNS or NTP on the rebound by sending small requests that the services respond to with overly long responses. In their requests, the attackers enter the IP address of the victim as the sending IP address; the long responses then flood the victim's connections.

If the victim uses the services leveraged for the attack (e.g., NTP or DNS) exclusively externally or exclusively internally, a filter can block the unwanted packets. In the case of flooding via NTP, you could, for example, enter Any as the source address for the filter and the IP address of the victim as the destination, with a source port of 123 and UDP as the protocol. That would weed out unwanted packets without interfering with the victim's other services, such as web servers.
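Expressed as code, the NTP filter just described boils down to a simple predicate. The following sketch assumes a hypothetical Packet record and uses 10.1.1.2 as a stand-in for the victim's address; it only illustrates the matching logic, not how a router or firewall implements it.

```python
from dataclasses import dataclass
from ipaddress import ip_address

UDP = 17       # IANA protocol number for UDP
NTP_PORT = 123


@dataclass(frozen=True)
class Packet:
    """Hypothetical, simplified view of a packet header."""
    src_ip: str
    dst_ip: str
    protocol: int
    src_port: int
    dst_port: int


def is_ntp_reflection(packet, victim_ip):
    """Match source Any, destination = victim, UDP, source port 123."""
    return (
        packet.protocol == UDP
        and packet.src_port == NTP_PORT
        and ip_address(packet.dst_ip) == ip_address(victim_ip)
    )


victim = "10.1.1.2"  # assumed victim address
flood = Packet("203.0.113.7", victim, UDP, 123, 40000)
web = Packet("198.51.100.9", victim, 6, 51000, 80)

print(is_ntp_reflection(flood, victim))  # NTP response aimed at the victim
print(is_ntp_reflection(web, victim))    # ordinary web request
```

Because the rule keys on the source port of the reflected responses, web traffic to the same destination address passes untouched.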

The Thick End

So much for the theory. In practice, it is typically a hopeless cause to try to fend off an attack with 100Gbps bandwidth on a 1 or 10Gbps link. By the time a corresponding filter protects the internal infrastructure, the line is already overloaded. Although the attack no longer affects the internal servers, you still will not reach anyone on the Internet.

For effective defense you need to take up a position at the thick end of the line, and you have a choice of several approaches. Cloud mitigation means the Internet provider uses the Border Gateway Protocol (BGP) to redirect all traffic through a kind of Internet washing machine before it reaches the attack victim's server.

The washing machine cleans the traffic with the help of special appliances, which are optimized for DDoS defense – in contrast to traditional firewalls – and then forwards the safe traffic through a tunnel to the victim's server. This adds some latency, but users will hardly notice it in most cases. Individual providers offer this protection directly to their customers so that a redirect is not necessary.

For larger connections that also use BGP internally, it is possible to configure a null route on the loopback device on the router for the attacked targets and to propagate this to the outside (i.e., to providers) [4]. The disadvantages are that the ISPs need to agree to this plan and that the approach knocks the complete IP address, and all services provided through it, off the web. Usually, you also need to act on far larger network blocks, because BGP filters do not accept small routing table entries.

Intracloud

The scenarios described so far always have an outside and an inside; the detection and filtering methods described only work at the external borders of a network. If attackers misuse parts of your own infrastructure, the only way to tell is by examining the outbound traffic; however, not all solutions do this.

Under certain circumstances, the situation can be more complex for operators of cloud environments. In an infrastructure as a service (IaaS) offering, many virtual machines (VMs) are managed by the customers themselves and do not always have the latest patches. This makes them vulnerable and potential sources of a DDoS attack (e.g., via NTP reflection attacks).

For DDoS attackers, systems with fast connections in a data center are gems, because, unlike private systems behind an ADSL line, they are backed by high bandwidth, meaning attackers need fewer compromised systems for an effective attack.

Therefore, attackers will conceivably look for their victims in the same cloud. The attacking machine and the victim's VM might even run on the same host. In this case, although customers can no longer reach the service, none of the external network devices (routers or switches) measure an increase in network load. The anomaly is only noticeable when you look at the virtual infrastructure. So what do you need for security?

Virtual is Better

Fortunately, virtual switches such as Open vSwitch (OvS) [5] in KVM, Xen, or OpenStack environments, as well as the distributed switches from VMware, give you an option for exporting NetFlow data. If you want an Open vSwitch named testswitch to send flow data to port 3000 on the IP address 10.1.1.1 (i.e., 10.1.1.1:3000), you would use the command:

ovs-vsctl -- set Bridge testswitch netflow=@nf -- --id=@nf create NetFlow targets=\"10.1.1.1:3000\" active-timeout=30

If a framework such as OpenStack is used to manage many VMs on many hosts, this function unfortunately is not integrated, so you would have to enter it manually on the individual compute nodes.

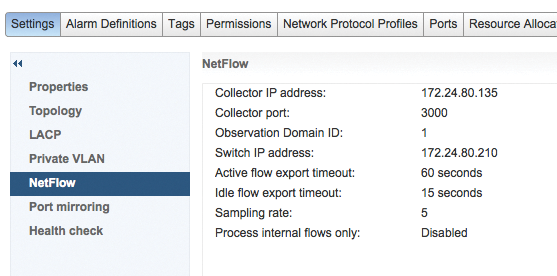

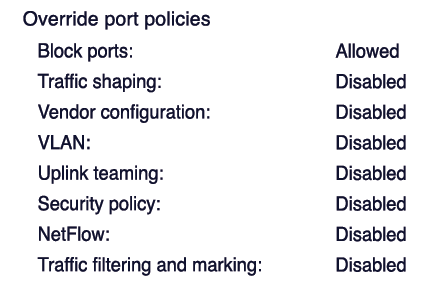

VMware splits the configuration into two parts. In the network settings, the network administrator configures a NetFlow collector for each distributed vSwitch (Figure 2). For each distributed port group, you then decide whether or not you want it to send flow data (Figure 3).

SDN

In the case of Open vSwitch, the virtual switch itself can block the attack packets if necessary. Open vSwitch supports the OpenFlow protocol (see the "OpenFlow" box), which can drop packets on the basis of certain criteria. At the command line, you can generate filters with the ovs-ofctl tool. The following example drops all packets headed for IP address 10.1.1.2 and UDP destination port 1234:

ovs-ofctl add-flow testswitch ip,nw_dst=10.1.1.2,udp,udp_dst=1234,actions=drop
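If you need to generate such filters from a script, a small helper can assemble the command from its parameters. The sketch below is an assumption about how you might do this in Python; the function name is hypothetical, and the match string simply mirrors the ovs-ofctl call shown above. The command is only printed here, not executed.

```python
import shlex
from ipaddress import ip_address


def drop_flow_command(bridge, dst_ip, udp_port):
    """Build the ovs-ofctl argument list for dropping UDP packets to one target."""
    ip_address(dst_ip)  # raises ValueError if dst_ip is not a valid address
    if not 0 < int(udp_port) < 65536:
        raise ValueError("UDP port out of range")
    match = f"ip,nw_dst={dst_ip},udp,udp_dst={int(udp_port)},actions=drop"
    return ["ovs-ofctl", "add-flow", bridge, match]


cmd = drop_flow_command("testswitch", "10.1.1.2", 1234)
print(shlex.join(cmd))
# On a host with Open vSwitch installed, you could hand the list to
# subprocess.run(cmd, check=True) instead of printing it.
```

Passing the arguments as a list, rather than as one shell string, avoids quoting problems if the values ever come from an untrusted source such as a detection system.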

As a highlight, if you operate in SDN environments, you do not need to log in individually to each device. Rather, a central point in the network takes over control. The controller manages the flows from a single source and distributes them to all relevant devices, and as the admin, you have more or less a bird's-eye view.

Flow Control

One of the most popular controllers is OpenDaylight [7]. It communicates via different protocols, including the OpenFlow protocol, with the managed devices. For the user, the controller provides a RESTful API that supports device configuration. RESTful interfaces reflect the object that the user is editing in the URL, whereas the HTTP method maps the applicable actions.

At a logical level this means, figuratively, that the call

DELETE http://<server>/auto/door/frontleft/window

removes the window in the left front door. When a call transmits data (e.g., to describe a door), the REST API expects the parameters of the object in JSON notation or as XML.

The API that administrators use to manage flows in OpenDaylight resides below a node. A node is nothing more than a (virtual) switch in the OpenFlow context of OpenDaylight. OpenDaylight distinguishes two prefixes: One is used to manipulate objects, the other to query the database for existing objects. Typically, the controller listens on port 8181. The URL prefixes for changing objects and for reading them are

http://<server>:8181/restconf/config
http://<server>:8181/restconf/operational

respectively. To navigate the functional tree of the controller, you extend the URLs accordingly. A URL path to create a flow can look like:

http://<server>:8181/restconf/config/opendaylight-inventory:nodes/node/openflow:12345/table/0/flow/2

where opendaylight-inventory:nodes is the entry point for all objects of type node. Specifically, the node is of type openflow and has an ID of 12345. For nodes managed through a different protocol, the openflow prefix before the node ID would be replaced accordingly.

The name of the switch is followed by the term table and then the index of the table. You should keep in mind that OpenFlow as of version 1.3 manages several tables with flows; thus, processing can jump to another table and continue there. At the end of the URL, the flow entry has a unique ID.
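The structure of the path lends itself to a small helper function. The following Python sketch assembles the URL from its components; the function name and the server name odl.example are assumptions for illustration, whereas the path elements are those described above.

```python
def flow_url(server, node_id, table_id, flow_id, port=8181):
    """Assemble the RESTCONF config URL for one flow entry on one node."""
    return (
        f"http://{server}:{port}/restconf/config/"
        f"opendaylight-inventory:nodes/node/{node_id}/"
        f"table/{table_id}/flow/{flow_id}"
    )


# Hypothetical controller host name; node, table, and flow as in the text
print(flow_url("odl.example", "openflow:12345", 0, 2))
```

Keeping the node ID, table index, and flow ID as separate parameters makes it easy to address the same flow on many switches in a loop.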

The River Flows

To create a new flow, you use the PUT method and send a definition in JSON or XML format that states what the flow filters and what action it should take on a match. The OpenFlow implementation on the executing device limits the filter options. Depending on the version of OpenFlow, you can filter for all sorts of things, from the Ethernet level to flags in the TCP header.

If you are looking to fend off a DDoS attack, you need to filter for both IPv4 and IPv6 addresses, the IP protocol, and the destination port. With OpenFlow, you not only can drop packets, but change addresses and virtual local area networks (VLANs) and set the output port – and these are just some of the many options.

Rate limiting is interesting for DDoS defense and is one of the meters mentioned earlier. Unfortunately Open vSwitch does not support it; in this case only the DROP action remains. In the logic of OpenFlow, dropping a packet is a filter without an action. The JSON syntax for doing this is shown in the example in Listing 1.

Listing 1: Dropping Packets; JSON Syntax

{
  "flow": {
    "id": "5",
    "match": {
      "ethernet-match": {
        "ethernet-type": {
          "type": "0x0800"
        }
      },
      "ip-match": {
        "ip-protocol": 17
      },
      "ipv4-destination": "10.1.1.2/32",
      "udp-destination-port": 1234
    },
    "table_id": 0,
    "priority": 10
  }
}

This flow definition has the same effect as the example shown earlier with ovs-ofctl; however, note that to classify IP packets, the definition must contain the appropriate Ethernet type (e.g., for IPv6, this would be 0x86dd). To describe UDP packets, you need to state the IP protocol. It would make sense for the controller to make useful assumptions when you type in the port definition, but unfortunately this is not the case.

Flow managers must also state the IP address with a mask of /32 if it is a single address. The id and table_id fields are another special case. They must match the path in the URL; otherwise, the controller will accept the flow without protest but will not create it. In other words, this flow for OpenFlow node 1 works exclusively on the following URL:

http://<server>:8181/restconf/config/opendaylight-inventory:nodes/node/openflow:1/table/0/flow/5
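Because a mismatch between the body and the URL fails silently, it can make sense to check both before uploading. The following Python sketch builds a flow body with the fields from Listing 1 and compares its id and table_id with the end of the URL. The function names are hypothetical, and the check is an assumption about what a cautious script might do, not a feature of the controller.

```python
import json


def build_drop_flow(flow_id, table_id, dst_ip, udp_port, priority=10):
    """Build a flow body that drops UDP packets to dst_ip:udp_port."""
    return {
        "flow": {
            "id": str(flow_id),
            "table_id": table_id,
            "priority": priority,
            "match": {
                "ethernet-match": {"ethernet-type": {"type": "0x0800"}},
                "ip-match": {"ip-protocol": 17},        # 17 = UDP
                "ipv4-destination": f"{dst_ip}/32",     # single address
                "udp-destination-port": udp_port,
            },
        }
    }


def matches_url(body, url):
    """Check that id and table_id agree with .../table/<table>/flow/<id>."""
    parts = url.rstrip("/").split("/")
    flow = body["flow"]
    return (
        parts[-4] == "table"
        and parts[-3] == str(flow["table_id"])
        and parts[-2] == "flow"
        and parts[-1] == str(flow["id"])
    )


url = ("http://ODL:8181/restconf/config/opendaylight-inventory:nodes/"
       "node/openflow:1/table/0/flow/5")
body = build_drop_flow(5, 0, "10.1.1.2", 1234)
print(matches_url(body, url))
print(json.dumps(body, indent=2))
```

A script could refuse to send the request whenever matches_url returns False, which turns the controller's silent failure into a visible one.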

A final important field is priority, on the basis of which OpenFlow-enabled switches sort their flows. The higher it is, the more important the flow. Usually admins pass a flow with a NORMAL action and a priority of 1 to the Open vSwitch switches, which means: "Behave like a normal switch." If filters need to override this behavior, they need a higher priority.
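The effect of the priority field can be simulated in a few lines. The following Python sketch is a deliberately simplified model of flow selection, not the lookup algorithm of a real switch: It assumes flows are dictionaries with exact-match fields and picks the matching flow with the highest priority.

```python
def select_flow(flows, packet):
    """Return the matching flow with the highest priority, or None."""
    candidates = [
        flow for flow in flows
        if all(packet.get(field) == value for field, value in flow["match"].items())
    ]
    return max(candidates, key=lambda flow: flow["priority"], default=None)


flows = [
    # Catch-all flow: empty match, behaves like a normal switch
    {"match": {}, "priority": 1, "action": "NORMAL"},
    # Filter flow with a higher priority, as in Listing 1
    {"match": {"nw_dst": "10.1.1.2", "udp_dst": 1234}, "priority": 10, "action": "DROP"},
]

attack = {"nw_dst": "10.1.1.2", "udp_dst": 1234}
benign = {"nw_dst": "10.1.1.2", "udp_dst": 53}

print(select_flow(flows, attack)["action"])
print(select_flow(flows, benign)["action"])
```

The attack packet matches both flows, but the filter wins because of its higher priority; all other traffic still falls through to the NORMAL flow.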

Out!

Several routes are open for uploading this definition, including a program in your favorite programming language. Because of the simple structure, you do not even need a REST library, simply one with HTTP support. A REST client browser plugin is useful for testing individual requests. Postman is a very powerful candidate (Figure 4) for Chrome [8], and Curl is suitable for uploading data (Listing 2). This call will succeed if the JSON definition of the flow exists in the drop.json file (line 5).

Listing 2: Curl Access

01 curl -u admin:admin
02 -H "Accept: application/xml"
03 -H "Content-Type: application/json"
04 -X PUT
05 -d @drop.json http://ODL:8181/restconf/config/opendaylight-inventory:nodes/node/openflow:1/table/0/flow/5

With the -d option, Curl either accepts a string with data or reads the data from the file named after the @. The -X parameter sets the HTTP method, and -u <username>:<password> handles authentication. The -H "Content-Type: application/json" option tells the server that you are transferring the data in JSON format.
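The same request can be prepared with nothing but the Python standard library. The sketch below mirrors the Curl call from Listing 2 and makes the same assumptions (controller reachable as ODL, credentials admin:admin); the function name is hypothetical. It only builds the request object; the line that actually sends it is commented out so that the example runs without a controller.

```python
import base64
import json
import urllib.request


def build_put_request(url, flow, user="admin", password="admin"):
    """Prepare an HTTP PUT with basic authentication and a JSON body."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    return urllib.request.Request(
        url,
        data=json.dumps(flow).encode("utf-8"),
        method="PUT",
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
            "Accept": "application/xml",
        },
    )


url = ("http://ODL:8181/restconf/config/opendaylight-inventory:nodes/"
       "node/openflow:1/table/0/flow/5")
flow = {"flow": {"id": "5", "table_id": 0, "priority": 10}}  # shortened body
request = build_put_request(url, flow)
print(request.get_method(), request.full_url)

# To send the request to a running controller:
# with urllib.request.urlopen(request) as response:
#     print(response.status)
```

In a real script, the flow dictionary would of course contain the complete match section from Listing 1 rather than the shortened body used here.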

Putting It All Together

The pieces of the puzzle for detecting and preventing attacks in a cloud infrastructure are now all available; you just need to put them together. The commercial Flowmon Collector [2] by the Czech company Flowmon (formerly Invea) analyzes NetFlow data and creates meaningful reports from it. Under the hood it relies on nfdump and nfsen, two open source tools. Other applications then jump on the collected data and perform evaluation according to specific criteria. One of these Flowmon applications is named "DDoS Defender."

You need to define a training period for subnets that you want to monitor for DDoS activity. When this has expired, a rule then determines when the software triggers actions; for example, when a certain value is exceeded by more than 300 percent. Actions can include sending email, creating SNMP traps and syslog messages, and setting up redirects via BGP and shell scripts. The latter option can in turn be used to start a script that instructs the OpenDaylight controller to enable filtering flows.

DDoS Defender can trigger the action when it detects an attack, or after it has collected data about the attack, so you know which IP addresses and ports play a role in the attack. A script then reads the data from the environment that is passed in to it. On this basis, and depending on the desired behavior, it builds a filter and distributes it independently to the appropriate virtual switches.
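How such a script might turn environment data into a filter is sketched below in Python. Note that the variable names ATTACK_DST_IP, ATTACK_DST_PORT, and ATTACK_PROTOCOL are purely hypothetical placeholders; the names that DDoS Defender actually passes in are not covered here and need to be taken from the product documentation. For simplicity, the sketch only handles UDP, as in Listing 1.

```python
import os
from ipaddress import ip_address


def flow_from_environment(env=None, flow_id="5", table_id=0, priority=10):
    """Build a drop flow from (hypothetical) environment variables."""
    env = os.environ if env is None else env
    protocol = env.get("ATTACK_PROTOCOL", "udp").lower()
    if protocol != "udp":
        raise ValueError("this sketch only handles UDP attacks")
    dst_ip = str(ip_address(env["ATTACK_DST_IP"]))  # validates the address
    dst_port = int(env["ATTACK_DST_PORT"])
    return {
        "flow": {
            "id": str(flow_id),
            "table_id": table_id,
            "priority": priority,
            "match": {
                "ethernet-match": {"ethernet-type": {"type": "0x0800"}},
                "ip-match": {"ip-protocol": 17},
                "ipv4-destination": f"{dst_ip}/32",
                "udp-destination-port": dst_port,
            },
        }
    }


# Simulated environment, as a detection system might hand it to the script
sample_env = {
    "ATTACK_DST_IP": "10.1.1.2",
    "ATTACK_DST_PORT": "1234",
    "ATTACK_PROTOCOL": "UDP",
}
flow = flow_from_environment(sample_env)
print(flow["flow"]["match"])
```

The resulting dictionary can then be serialized to JSON and uploaded to the controller once for every virtual switch that should carry the filter.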

Conclusions

As a component in SDN, OpenFlow controls traffic flows [9]. Even if it is (still) not widely used as a protocol on network hardware, apart from white-box operating systems such as PicOS [10] or switches by Corsa [11], it is used by default in environments with Open vSwitch.

As I have shown in this article, the OpenFlow technology can also be used for security. Rather than dropping packets, it would also be possible to redirect them via OpenFlow to a kind of network washing machine that separates benign from malicious traffic. At the same time, flow data from the virtual switches reveals attacks between virtual hosts that might otherwise remain hidden from the administrator.