The utility of native cloud applications

Partly Cloudy

Many standard applications are very difficult to roll out in a cloud, and the more specific the use case (e.g., software developed in your own organization years ago and adapted to your requirements), the more likely it will run only within its original environment.

Native cloud applications distinguish themselves from traditional applications, in that you can roll them out easily in almost any cloud stack and automatically leverage the available services. In this article, I consider the question of how clouds differ from traditional virtualization and how important these differences are for software that you want to run in a cloud. I also look into what programmers should consider from the outset to prepare an application for use in clouds.

Flexibility Is a Prerequisite

In the cloud community, they say, it is all about cats and cattle. Cats are classical physical or virtual systems that the admin sets up manually, with great attention to detail: A cat is raised by hand, it is given a name, and goes to the vet if it is sick. The corresponding virtual machines (VMs) are also unique and given special treatment. They cannot be recovered easily, for example, because they contain transactional data. Typically when they break, at least part of the entire platform grinds to a standstill. You can't stretch the metaphor beyond this point, because providers typically design special-purpose VMs of this type redundantly, so they survive even the failure of critical infrastructure, such as the hardware.

Clouds need more flexibility. Each VM is ideally the result of a defined and precisely reproducible process, in the course of which it is generated automatically. At any time, it is possible to expand an existing virtual instance, adding another instance of the same type with the same configuration.

This requirement reflects the limited availability of individual instances in a cloud. If you run 1,000 or more computers as part of a cloud, you can't make each individual VM redundant without costs skyrocketing. Instead, it is the admin's responsibility on such platforms to keep each VM as generic as possible and to construct the environment so that the failure of individual instances does not lead to failure of the entire platform.

In this context, the comparison with cattle becomes understandable: In a herd of cattle, the animals typically have no names, just a tag in their ear with a number, so it is no longer about the individual animal, but simply the result produced by the group of animals.

IaaS Is Just a Crutch

If companies migrate existing software to the cloud, the goal is typically infrastructure as a service (IaaS; Figure 1); in other words, an application already deployed in a department or at a customer site simply moves from VMs in the data center to other VMs, either in a private cloud in the corporate data center or in a cloud operated by a public provider. After the migration, the benefits of the cloud are available to the application, such as the ability to launch new virtual instances quickly. The same process might have taken weeks before, because hardware would have had to be purchased first.

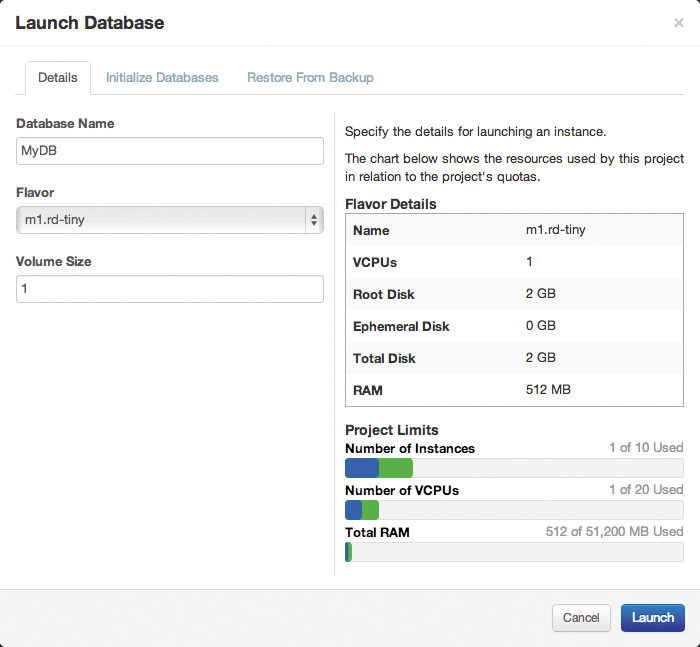

Soon the admin in charge will notice that virtually all major clouds – whether public or private – have different services on offer besides IaaS, such as databases as a service (DBaaS), wherein the cloud provider relieves the customer of the responsibility of operating a database, such as MySQL (Figure 2). Using the cloud's own interface or an orchestration solution, the customer clicks to compose a virtual database and receives an IP address and login information at the end of the process. The customer enters these credentials in their application and then simply shuts down their own VM with MySQL. This process is a typical example of how users leverage generic services in a cloud.

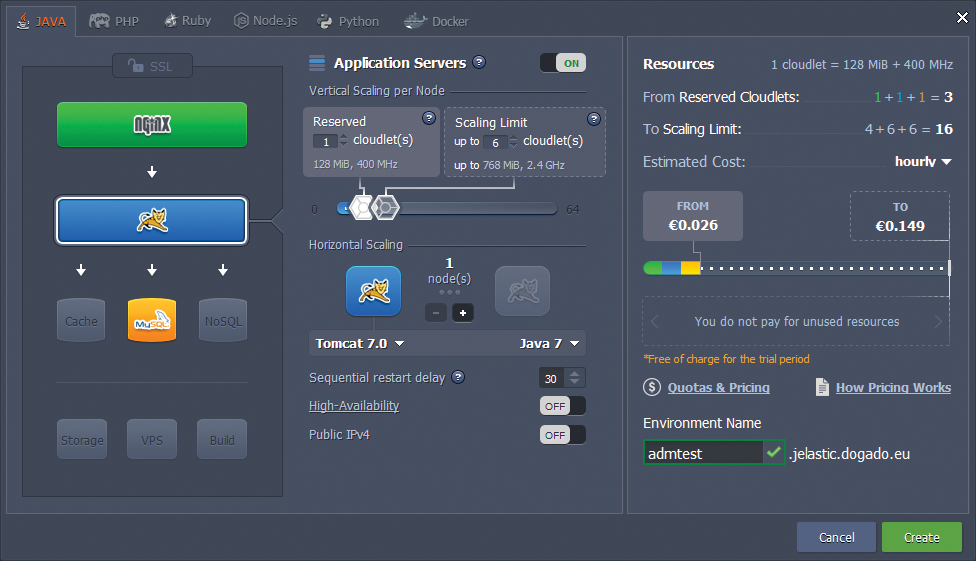

The same is true with Platform as a Service (PaaS), which even eliminates the operating system as a component for the user to manage. With PaaS, the customer can use a complete, ready-for-action environment (Figure 3), such as one in which they can run PHP websites or in which C++ programs work. The customer simply installs their application in this environment and – hey presto – it is ready for use.

At this point, it often becomes painfully obvious where the differences between a native cloud application and one from the classical world lie: Classical apps require individually tailored libraries or interfaces or are rigidly linked to external services such as databases. Most conventional systems tend to be monolithic, with one big program, rather than the many small components you find in a PaaS environment.

Twelve Rules

How should companies develop new applications or rebuild existing ones, to operate them easily and seamlessly in clouds and PaaS environments yet ensure that they scale and cope well with the limited availability guaranteed in the cloud?

The Twelve-Factor App [1] provides important information on this topic. This methodology, written by Adam Wiggins, lists 12 rules that app developers should adopt. The first concern is how the programmer should deal with their code.

Central Code

In a classical setup, the admin typically copies an app from server to server as a tarball and then individually extracts each. In clouds, this approach is not practical. Preferably, all of the required code is kept in a version control system (e.g., Git), so it can be checked out anywhere. Version control is especially useful if you want to roll out different setups in different clouds with the same source code. At the same time, this process ensures that everyone really does use the same source code.

Another condition must be met: The source code of the application must not contain customer-specific details or configuration files for a particular setup. In clouds, the basic expectation is that the configuration comes from the environment. You can achieve this in different ways. One way is to have a configuration database running in the background (e.g., Etcd or Consul) that is rolled out along with the application and fed the required data. The application then connects to this service to retrieve its data.
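Whatever the source of the data, the application side looks much the same: Settings are read at startup instead of being baked into the code tree. The following is a minimal sketch, assuming the platform hands the values to the process as environment variables (the approach the Twelve-Factor methodology itself describes). The variable names `APP_DB_HOST`, `APP_DB_PORT`, and `APP_DEBUG` are hypothetical and chosen only for illustration.

```python
import os


class ConfigError(RuntimeError):
    """Raised when a required setting is missing from the environment."""


def load_config(environ=os.environ):
    """Build the application's settings from environment variables only.

    The variable names are hypothetical; nothing is read from files
    that ship with the source code.
    """
    try:
        db_host = environ["APP_DB_HOST"]  # required, no sensible default
    except KeyError as exc:
        raise ConfigError(f"missing required setting: {exc.args[0]}") from exc
    return {
        "db_host": db_host,
        "db_port": int(environ.get("APP_DB_PORT", "3306")),
        "debug": environ.get("APP_DEBUG", "0") == "1",
    }
```

Because the function accepts any mapping, the same code can be exercised in tests with a plain dictionary and run unchanged in each cloud, where only the environment differs.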

Another way is to save the configuration data in the metadata of the cloud environment. All major clouds offer the option of defining metadata when you create VMs. The metadata must contain all the information required for operating the platform, and the application must retrieve that information accordingly. Amazon, and clouds that seek to be compatible with Amazon, solve the problem with cloud-init. When a cloud VM boots, the tool connects to the link-local address http://169.254.169.254 and reads the stored metadata. An application on the VM can use the same approach.
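A minimal sketch of how an application might query the metadata service itself is shown below. It assumes the EC2-style path layout under `/latest/meta-data/`; other clouds may use different paths, and newer setups may require an additional session token request, which this sketch omits. The function name `read_metadata` and its `opener` parameter are illustrative assumptions, not part of any cloud SDK.

```python
import urllib.request

# EC2-style layout; treat the exact path as an assumption for other clouds.
METADATA_BASE = "http://169.254.169.254/latest/meta-data/"


def read_metadata(key, opener=urllib.request.urlopen, timeout=2.0):
    """Return one metadata value as text, for example key='instance-id'.

    The opener is injectable so the function can be tested away from a
    cloud VM, where the link-local address is not reachable.
    """
    with opener(METADATA_BASE + key, timeout=timeout) as response:
        return response.read().decode("utf-8").strip()
```

On a cloud VM, `read_metadata("instance-id")` would return the identifier assigned to the instance; outside a cloud, the call simply times out, which is why a short timeout is set.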

For the application to be executable on as many vanilla VMs as possible, you also need to define its dependencies cleanly: For example, to roll out automatically, a PHP application has to ensure that all required PHP modules are installed. The same applies to virtually all scripting and programming languages.
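In practice, dependencies are usually declared in a manifest that the language's package manager resolves at build time. As a complementary safeguard, an application can also verify at startup that everything it needs is present. The sketch below shows the idea in Python rather than PHP; the module list is a hypothetical example.

```python
import importlib.util

# Hypothetical list of top-level modules this application needs.
REQUIRED_MODULES = ["json", "sqlite3"]


def missing_modules(names):
    """Return the top-level module names that cannot be imported here."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def check_dependencies(names=REQUIRED_MODULES):
    """Fail fast with a clear message instead of crashing mid-request."""
    missing = missing_modules(names)
    if missing:
        raise SystemExit("missing dependencies: " + ", ".join(missing))
```

Failing at startup makes an incomplete image visible during rollout, not hours later when a rarely used code path first needs the missing module.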

Services in the Background

Most web applications are dependent on other services (e.g., MySQL), but because the application itself has no influence on these services, Wiggins assumes that it does not need to worry about them. Therefore, the admin's task becomes providing a working MySQL database for the application. The app receives the information about where to find the database, along with the configuration, as previously described.
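One common way to pass this information is a single connection URL in the configuration. The following sketch assumes such a URL with a `mysql` scheme; the function name and the returned keys are illustrative choices, and the example host and credentials are made up.

```python
from urllib.parse import urlsplit


def parse_database_url(url):
    """Split a URL such as mysql://user:pw@host:3306/dbname into parts.

    The application never hard-codes where the database lives; swapping
    a self-run MySQL VM for a DBaaS instance only changes this URL.
    """
    parts = urlsplit(url)
    if parts.scheme != "mysql" or not parts.hostname:
        raise ValueError(f"not a usable MySQL URL: {url!r}")
    return {
        "host": parts.hostname,
        "port": parts.port or 3306,  # MySQL's default port
        "user": parts.username,
        "password": parts.password,
        "database": parts.path.lstrip("/"),
    }
```

For example, `parse_database_url("mysql://app:secret@db.example.internal:3307/shop")` yields the host `db.example.internal`, port `3307`, and database `shop`, which can then be handed to whichever database driver the application uses.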

Wiggins takes this one step further: Native apps for the cloud basically make no assumptions at all about how the physical environment in which they run is designed, including the concrete implementation of services, such as persistent storage, or the virtual network. This affects the behavior of the application in several places, such as logging: Conventional applications write their logs to files. Instead, Wiggins urges developers to write all log output to stdout and then let the surrounding platform decide where this output goes.
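With Python's standard `logging` module, this takes only a few lines. The sketch below is one possible setup; the logger name and the message format are arbitrary choices.

```python
import logging
import sys


def make_logger(name="app", stream=None):
    """Return a logger that writes to stdout and never opens a log file.

    Routing, rotation, and storage are left to the platform that
    captures the process output.
    """
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()  # avoid duplicate handlers on repeated calls
    handler = logging.StreamHandler(sys.stdout if stream is None else stream)
    handler.setFormatter(logging.Formatter("%(levelname)s %(name)s %(message)s"))
    logger.addHandler(handler)
    logger.propagate = False  # keep output on this one stream only
    return logger
```

A call such as `make_logger().info("started")` then emits one line on stdout, which a container runtime or PaaS can collect without the application knowing where it ends up.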

Dynamic Development

The need for cloud compatibility affects the way applications are developed. The principle is simple: Strictly separate development from the operation of a solution. The use of development versions is thus ruled out in production operations.

The principle of "release early, release often" also applies: The delta between production and the current development version should be as small as possible. Multiple rollouts per day are therefore preferable to a large rollout every six months. In the same category, within an app, any system should be replaceable at any time by a new version of itself.

Scalability as a Prerequisite

Wiggins' model also stipulates that cloud applications must scale horizontally without limits. For example, if five web servers are performing their service on the platform at any given time, it should be possible to double this number immediately. In PaaS, moreover, the admin no longer controls scaling directly; it becomes the responsibility of the provider.

The Next Step: Microservices

Conventional applications are designed mostly as monolithic software: One large program offers all the functions and typically follows a three-tier design [2]. Many individual modules provide the desired functionality within the software, but a module cannot be used meaningfully without the application and is not executable as a standalone product.

Under these conditions, scalability becomes a problem: Even if only some of the functions used in the program need more instances, the entire monolith must be executed multiple times. Moreover, such applications are prone to error: If a monolithic service in a PaaS environment fails, it is simply not available at all.

Such monolithic software is very difficult to handle, as well. Development can be slow because writing and subsequently testing the code requires a lot of time; monolithic software thus implicitly violates some of the cited 12 rules. Although appropriate for classical environments, conventional applications are simply a nightmare to operate in a cloud environment.

Microservices Are Extremely Modular

With microservices, developers no longer build just one large application; any function that might be a module in monolithic software becomes a separate service. In a microservice architecture, services must communicate with each other via a fixed protocol (e.g., an API), which leads to overhead. In return, the design ensures maximum flexibility: If individual services need to deal with more load, the microservice architecture lets you launch additional instances of just those services, not of the whole program.
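To make the idea concrete, the following sketch uses only the Python standard library to run a tiny price service and a separate order function that consumes it over HTTP and JSON. The service, its `/price/<item>` path, and the catalog are invented for illustration; a real deployment would run the two parts as separate processes or containers.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

PRICES = {"apple": 120, "pear": 95}  # hypothetical catalog, prices in cents

# Opener without proxies, so the demo talks to the local service directly.
_opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))


class PriceHandler(BaseHTTPRequestHandler):
    """The price service: its only interface is GET /price/<item>."""

    def do_GET(self):
        item = self.path.rsplit("/", 1)[-1]
        if self.path.startswith("/price/") and item in PRICES:
            status, payload = 200, {"item": item, "cents": PRICES[item]}
        else:
            status, payload = 404, {"error": "not found"}
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, fmt, *args):
        pass  # keep the demo quiet


def start_price_service(port=0):
    """Start the service in a background thread; port 0 picks a free port."""
    server = ThreadingHTTPServer(("127.0.0.1", port), PriceHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def order_total(base_url, items):
    """The order side: knows only the API, not how prices are stored."""
    total = 0
    for item in items:
        with _opener.open(f"{base_url}/price/{item}", timeout=2) as resp:
            total += json.load(resp)["cents"]
    return total
```

The extra HTTP round trip per lookup is the overhead mentioned above; in exchange, the price service can be scaled, replaced, or rewritten without touching the order code, as long as the API stays fixed.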

Internal redundancy requirements can be met more advantageously with microservices, as well, because if a single sub-service fails, and the application is properly programmed, another instance of the same service simply takes over its tasks.
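What "properly programmed" can mean on the calling side is sketched below: The caller holds a list of equivalent instances and moves on to the next one when a connection fails. The function name is hypothetical, and each instance is modeled as a plain callable to keep the example self-contained.

```python
def call_with_failover(instances, request):
    """Send request to the first instance that answers.

    instances: callables that each represent one running copy of the
    same service and raise ConnectionError when unreachable.
    """
    errors = []
    for instance in instances:
        try:
            return instance(request)
        except ConnectionError as exc:
            errors.append(exc)  # remember the failure, try the next copy
    raise ConnectionError(f"all {len(instances)} instances failed: {errors}")
```

In real platforms, a load balancer or service registry often plays this role, so individual services do not each have to carry their own instance list.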

More Overhead from Many Standards

Although experts largely agree on how native cloud applications differ from conventional programs, the PaaS example on one hand and Docker, Kubernetes, and their kin on the other hand make one thing clear: A gold standard for the operation of native cloud applications does not exist so far. This gap is particularly evident in the question of how such applications should be set up and operated.

From the developer's point of view, distributing an application in prebuilt containers is a convenient approach. However, it does require the customer to find a suitable platform on which the corresponding container will run, which is not currently possible with any of the large public cloud providers, because they have their own ideas of how native apps are to be integrated into their platforms.

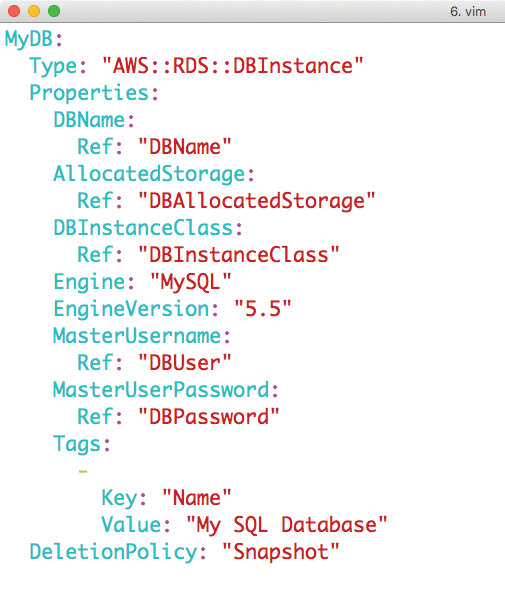

As a prime example, Amazon offers so many features in its AWS cloud that newcomers quickly lose track. From genuine PaaS through semiautomatic platform rollouts via CloudFormation (Amazon's proprietary cloud orchestration service), almost all variants are conceivable. Microsoft's Azure is similar. If you instead choose a public cloud with OpenStack, you will need to adapt your deployment to its specific conditions.

The MySQL example makes this clear: Most popular applications assume that access to some kind of database is available. The conventional approach is to launch a private MySQL on a separate VM on an IaaS basis. Significantly closer to the spirit of PaaS would be to leverage a database specially provided by the cloud (i.e., DBaaS).

If you want to roll out such a setup on Azure, AWS, or OpenStack, though, you will find yourself doing battle with three different orchestration tools (Figure 4). Although the application perhaps approaches a native app, deployment of the software is complicated and is strongly dependent on the selected target platform.

Greetings from the Container Universe

A look into the world of containers provides a sure indicator that microservices are winning the day. For years, Docker, in particular, has been forging ahead in the same direction as native cloud applications: Docker allows developers to package their applications so that other users can simply download and deploy prebuilt containers.

Many practical examples show how admins should not use containers: A huge Docker container with a LAMP stack, in which the admin has made untraceable, manual changes, is a disaster in terms of operation and maintenance. Because Apache, MySQL, and similar services are not native cloud apps, you will have to make trade-offs in many places.

Native cloud applications leverage containers in a better way: A properly optimized workflow allows program authors to package the individual microservices of a cloud application cleanly in standard containers. In the best case, automatically creating appropriate container images will be part of the release process: Whereas the customary process of creating software typically produces a tarball as the final release, a standardized Docker container could just as easily be the result of the release process for native cloud applications.

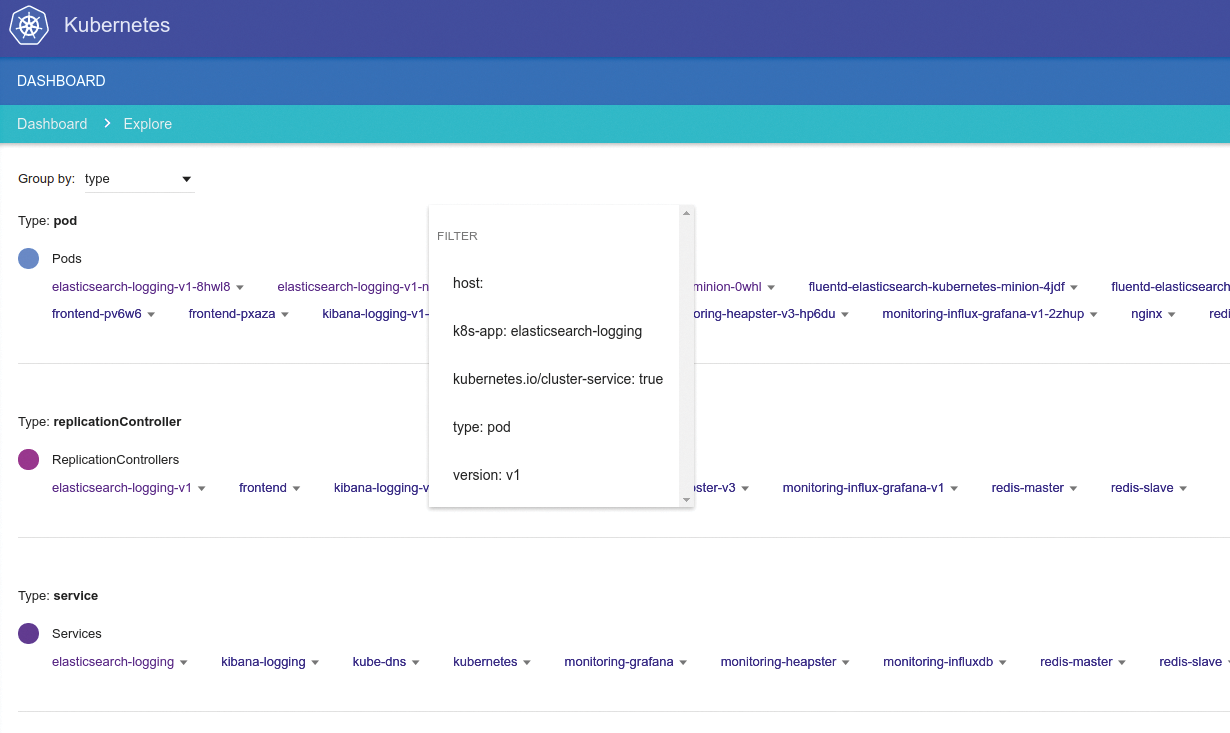

On platforms like Kubernetes or Docker Swarm, rolling out the relevant application then becomes child's play: Kubernetes, in particular, with its principle of pods and individual containers, is made for native cloud applications (Figure 5). Unlike with PaaS, developers have to take care of a part of the operating system. On the other hand, you can guarantee that the entire environment will roll out on any platform compatible with Kubernetes or Docker without additional overhead.

Conclusions

Changes in IT are usually lengthy processes. The cloud itself is the best example. Although several cloud offerings have existed for years, companies are only gradually switching to the new system, and native cloud applications will foreseeably take many years to spread globally.

Applications that have been built especially for clouds are different in many ways from their conventional predecessors and make optimum use of the services typically offered in clouds. Cloud providers and the companies that operate a solution in the cloud face a new situation: From the corporate perspective, the single virtual instance plays virtually no role. Customers are far more likely to expect a cloud provider to offer the necessary services as PaaS.

In a win-win scenario, the supplier can rely on appropriate standards and thus noticeably facilitate the development and maintenance of the system. In return, customers achieve considerably more flexibility: An application that runs in one cloud will run in another, although still with some imperfections. The ideal state for a software author would be a single container image or a single version of their software to roll out and support in any environment, but so far, the current offerings lack standardization.

The principle of native cloud applications is thus only at the beginning of its story, but with the spread of the cloud and the increasingly powerful services offered by public cloud providers, the principle will definitely pick up speed in the future. Both providers and customers have a strong interest in speeding up the process.